Preface. This is not really a book review; it is more a selection of my Kindle notes. Heinberg writes so well, so clearly, that I am sure history will remember him as the most profound and wide-ranging expert on energy and ecological overshoot. Just a few of the topics in this book include:

- The depletion of important resources including fossil fuels and minerals

- The proliferation of environmental impacts arising from both the extraction and use of resources (including the burning of fossil fuels)—leading to snowballing costs from both these impacts themselves and from efforts to avert them and clean them up

- Financial disruptions due to the inability of our existing monetary, banking, and investment systems to adjust to both resource scarcity and soaring environmental costs—and their inability (in the context of a shrinking economy) to service the enormous piles of government and private debt that have been generated over the past couple of decades.

As always, I noted only what interested me. So much is left out, so do buy this book! And not just for yourself — I write a lot about why the electric grid will eventually come down for good in both of my Springer Books, so buy it for your grandchildren to preserve knowledge and so that future generations will understand why collapse happened.

Alice Friedemann www.energyskeptic.com author of 2021 “Life After Fossil Fuels: A Reality Check on Alternative Energy”; 2015 “When Trucks Stop Running: Energy and the Future of Transportation”; “Barriers to Making Algal Biofuels”; and “Crunch! Whole Grain Artisan Chips and Crackers”. Podcasts: Crazy Town, Collapse Chronicles, Derrick Jensen, Practical Prepping, KunstlerCast 253, KunstlerCast 278, Peak Prosperity, XX2 report

***

Richard Heinberg. 2011. The End of Growth: Adapting to Our New Economic Reality. New Society Publishers.

The Deepwater Horizon incident also illustrates to some degree the knock-on effects of depletion and environmental damage upon financial institutions. Insurance companies have been forced to raise premiums on deepwater drilling operations, and impacts to regional fisheries have hit the Gulf Coast economy hard.

payments forced the company to reorganize and resulted in lower stock values and returns to investors. BP’s financial woes in turn impacted British pension funds that were invested in the company. This is just one event—admittedly a spectacular one. If it were an isolated problem, the economy could recover and move on. But we are, and will be, seeing a cavalcade of environmental and economic disasters, not obviously related to one another, that will stymie economic growth in more and more ways. These will include but are not limited to:

- Climate change leading to regional droughts, floods, and even famines

- Shortages of water and energy

- Waves of bank failures, company bankruptcies, and house foreclosures

Each will be typically treated as a special case, a problem to be solved so that we can get “back to normal.” But in the final analysis, they are all related, in that they are consequences of growing human population striving for higher per-capita consumption of limited resources (including non-renewable, climate-altering fossil fuels), all on a finite and fragile planet.

The result: we are seeing a perfect storm of converging crises that together represent a watershed moment in the history of our species. We are witnesses to, and participants in, the transition from decades of economic growth to decades of economic contraction.

We are adding about 70 million new “consumers” each year. That makes further growth even more crucial: if the economy stagnates, there will be fewer goods and services per capita to go around.

We harnessed the energies of coal, oil, and natural gas to build and operate cars, trucks, highways, airports, airplanes, and electric grids—all the essential features of modern industrial society. Through the one-time-only process of extracting and burning hundreds of millions of years’ worth of chemically stored sunlight, we built what appeared (for a brief, shining moment) to be a perpetual-growth machine. We learned to take what was in fact an extraordinary situation for granted. It became normal.

But as the era of cheap, abundant fossil fuels comes to an end, our assumptions about continued expansion are being shaken to their core. The end of growth is a very big deal indeed. It means the end of an era, and of our current ways of organizing economies, politics, and daily life. Without growth, we will have to virtually reinvent human life on Earth.

World leaders, if they are deluded about our actual situation, are likely to delay putting in place the support services that can make life in a non-growing economy survivable, and they will almost certainly fail to make needed, fundamental changes to monetary, financial, food, and transport systems. As a result, what could have been a painful but endurable process of adaptation could become history’s greatest tragedy. We can survive the end of growth, but only if we recognize it for what it is and act accordingly.

As early as 1998, petroleum geologists Colin Campbell and Jean Laherrère were discussing a Peak Oil impact scenario that went like this. Sometime around the year 2010, they theorized, stagnant or falling oil supplies would lead to soaring and more volatile petroleum prices, which would precipitate a global economic crash. This rapid economic contraction would in turn lead to sharply curtailed energy demand, so oil prices would then fall; but as soon as the economy regained strength, demand for oil would recover, prices would again soar, and as a result of that the economy would relapse. This cycle would continue, with each recovery phase being shorter and weaker, and each crash deeper and harder, until the economy was in ruins. Financial systems based on the assumption of continued growth would implode, causing more social havoc than the oil price spikes would themselves generate.

Meanwhile, volatile oil prices would frustrate investments in energy alternatives: one year, oil would be so expensive that almost any other energy source would look cheap by comparison; the next year, the price of oil would have fallen far enough that energy users would be flocking back to it, with investments in other energy sources looking foolish. But low oil prices would discourage exploration for more petroleum, leading to even worse fuel shortages later on. Investment capital would be in short supply in any case because the banks would be insolvent due to the crash, and governments would be broke due to declining tax revenues. Meanwhile, international competition for dwindling oil supplies might lead to wars between petroleum importing nations, between importers and exporters, and between rival factions within exporting nations.

But what happened next riveted the world’s attention to such a degree that the oil price spike was all but forgotten: in September 2008, the global financial system nearly collapsed. The reasons for this sudden, gripping crisis apparently had to do with housing bubbles, lack of proper regulation of the banking industry, and the over-use of bizarre financial products that almost nobody understood. However, the oil price spike had played a critical (if largely overlooked) role in initiating the economic meltdown.

In the immediate aftermath of that global financial near-death experience, both the Peak Oil impact scenario proposed a decade earlier and the Limits to Growth standard-run scenario of 1972 seemed to be confirmed with uncanny and frightening accuracy. Global trade was falling. The world’s largest auto companies were on life support. The U.S. airline industry had shrunk by almost a quarter. Food riots were erupting in poor nations around the world. Lingering wars in Iraq (the nation with the world’s second-largest crude oil reserves) and Afghanistan (the site of disputed oil and gas pipeline projects) continued to bleed the coffers of the world’s foremost oil-importing nation.

Meanwhile, the debate about what to do to rein in global climate change exemplified the political inertia that had kept the world on track for calamity since the early ’70s. It had by now become obvious to nearly every person of modest education and intellect that the world has two urgent, incontrovertible reasons to rapidly end its reliance on fossil fuels: the twin threats of climate catastrophe and impending constraints to fuel supplies. Yet at the Copenhagen climate conference in December, 2009, the priorities of the most fuel-dependent nations were clear: carbon emissions should be cut, and fossil fuel dependency reduced, but only if doing so does not threaten economic growth.

We must convince ourselves that life in a non-growing economy can be fulfilling, interesting, and secure. The absence of growth does not necessarily imply a lack of change or improvement. Within a non-growing or equilibrium economy there can still be continuous development of practical skills, artistic expression, and certain kinds of technology. In fact, some historians and social scientists argue that life in an equilibrium economy can be superior to life in a fast-growing economy: while growth creates opportunities for some, it also typically intensifies competition—there are big winners and big losers, and (as in most boom towns) the quality of relations within the community can suffer as a result. Within a non-growing economy it is possible to maximize benefits and reduce factors leading to decay, but doing so will require pursuing appropriate goals: instead of more, we must strive for better; rather than promoting increased economic activity for its own sake, we must emphasize whatever increases quality of life without stoking consumption. One way to do this is to reinvent and redefine growth itself.

“Classical” economic philosophers such as Adam Smith (1723–1790), Thomas Robert Malthus (1766–1834), and David Ricardo (1772–1823) introduced basic concepts such as supply and demand, division of labor, and the balance of international trade.

These pioneers set out to discover natural laws in the day-to-day workings of economies. They were striving, that is, to make of economics a science. They admired the ability of physicists, biologists, and astronomers to demonstrate the fallacy of old church doctrines, and to establish new universal “laws” by means of inquiry and experiment.

Economic philosophers, for their part, could point to price as arbiter of supply and demand, acting everywhere to allocate resources far more effectively than any human manager or bureaucrat could ever possibly do—surely this was a principle as universal and impersonal as the force of gravitation!

The classical theorists gradually adopted the math and some of the terminology of science. Unfortunately, however, they were unable to incorporate into economics the basic

Economic theory required no falsifiable hypotheses and demanded no repeatable controlled experiments. Economists began to think of themselves as scientists, while in fact their discipline remained a branch of moral philosophy—as it largely does to this day.

Importantly, these early philosophers had some inkling of natural limits and anticipated an eventual end to economic growth. The essential ingredients of the economy were understood to consist of labor, land, and capital. There was on Earth only so much land (which in these theorists’ minds stood for all natural resources), so of course at some point the expansion of the economy would cease. Both Malthus and Smith explicitly held this view. A somewhat later economic philosopher, John Stuart Mill (1806–1873), put the matter as follows: “It must always have been seen, more or less distinctly, by political economists, that the increase in wealth is not boundless: that at the end of what they term the progressive state lies the stationary state…”

But starting with Adam Smith, the idea that continuous “improvement” in the human condition was possible came to be generally accepted.

A key to this transformation was the gradual deletion by economists of land from the theoretical primary ingredients of the economy (increasingly, only labor and capital really mattered—land having been demoted to a sub-category of capital). This was one of the refinements that turned classical economic theory into neoclassical economics; others included the theories of utility maximization and rational choice.

While this shift began in the 19th century, it reached its fruition in the 20th through the work of economists who explored models of imperfect competition, and theories of market forms and industrial organization, while emphasizing tools such as the marginal revenue curve (this is when economics came to be known as “the dismal science”—partly because its terminology was, perhaps intentionally, increasingly mind-numbing). Meanwhile, however, the most influential economist of the 19th century, a philosopher named Karl Marx, had thrown a metaphorical bomb through the window of the house that Adam Smith had built. In his most important book, Das Kapital, Marx proposed a name for the economic system that had evolved since the Middle Ages: capitalism. It was a system founded on capital. Many people assume that capital is simply another word for money, but that entirely misses the essential point: capital is wealth—money, land, buildings, or machinery—that has been set aside for production of more wealth. If you use your entire weekly paycheck for rent, groceries, and other necessities, you may have money but no capital. But even if you are deeply in debt, if you own stocks or bonds, or a computer that you use for a home-based business, you have capital. Capitalism, as Marx defined it, is a system in which productive wealth is privately owned. Communism (which Marx proposed as an alternative) is one in which productive wealth is owned by the community, or by the nation on behalf of the people. In any case, Marx said, capital tends to grow.

Marx also wrote that capitalism is inherently unsustainable, in that when the workers become sufficiently impoverished by the capitalists, they will rise up and overthrow their bosses and establish a communist state (or, eventually, a stateless workers’ paradise). The ruthless capitalism of the 19th century resulted in booms and busts, and a great increase in inequality of wealth—and therefore an increase in social unrest. With the depression of 1893 and the crash of 1907, and finally the Great Depression of the 1930s, it appeared to many social commentators of the time that capitalism was indeed failing, and that Marx-inspired uprisings were inevitable; the Bolshevik revolt in 1917 served as a stark confirmation of those hopes or fears (depending on one’s point of view).

The next few decades saw a three-way contest between the Keynesian social liberals, the followers of Marx, and temporarily marginalized neoclassical or neoliberal economists who insisted that social reforms and Keynesian meddling by government with interest rates, spending, and borrowing merely impeded the ultimate efficiency of the free Market.

With the fall of the Soviet Union at the end of the 1980s, Marxism ceased to have much of a credible voice in economics. Its virtual disappearance from the discussion created space for the rapid rise of the neoliberals, who for some time had been drawing energy from widespread reactions against the repression and inefficiencies of state-run economies. Margaret Thatcher and Ronald Reagan both relied heavily on advice from neoliberal economists of the Chicago School.

One of the most influential libertarian, free-market economists of recent decades was Alan Greenspan (b. 1926), who, as U.S. Federal Reserve Chairman from 1987 to 2006, argued for privatization of state-owned enterprises and de-regulation of businesses—yet Greenspan nevertheless ran an activist Fed that expanded the nation’s money supply in ways and to degrees that neither Friedman nor Hayek would have approved of. As

There is a saying now in Russia: Marx was wrong in everything he said about communism, but he was right in everything he wrote about capitalism. Since the 1980s, the nearly worldwide re-embrace of classical economic philosophy has predictably led to increasing inequalities of wealth within the U.S. and other nations, and to more frequent and severe economic bubbles and crashes. Which brings us to the global crisis that began in 2008. By this time all mainstream economists (Keynesians and neoliberals alike) had come to assume that perpetual growth is the rational and achievable goal of national economies. The discussion was only about how to maintain it—through government intervention or a laissez-faire approach that assumes the Market always knows best. But

It is clearly a challenge to the neoliberals, whose deregulatory policies were largely responsible for creating the housing bubble whose implosion is generally credited with stoking the crisis. But it is a conundrum also for the Keynesians, whose stimulus packages have failed in their aim of increasing employment and general economic activity. What we have, then, is a crisis not just of the economy, but also of economic theory and philosophy.

The ideological clash between Keynesians and neoliberals (represented to a certain degree in the escalating all-out warfare between the U.S. Democratic and Republican political parties) will no doubt continue and even intensify. But the ensuing heat of battle will yield little light if both philosophies conceal the same fundamental errors. One such error is of course the belief that economies can and should perpetually grow. But that error rests on another that is deeper and subtler. The subsuming of land within the category of capital by nearly all post-classical economists had amounted to a declaration that Nature is merely a subset of the human economy—an endless pile of resources to be transformed into wealth. It also meant that natural resources could always be substituted with some other form of capital—money or technology. The reality, of course, is that the human economy exists within, and entirely depends upon Nature, and many natural resources have no realistic substitutes. This fundamental logical and philosophical mistake, embedded at the very heart of modern mainstream economic philosophies, set society directly upon a course toward the current era of climate change and resource depletion, and its persistence makes conventional economic theories—of both Keynesian and neoliberal varieties—utterly incapable of dealing with the economic and environmental survival threats to civilization in the 21st century.

For help, we can look to the ecological and biophysical economists, whose ideas have been thoroughly marginalized by the high priests and gatekeepers of mainstream economics—and, […] spectacular growth of debt—in obvious and subtle forms—that has occurred during the past few decades. That phenomenon in turn must be seen in light of the business cycles that characterize economic activity in modern industrial societies, and the central banks that have been set up to manage them.

We’ve already noted how nations learned to support the fossil fuel-stoked growth of their physical economies by increasing their money supply via fractional reserve banking. As money was gradually (and finally completely) de-linked from physical substance (i.e., precious metals), the creation of money became tied to the making of loans by commercial banks.

This meant that the supply of money was entirely elastic—as much could be created as was needed, and the amount in circulation could contract as well as expand. And the growth of money was tied to the growth of debt. The system is dynamic and unstable, and this instability manifests in the business cycle.

In the expansionary phase of the cycle, businesses see the future as rosy, and therefore take out loans to build more productive capacity and hire new workers. Because many businesses are doing this at the same time, the pool of available workers shrinks; so, to attract and keep the best workers, businesses have to raise wages. With wages rising, worker-consumers have more money in their pockets. Worker-consumers spend much of that money on products from the businesses that hire them, helping spread even more optimism about the future. Amid all this euphoria, worker-consumers go into debt based on the expectation that their wages will continue to grow, making it easy to repay loans. Businesses go into debt expanding their productive capacity. Real estate prices go up because of rising demand (former renters deciding they can now afford to buy), which means that houses are worth more as collateral if existing homeowners want to take out big loans to do some remodeling or to buy a new car. All of this borrowing and spending increases the money supply and the velocity of money.

At some point, however, the overall mood of the country changes. Businesses have invested in as much productive capacity as they are likely to need for a while. They feel they have taken on as much debt as they can handle, and don’t feel the need to hire more employees. Upward pressure on wages ceases, and that helps dampen the general sense of optimism about the economy. Workers likewise become shy about taking on more debt, as they are unsure whether they will be able to make payments. Instead, they concentrate on paying off existing debts. With fewer loans being written, less new money is being created; meanwhile, as earlier loans are paid off, money effectively disappears from the system. The nation’s money supply contracts in a self-reinforcing spiral.

But if people increase their savings during this downward segment of the cycle, they eventually will feel more secure and therefore more willing to begin spending again. Also, businesses will eventually have liquidated much of their surplus productive capacity and thereby reduced their debt burden. This sets the stage for the next expansion phase.
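The mechanism described above (new loans create money, repayments remove it) can be sketched as a toy model. This is only an illustration, not anything from the book: the starting money supply and the loan and repayment figures are made-up numbers, and interest, defaults, and central bank action are all ignored.

```python
def simulate_money_supply(initial, new_loans, repayments):
    """Track a toy money supply where each new loan adds money
    and each repayment of principal removes it."""
    supply = initial
    path = [supply]
    for lent, repaid in zip(new_loans, repayments):
        supply += lent - repaid
        path.append(supply)
    return path

# Hypothetical figures: three expansion years (lending outpaces repayment)
# followed by three contraction years (borrowers pay down existing debt).
new_loans = [120, 140, 160, 60, 40, 30]
repayments = [80, 90, 100, 110, 110, 100]

path = simulate_money_supply(1000, new_loans, repayments)
for year, supply in enumerate(path):
    print(f"year {year}: money supply = {supply}")
```

In this sketch the supply rises while new lending exceeds repayments and shrinks once that reverses, which is the expansion and contraction pattern the passage describes.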

A bubble consists of trade in high volumes at prices that are considerably at odds with intrinsic values, but the word can also be used more broadly to refer to any instance of rapid expansion of currency or credit that’s not sustainable over the long run. Bubbles always end with a crash—a rapid, sharp decline in asset values.

The upsides and downsides of the business cycle are reflected in higher or lower levels of inflation. Inflation is often defined in terms of higher wages and prices, but (as the Austrian economists have persuasively argued) wage and price inflation is actually just the symptom of an increase in the money supply relative to the amounts of goods and services being traded, which in turn is typically the result of exuberant borrowing and spending. The downside of the business cycle, in the worst instance, can produce the opposite of inflation, or deflation. Deflation manifests as declining wages and prices, consequent upon a declining money supply relative to goods and services traded, due to a contraction of borrowing and spending.

As we have seen, bubbles are a phenomenon generally tied to speculative investing. But in a larger sense our entire economy has assumed the characteristics of a bubble—even a Ponzi scheme. That is because it has come to depend upon staggering and continually expanding amounts of debt: government and private debt; debt in the trillions, and tens of trillions, and hundreds of trillions of dollars; debt that, in aggregate, has grown by 500 percent since 1980; debt that has grown faster than economic output (measured in GDP) in all but one of the past 50 years; debt that can never be repaid; debt that represents claims on quantities of labor and resources that simply do not exist.

Looking at the problem close up, the globalization of the economy looms as a prominent factor. In the 1970s and ’80s, with stiffer environmental and labor standards to contend with domestically, corporations began eyeing the regulatory vacuum, cheap labor, and relatively untouched natural resource base of less-industrialized nations as a potential goldmine. International investment banks started loaning poor nations enormous sums to pay for ill-advised infrastructure projects (and, incidentally, to pay kickbacks to corrupt local politicians), later requiring these countries to liquidate their natural resources at fire-sale prices so as to come up with the cash required to make loan payments. Then, prodded by corporate interests, industrialized nations pressed for the liberalization of trade rules via the World Trade Organization (the new rules almost always subtly favored the wealthier trading partner).

All of this led predictably to a reduction of manufacturing and resource extraction in core industrial nations, especially the U.S. (many important resources were becoming depleted in the wealthy industrial nations anyway), and a steep increase in resource extraction and manufacturing in several “developing” nations, principally China. Reductions in domestic manufacturing and resource extraction in turn motivated investors within industrial nations to seek profits through purely financial means. As a result of these trends, there are now as many Americans employed in manufacturing as there were in 1940, when the nation’s population was roughly half what it is today—while the proportion of total U.S. economic activity deriving from financial services has tripled during the same period. And speculative investing has become an accepted practice that is taught in top universities and institutionalized in the world’s largest corporations.

The most important financial development during the 1970s was the growth of securitization—a financial practice of pooling various types of contractual debt (such as residential mortgages, commercial mortgages, auto loans, or credit card debt obligations) and selling it to investors in the form of bonds, pass-through securities, or collateralized mortgage obligations (CMOs). The principal and interest on the debts underlying the security are paid back to investors regularly. Securitization provided an avenue for more investors to fund more debt. In effect, securitization caused (or allowed) claims on wealth to increase far above previous levels.

In 1970 the top 100 CEOs earned about $45 for every dollar earned by the average worker; by 2008 the ratio was over 1,000 to one.

In the 1990s, as the surplus of financial capital continued to grow, investment banks began inventing a slew of new securities with high yields. In assessing these new products, rating agencies used mathematical models that, in retrospect, seriously underestimated their levels of risk. Until the early 1970s, bond credit ratings agencies had been paid for their work by investors who wanted impartial information on the credit worthiness of securities issuers and their various offerings. Starting in the early 1970s, the “Big Three” ratings agencies (Standard & Poor’s, Moody’s, and Fitch) were paid instead by the securities issuers for whom they issued those ratings. This eventually led to ratings agencies actively encouraging the issuance of collateralized debt obligations (CDOs). The Clinton administration adopted “affordable housing” as one of its explicit goals (this didn’t mean lowering house prices; it meant helping Americans get into debt), and over the decade the percentage of Americans owning their homes increased 7.8 percent. This initiated a persistent upward trend in real estate prices.

In the late 1990s investors piled into Internet-related stocks, creating a speculative bubble. The dot-com bubble burst in 2000 (as with all bubbles, it was only a matter of “when,” not “if”), and a year later the terrifying crimes of September 11, 2001 resulted in a four-day closure of U.S. stock exchanges and history’s largest one-day decline in the Dow Jones Industrial Average. These events together triggered a significant recession. Seeking to counter a deflationary trend, the Federal Reserve lowered its federal funds rate target from 6.5 percent to 1.0 percent, making borrowing more affordable. Downward pressure on interest rates was also coming from the nation’s high and rising trade deficit. Every nation’s balance of payments must sum to zero, so if a nation is running a current account deficit it must balance that amount by earning from foreign investments, by running down reserves, or by obtaining loans from other countries. In other words, a country that imports more than it exports must borrow to pay for those imports. Hence American imports had to be offset by large and growing amounts of foreign investment capital flowing into the U.S. Higher bond prices attract more investment capital, but there is an inevitable inverse relationship between bond prices and interest rates, so trade deficits tend to force interest rates down. Foreign investors had plenty of funds to lend, either because they had very high personal savings rates (in China, up to 40 percent of income saved), or because of high oil prices (think OPEC). A torrent of funds—it’s been called a “Giant Pool of Money” that roughly doubled in size from 2000 to 2007, reaching $70 trillion—was flowing into the U.S. financial markets. While foreign governments were purchasing U.S. Treasury bonds, thus avoiding much of the impact of the eventual crash, other foreign investors, including pension funds,
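The inverse relationship between bond prices and interest rates mentioned in the passage above can be illustrated with a small calculation. This is a minimal sketch using the simple "current yield" measure (annual coupon divided by market price); the coupon and prices are hypothetical numbers chosen for illustration, not figures from the book.

```python
def current_yield(annual_coupon: float, price: float) -> float:
    """Return the current yield, in percent, of a bond paying a fixed
    annual coupon when bought at the given market price."""
    return 100.0 * annual_coupon / price

# Hypothetical bond: pays $50 a year regardless of what it trades for.
COUPON = 50.0

for price in (900.0, 1000.0, 1100.0):
    print(f"price ${price:,.0f} -> yield {current_yield(COUPON, price):.2f}%")
```

Because the coupon is fixed, a buyer who pays more for the bond earns a lower rate on the money invested. That is why strong foreign demand for U.S. bonds, which pushes their prices up, tends to push interest rates down.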

By this time a largely unregulated “shadow banking system,” made up of hedge funds, money market funds, investment banks, pension funds, and other lightly-regulated entities, had become critical to the credit markets and was underpinning the financial system as a whole. But the shadow “banks” tended to borrow short-term in liquid markets to purchase long-term, illiquid, and risky assets, profiting on the difference between lower short-term rates and higher long-term rates. This meant that any disruption in credit markets would result in rapid deleveraging, forcing these entities to sell long-term assets (such as MBSs) at depressed prices.

Between 1997 and 2006, the price of the typical American house increased by 124%.
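To put that figure in perspective, a total increase can be converted into an equivalent compound annual rate. This is a rough sketch that assumes the 124% rise was spread over nine years (1997 to 2006) and compounded evenly, which real house prices did not do.

```python
def annualized_rate(total_increase_pct: float, years: int) -> float:
    """Convert a total percentage increase over a number of years into
    the equivalent compound annual growth rate, in percent."""
    growth_factor = 1.0 + total_increase_pct / 100.0
    return 100.0 * (growth_factor ** (1.0 / years) - 1.0)

# Assumption: 1997 to 2006 is treated as nine full years.
rate = annualized_rate(124.0, 9)
print(f"Equivalent annual house price growth: {rate:.1f}%")
```

Under those assumptions the increase works out to a little over 9 percent a year, compounded.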

People bragged that their houses were earning more than they were, believing that the bloating of house values represented a flow of real money that could be tapped essentially forever. In a sense this money was being stolen from the next generation: younger first-time buyers had to burden themselves with unmanageable debt in order to enter the market, while older homeowners who bought before the bubble were able to sell, downsize, and live on the profit.

For a brief time between 2006 and mid-2008, investors fled toward futures contracts in oil, metals, and food, driving up commodities prices worldwide. Food riots erupted in many poor nations, where the cost of wheat and rice doubled or tripled. In part, the boom was based on a fundamental economic trend: demand for commodities was growing—due in part to the expansion of economies in China, India, and Brazil—while supply growth was lagging. But speculation forced prices higher and faster than physical shortage could account for. For Western economies, soaring oil prices had a sharp recessionary impact, with already cash-strapped new homeowners now having to spend eighty to a hundred dollars every time they filled the tank in their SUV. The auto, airline, shipping, and trucking industries were sent reeling.

The U.S. real estate bubble of the early 2000s was the largest (in terms of the amount of capital involved) in history. And its crash carried an eerie echo of the 1930s: Austrian and Post-Keynesian economists have argued that it wasn’t the stock market crash that drove the Great Depression so much as farm failures making it impossible for farmers to make mortgage payments—along with housing bubbles in Florida, New York, and Chicago.

Real estate bubbles are essentially credit bubbles, because property owners generally use borrowed money to purchase property (this is in contrast to currency bubbles, in which nations inflate their currency to pay off government debt). The amount of outstanding debt soars as buyers flood the market, bidding property prices up to unrealistic levels and taking out loans they cannot repay. Too many houses and offices are built, and materials and labor are wasted in building them. Real estate bubbles also lead to an excess of homebuilders, who must retrain and retool when the bubble bursts. These kinds of bubbles lead to systemic crises affecting the economic integrity of nations. Indeed, the housing bubble of the early 2000s had become the oxygen of the U.S. economy—the source of jobs, the foundation for Wall Street’s recovery from the dot-com bust, the attractant for foreign capital, the basis for household wealth accumulation and spending. Its bursting changed everything.

And there is reason to think it has not fully deflated: commercial real estate may be waiting to exhale next. Over the next five years, about $1.4 trillion in commercial real estate loans will reach the end of their terms and require new financing. Commercial property values have fallen more than 40 percent nationally since their 2007 peak, so nearly half the loans are underwater. Vacancy rates are up and rents are down. The impact of the real estate crisis on banks is profound, and goes far beyond defaults upon outstanding mortgage contracts: systemic dependence on MBSs, CDOs, and derivatives means many of the banks, including the largest, are effectively insolvent and unable to take on more risk (we’ll see why in more detail in the next section). The demographics are not promising for a recovery of the housing market anytime soon: the oldest of the Baby Boomers are 65 and entering retirement. Few have substantial savings; many had hoped to fund their golden years with house equity—and to realize that, they must sell. This will add more houses to an already glutted market, driving prices down even further.

There are practical limits to debt within such a system. What are those limits likely to be, and how close are we to hitting them? They are likely to show up in somewhat different ways for each of the four categories of debt (government, household, corporate, and financial). With government debt, problems arise when required interest payments become a substantial fraction of tax revenues. Currently for the U.S., the total Federal budget amounts to about $3.5 trillion, of which 12 percent (or $414 billion) goes toward interest payments. But in 2009, tax revenues amounted to only $2.1 trillion; thus interest payments currently consume almost 20 percent, or nearly one-fifth, of tax revenues.
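The two percentages quoted above can be checked with a few lines of arithmetic. This is only an illustrative sketch: the variable and function names are my own, and the dollar figures are the ones given in the text.

```python
# Figures quoted in the text, in billions of dollars.
federal_budget = 3500.0       # total Federal budget
interest_payments = 414.0     # annual interest on Federal debt
tax_revenues_2009 = 2100.0    # 2009 tax revenues


def share(part, whole):
    """Return `part` as a percentage of `whole`."""
    return 100.0 * part / whole


# Interest measured against total spending vs. against actual revenues.
interest_share_of_budget = share(interest_payments, federal_budget)
interest_share_of_revenue = share(interest_payments, tax_revenues_2009)

print(f"Interest as share of budget:   {interest_share_of_budget:.1f}%")
print(f"Interest as share of revenues: {interest_share_of_revenue:.1f}%")
```

The gap between the two results is the point of the passage: the same interest bill looks much heavier when measured against revenues than against a budget that is partly funded by borrowing.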

By the time the debt reaches $20 trillion, roughly ten years from now, interest payments may constitute the largest Federal budget outlay category, eclipsing even military expenditures. If Federal tax revenues haven’t increased by that time, Federal government debt interest payments will be consuming 20 percent of them.

Once 100 percent of tax revenues have to go toward interest payments and all government operations have to be funded with more borrowing—on which still more interest will have to be paid—the system will have arrived at a kind of financial singularity: a black hole of debt, if you will. But in all likelihood we would not have to get to that ultimate impasse before serious problems appear.

Many economic wags suggest that when government has to spend 30 percent of tax receipts on interest payments, the country is in a debt trap from which there is no easy escape. Given current trajectories of government borrowing and interest rates, that 30 percent mark could be hit in just a few years. Even before then, U.S. creditworthiness and interest costs will take a beating.

However, some argue that limits to government debt (due to snowballing interest payments) need not be a hard constraint—especially for a large nation, like the U.S., that controls its own currency. The United States government is constitutionally empowered to create money, including creating money to pay the interest on its debts. Or, the government could in effect loan the money to itself via its central bank, which would then rebate interest payments back to the Treasury (this is in fact what the Treasury and Fed are doing with Quantitative Easing 2).

The most obvious complication that might arise is this: If at some point general confidence that external U.S. government debt (i.e., money owed to private lenders or other nations) could be repaid with debt of equal “value” were deeply and widely shaken, potential buyers of that debt might decide to keep their money under the metaphorical mattress (using it to buy factories or oilfields instead), even if doing so posed its own set of problems. Then the Fed would become virtually the only available buyer of government debt, which might eventually undermine confidence in the currency, possibly igniting a rapid spiral of refusal that would end only when the currency failed. There are plenty of historic examples of currency failures, so this would not be a unique occurrence.

But as long as deficit spending doesn’t exceed certain bounds, and as long as the economy resumes growth in the not-too-distant future, then it can be sustained for quite some time. Ponzi schemes theoretically can continue forever—if the number of potential participants is infinite. The absolute size of government debt is not necessarily a critical factor, as long as future growth will be sufficient so that the proportion of debt relative to revenues remains the same. Even an increase in that proportion is not necessarily cause for alarm, as long as it is only temporary. This, at any rate, is the Keynesian argument. Keynesians would also point out that government debt is only one category of total debt, and that U.S. government debt hasn’t grown proportionally relative to other categories of debt to any alarming degree (until the current recession).

Baby Boomers (the most numerous demographic cohort in the nation’s history, encompassing 70 million Americans) are reaching retirement age, which means that their lifetime spending cycle has peaked. It’s not that Boomers won’t continue to buy things (everybody has to eat), but their aggregate spending is unlikely to increase, given that cohort members’ savings are, on average, inadequate for retirement (one-third of them have no savings whatever). Out of necessity, Boomers will be saving more from now on, and spending less. And that won’t help the economy grow.

When demand for products declines, corporations aren’t inclined to borrow to increase their productive capacity. Even corporate borrowing aimed at increasing financial leverage has limits. Too much corporate debt reduces resiliency during slow periods—and the future is looking slow for as far as the eye can see. Durable goods orders are down, housing starts and new home sales are down, savings are up. As a result, banks don’t want to lend to companies, because the risk of default on such loans is now perceived as being higher than it was a few years ago; in addition, the banks are reluctant to take on more risk of any sort given the fact that many of the assets on their balance sheets consist of now-worthless derivatives and CDOs. Meanwhile, ironically and perhaps surprisingly, U.S. corporations are sitting on over a trillion dollars because they cannot identify profitable investment opportunities and because they want to hang onto whatever cash they have in anticipation of continued hard times. If only we could get to the next upside business cycle, then more corporate debt would be justified for both lenders and borrowers. But so far confidence in the future is still weak.

One of the main reforms enacted during the Great Depression, contained in the Glass–Steagall Act of 1933, was a requirement that commercial banks refrain from acting as investment banks. In other words, they were prohibited from dealing in stocks, bonds, and derivatives. This prohibition was based on an implicit understanding that there should be some sort of firewall within the financial system separating productive investment from pure speculation, or gambling. This firewall was eliminated by the passage of the Gramm–Leach–Bliley Act of 1999 (for which the financial services industry lobbied tirelessly). As a result, all large U.S. banks have for the past decade become deeply engaged in speculative investment, using both their own and their clients’ money. With derivatives, since there is no requirement to own the underlying asset, and since there is often no requirement of evidence of ability to cover the bet, there is no effective limit to the amount that can be wagered. It’s true that many derivatives largely cancel each other out, and that their ostensible purpose is to reduce financial risk. Nevertheless, if a contract is settled, somebody has to pay—unless they can’t.

In the heady years of the 2000s, even the largest and most prestigious banks engaged in what can only be termed criminally fraudulent behavior on a massive scale. As revealed in sworn Congressional testimony, firms including Goldman Sachs deliberately created flawed securities and sold tens of billions of dollars’ worth of them to investors, then took out many more billions of dollars’ worth of derivatives contracts essentially betting against the securities they themselves had designed and sold. They were quite simply defrauding their customers, which included foreign and domestic pension funds. To date, no senior executive with any bank or financial services firm has been prosecuted for running these scams. Instead, most of the key figures are continuing to amass immense personal fortunes, confident no doubt that what they were doing—and in many cases continue to do—is merely a natural extension of the inherent logic of their industry. The degree and concentration of exposure on the part of the biggest banks with regard to derivatives was and is remarkable: as of 2005, JP Morgan Chase, Bank of America, Citibank, Wachovia, and HSBC together accounted for 96 percent of the $100 trillion of derivatives contracts held by 836 U.S. banks.

Even though many derivatives were insurance against default, or wagers that a particular company would fail, to a large degree they constituted a giant bet that the economy as a whole would continue to grow (and, more specifically, that the value of real estate would continue to climb). So when the economy stopped growing, and the real estate bubble began to deflate, this triggered a systemic unraveling that could be halted (and only temporarily) by massive government intervention.

Suddenly “assets” in the form of derivative contracts that had a stated value on banks’ ledgers were clearly worth much less. If these assets had to be sold, or if they were “marked to market” (valued on the books at the amount they could actually sell for), the banks would be shown to be insolvent. Government bailouts essentially enabled the banks to keep those assets hidden, so that banks could appear solvent and continue carrying on business. Despite the proliferation of derivatives, the financial system still largely revolves around the timeworn practice of receiving deposits and making loans. Bank loans are the source of money in our modern economy. If the banks go away, so does the rest of the economy.

But as we have just seen, many banks are probably actually insolvent because of the many near-worthless derivative contracts and bad mortgage loans they count as assets on their balance sheets. One might well ask: If commercial banks have the power to create money, why can’t they just write off these bad assets and carry on? Ellen Brown explains the point succinctly in her useful book Web of Debt: [U]nder the accountancy rules of commercial banks, all banks are obliged to balance their books, making their assets equal their liabilities. They can create all the money they can find borrowers for, but if the money isn’t paid back, the banks have to record a loss; and when they cancel or write off debt, their assets fall. To balance their books . . . they have to take the money either from profits or from funds invested by the bank’s owners [i.e., shareholders]; and if the loss is more than its owners can profitably sustain, the bank will have to close its doors.

So, given their exposure via derivatives, bad real estate loans, and MBSs, the banks aren’t making new loans because they can’t take on more risk. The only way to reduce that risk is for government to guarantee the loans. Again, as long as the down-side of this business cycle is short, such a plan could work in principle. But whether it actually will in the current situation is problematic. As noted above, Ponzi schemes can theoretically go on forever, as long as the number of new investors is infinite. Yet in the real world the number of potential investors is always finite. There are limits. And when those limits are hit, Ponzi schemes can unravel very quickly.

The shadow banks can still write more derivative contracts, but that doesn’t do anything to help the real economy and just spreads risk throughout the system. That leaves government, which (if it controls its own currency and can fend off attacks from speculators) can continue to run large deficits, and the central banks, which can enable those deficits by purchasing government debt outright—but unless such efforts succeed in jump-starting growth in the other sectors, that is just a temporary end-game strategy.

Remember: in a system in which money is created through bank loans, there is never enough money in existence to pay back all debts with interest. The system only continues to function as long as it is growing. So, what happens to this mountain of debt in the absence of economic growth? Answer: Some kind of debt crisis. And that is what we are seeing. Debt crises have occurred frequently throughout the history of civilizations, beginning long before the invention of fractional reserve banking and credit cards. Many societies learned to solve the problem with a “debt jubilee”: According to the Book of Leviticus in the Bible, every fiftieth year is a Jubilee Year, in which slaves and prisoners are to be freed and debts are to be forgiven.

For householders facing unaffordable mortgage payments or a punishing level of credit card debt, a jubilee may sound like a capital idea. But what would that actually mean today, if carried out on a massive scale—when debt has become the very fabric of the economy? Remember: we have created an economic machine that needs debt like a car needs gas. Realistically, we are unlikely to see a general debt jubilee in coming years; what we will see instead are defaults and bankruptcies that accomplish essentially the same thing—the destruction of debt. Which, in an economy like ours, effectively means a destruction of wealth and claims upon wealth. Debt will have to be written off in enormous amounts—by the trillions of dollars. Over the short term, government will attempt to stanch this flood of debt-shedding in the household, corporate, and financial sectors by taking on more debt of its own—but eventually it simply won’t be able to keep up, given the inherent limits on government borrowing discussed above. We began with the question, “How close are we to hitting the limits to debt?” The evident answer is: we have already probably hit realistic limits to household debt and corporate debt; the ratio of U.S. total debt-to-GDP is probably near or past the danger mark; and limits to government debt may be within sight, though that conclusion is more controversial and doubtful.

For the U.S., actions undertaken by the Federal government and the Federal Reserve bank system have so far resulted in totals of $3 trillion actually spent and $11 trillion committed as guarantees. Some of these actions are discussed below; for a complete tally of the expenditures and commitments, see the online CNN Bailout Tracker.

The New Deal had cost somewhere between $450 and $500 billion and had increased government’s share of the national economy from 4 percent to 10 percent. ARRA represented a much larger outlay that was spent over a much shorter period, and increased government’s share of the economy from 20 percent to 25 percent.

At the end of 2010, President Obama and congressional leaders negotiated a compromise package of extended and new tax cuts that, in total, would reduce potential government revenues by an estimated $858 billion. This was, in effect, a third stimulus package.

Critics of the stimulus packages argued that transitory benefits to the economy had been purchased by raising government debt to frightening levels. Proponents of the packages answered that, had government not acted so boldly, an economic crisis might have turned into complete and utter ruin.

While the U.S. government stimulus packages were enormous in scale, the actions of the Federal Reserve dwarfed them in terms of dollar amounts committed. During the past three years, the Fed’s balance sheet has swollen to more than $2 trillion through its buying of bank and government debt. Actual expenditures included $29 billion for the Bear Stearns bailout; $149.7 billion to buy debt from Fannie Mae and Freddie Mac; $775.6 billion to buy mortgage-backed securities, also from Fannie and Freddie; and $109.5 billion to buy hard-to-sell assets (including MBSs) from banks. However, the Fed committed itself to trillions more in insuring banks against losses, loaning to money market funds, and loaning to banks to purchase commercial paper. Altogether, these outlays and commitments totaled a minimum of $6.4 trillion.

Documents released by the Fed on December 1, 2010 showed that more than $9 trillion in total had been supplied to Wall Street firms, commercial banks, foreign banks, and corporations, with Citigroup, Morgan Stanley, and Merrill Lynch borrowing sums that cumulatively totaled over $6 trillion. The collateral for these loans was undisclosed but widely thought to be stocks, CDSs, CDOs, and other securities of dubious value. In one of its most significant and controversial programs, known as “quantitative easing,” the Fed twice expanded its balance sheet substantially, first by buying mortgage-backed securities from banks, then by purchasing outstanding Federal government debt (bonds and Treasury certificates) to support the Treasury debt market and help keep interest rates down on consumer loans. The Fed essentially creates money on the spot for this purpose (though no money is literally “printed”), thus monetizing U.S. government debt.

In November 2008 China announced a stimulus package totaling 4 trillion yuan ($586 billion) as an attempt to minimize the impact of the global financial crisis on its domestic economy. In proportion to the size of China’s economy, this was a much larger stimulus package than that of the U.S. Public infrastructure development made up the largest portion, nearly 38 percent, followed by earthquake reconstruction, funding for social welfare plans, rural development, and technology advancement programs.

What’s the bottom line on all these stimulus and bailout efforts? In the U.S., $12 trillion of total household net worth disappeared in 2008, and there will likely be more losses ahead, largely as a result of a continued fall in real estate values, though increasingly as a result of job losses as well. The government’s stimulus efforts, totaling less than $1 trillion, cannot hope to make up for this historic evaporation of wealth. While indirect subsidies may temporarily keep home prices from falling further, that just keeps houses less affordable to workers making less income. Meanwhile, the bailouts of banks and shadow banks have been characterized as government throwing money at financial problems it cannot solve, rewarding the very people who created them. Rather than being motivated by the suffering of American homeowners or governments in over their heads, the bailouts of Fannie Mae and Freddie Mac in the U.S., and Greece and Ireland in the E.U. were (according to critics) essentially geared toward securing the investments of the banks and the wealthy bondholders.

The stimulus-bailout efforts of 2008-2009—which in the U.S. cut interest rates from 5 percent to zero, spent up the budget deficit to 10 percent of GDP, and guaranteed $6.4 trillion to shore up the financial system—arguably cannot be repeated. These constituted quite simply the largest commitments of funds in world history, dwarfing the total amounts spent in all the wars of the 20th century in inflation-adjusted terms (for the U.S., the cost of World War II amounted to $3.2 trillion). Not only the U.S., but Japan and the European nations as well have exhausted their arsenals. But more will be needed as countries, states, counties, and cities near bankruptcy due to declining tax revenues. Meanwhile the U.S. has lost 8.4 million jobs—and if loss of hours worked is considered that adds the equivalent of another 3 million; the nation will need to generate an extra 450,000 jobs each month for three years to get back to pre-crisis levels of employment. The only way these problems can be allayed (not fixed) is through more central bank money creation and government spending.

Once a credit bubble has inflated, the eventual correction (which entails destruction of credit and assets) is of greater magnitude than government’s ability to spend. The cycle must sooner or later play itself out. There may be a few more arrows in the quiver of economic policy makers: central bankers could try to drive down the value of domestic currencies to stimulate exports; the Fed could also engage in more quantitative easing. But these measures will sooner or later merely undermine currencies.

Further, the way the Fed at first employed quantitative easing in 2009 was minimally productive.

QE1 amounted to adding about a trillion dollars to banks’ balance sheets, with the assumption that banks would then use this money as a basis for making loans.[2] The “multiplier effect” (in which banks make loans in amounts many times the size of deposits) should theoretically have resulted in the creation of roughly $9 trillion within the economy. However, this did not happen: because there was reduced demand for loans (companies didn’t want to expand in a recession and families didn’t want to take on more debt), the banks just sat on this extra capital. A better result could arguably have been obtained if the Fed were somehow to have distributed the same amount of money directly to debtors, rather than to banks, because then at least the money would either have circulated to pay for necessities, or helped to reduce the general debt overhang.
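The roughly $9 trillion figure is consistent with the simple textbook money-multiplier calculation. The sketch below is a minimal illustration, assuming a 10 percent reserve ratio (my assumption; the text does not state the ratio) and a hypothetical helper named `max_new_loans`. It models each loan being redeposited and re-lent, less the reserved fraction.

```python
def max_new_loans(new_reserves, reserve_ratio, rounds=None):
    """Upper bound on lending supported by an injection of reserves.

    With rounds=None, return the closed-form limit of the geometric
    series. Otherwise, sum the loans made over that many rounds of
    lending and redepositing.
    """
    lendable = 1.0 - reserve_ratio
    if rounds is None:
        # Sum of new_reserves * lendable**k for k = 1, 2, 3, ...
        return new_reserves * lendable / reserve_ratio
    total_loans = 0.0
    deposit = new_reserves
    for _ in range(rounds):
        loan = deposit * lendable   # the bank keeps the reserved fraction
        total_loans += loan
        deposit = loan              # the loan is redeposited and re-lent
    return total_loans


# $1 trillion of new reserves at an assumed 10 percent reserve ratio.
theoretical_limit = max_new_loans(1.0, 0.10)
after_five_rounds = max_new_loans(1.0, 0.10, rounds=5)

print(f"Theoretical limit: ${theoretical_limit:.1f} trillion")
print(f"After five rounds: ${after_five_rounds:.2f} trillion")
```

The limit is only a ceiling. If borrowers do not want loans, as the passage describes, the chain of lending stops early and the total stays far below it.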

QE2 was about funding Federal government debt interest-free. Because the Federal Reserve rebates its profits (after deducting expenses) to the Treasury, creating money to buy government debt obligations is an effective way of increasing that debt without increasing interest payments. Critics describe this as the government “printing money” and assert that it is highly inflationary; however, given the extremely deflationary context (trillions of dollars’ worth of write-downs in collateral and credit), the Fed would have to “print” far more than it is doing to result in real inflation. Nevertheless, as we will see in Chapter 5 in a discussion of “currency wars,” other nations view this strategy as a way to drive down the dollar so as to decrease the value of foreign-held dollar-denominated debt—in effect forcing them to pay for America’s financial folly.

Central banks and governments are barely keeping the wheels on society, but their actions come with severe long-term costs and risks. And what they can actually accomplish is most likely limited anyway.

Deflation represents a disappearance of credit and money, so that whatever money remains has increased purchasing power. Once the bubble began to burst back in 2007-2008, say the deflationists, a process of contraction began that inevitably must continue to the point where debt service is manageable and prices for assets such as homes and stocks are compelling based on long-term historical trends. However, many deflationists tend to agree that the inflationists are probably right in the long run: at some point, perhaps several years from now, some future U.S. administration will resort to truly extraordinary means to avoid defaulting on interest payments on its ballooning debt, as well as to avert social disintegration and restart economic activity. There are several scenarios by which this might happen—including government simply printing money in enormous quantities and distributing it directly to banks or citizens. The net effect would be the same in all cases: a currency collapse.

In general, what we are actually seeing so far is neither dramatic deflation nor hyperinflation. Despite the evaporation of trillions of dollars in wealth during the past four years, and despite government and central bank interventions with a potential nameplate value also running in the trillions of dollars, prices (which most economists regard as the signal of inflation or deflation) have remained fairly stable. That is not to say that the economy is doing well: the ongoing problems of unemployment, declining tax revenues, and business and bank failures are obvious to everyone. Rather, what seems to be happening is that the efforts of the U.S. Federal government and the Federal Reserve have temporarily more or less succeeded in balancing out the otherwise massively deflationary impacts of defaults, bankruptcies, and falling property values. With its new functions, the Fed is acting as the commercial bank of last resort, transferring debt (mostly in the form of MBSs and Treasuries) from the private sector to the public sector.

The Fed’s zero-interest-rate policy has given a huge hidden subsidy to banks by allowing them to borrow Fed money for nothing and then lend it to the government at a 3 percent interest rate. But this is still not inflationary, because the Federal Reserve is merely picking up the slack left by the collapse of credit in the private sector. In effect, the nation’s government and its central bank are together becoming the lender of last resort and the borrower of last resort—and (via the military) increasingly also both the consumer of last resort and the employer of last resort.

While leaders will make every effort to portray this as a gradual return to growth, in fact the economy will be losing ground and will remain fragile, highly vulnerable to upsetting events that could take any of a hundred forms—including international conflict, terrorism, the bankruptcy of a large corporation or megabank, a sovereign debt event (such as a default by one of the European countries now lined up for bailouts), a food crisis, an energy shortage or temporary grid failure, an environmental disaster, a curtailment of government-Fed intervention based on a political shift in the makeup of Congress, or a currency war.

Extreme social unrest would be an inevitable result of the gross injustice of requiring a majority of the population to forgo promised entitlements and economic relief following the bailout of a small super-wealthy minority on Wall Street. Political opportunists can be counted on to exacerbate that unrest and channel it in ways utterly at odds with society’s long-term best interests. This is a toxic brew.

Growth requires not just energy in the most general sense, but forms of energy with specific characteristics. After all, the Earth is constantly bathed in energy—indeed, the amount of solar energy that falls on Earth’s surface each hour is greater than the amount of fossil-fuel energy the world uses every year. But sunlight energy is diffuse and difficult to use directly. Economies need sources of energy that are concentrated and controllable, and that can be made to do useful work. From a short-term point of view, fossil fuels proved to be energy sources with highly desirable characteristics: they could be extracted from Earth’s crust quite cheaply (at least in the early days), they were portable, and they delivered a lot of energy per unit of weight and/or volume—in most instances, far more than the firewood that people had been accustomed to using.

In 2009, Post Carbon Institute and the International Forum on Globalization undertook a joint study (Searching for a Miracle: Net Energy Limits and the Fate of Industrial Societies) to analyze 18 energy sources (from oil to tidal power) using 10 criteria (scalability, renewability, energy density, energy returned on energy invested, and so on).

Our conclusion was that there is no credible scenario in which alternative energy sources can entirely make up for fossil fuels as the latter deplete.

Given oil’s pivotal role in the economy, high prices did more than reduce demand; they helped undermine the economy as a whole in the 1970s and again in 2008. Economist James Hamilton of the University of California, San Diego, has assembled a collection of studies showing a tight correlation between oil price spikes and recessions during the past 50 years. Seeing this correlation, every attentive economist should have forecast a steep recession beginning in 2008, as oil prices soared.

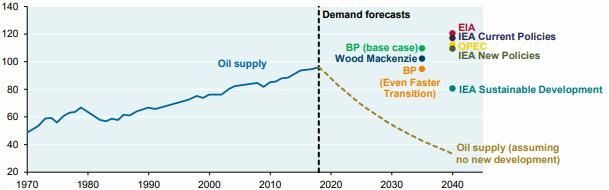

By mid-2009 the oil price had settled within the “Goldilocks” range—not too high (so as to kill the economy and, with it, fuel demand), and not too low (so as to scare away investment in future energy projects and thus reduce supply). That just-right price band appeared to be between $60 and $80 a barrel. How long prices can stay in or near the Goldilocks range is anyone’s guess, but as declines in production in the world’s old super-giant oilfields continue to accelerate and exploration costs continue to mount, the lower boundary of that just-right range will inevitably continue to migrate upward. And while the world economy remains frail, its vulnerability to high energy prices is more pronounced, so that even $80-85 oil could gradually weaken it further, choking off signs of recovery. In other words, oil prices have effectively put a cap on economic recovery. This problem would not exist if the petroleum industry could just get busy and make a lot more oil, so that each unit would be cheaper. But despite its habitual use of the terms “produce” and “production,” the industry doesn’t make oil; it merely extracts the stuff from finite stores in the Earth’s crust. As we have already seen, the cheap, easy oil is gone. Economic growth is hitting the Peak Oil ceiling.

As more and more resources acquire the Goldilocks syndrome, general commodity prices will likely spike and crash repeatedly, making a hash of efforts to stabilize the economy.

There are three main solutions to the problem of Peak Phosphate: composting of human wastes, including urine diversion; more efficient application of fertilizer; and farming in such a way as to make existing soil phosphorus more accessible to plants.

It’s worth noting that for the past few decades a vocal minority of farmers, agricultural scientists, and food system theorists including Wendell Berry, Wes Jackson, Vandana Shiva, Robert Rodale, and Michael Pollan, has argued against centralization, industrialization, and globalization of agriculture, and for an ecological agriculture with minimal fossil fuel inputs. Where their ideas have taken root, the adaptation to Peak Oil and the end of growth will be easier. Unfortunately, their recommendations have not become mainstream, because industrialized, globalized agriculture has proved capable of producing larger short-term profits for banks and agribusiness cartels. Even more unfortunately, the available time for a large-scale, proactive food system transition before the impacts of Peak Oil and economic contraction arrive is gone. We’ve run out the clock. In his book, Dirt, David Montgomery makes a powerful case that soil erosion was a major cause of the Roman economy’s decline.

Data from the U.S. Geological Survey shows that within the U.S. many mineral resources are well past their peak rates of production.[4] These include bauxite (whose production peaked in 1943), copper (1998), iron ore (1951), magnesium (1966), phosphate rock (1980), potash (1967), rare earth metals (1984), tin (1945), titanium (1964), and zinc (1969).[5]

There are 17 rare earth elements (REEs) with names like lanthanum, neodymium, europium, and yttrium. They are critical to a variety of high-tech products including catalytic converters, color TV and flat panel displays, permanent magnets, batteries for hybrid and electric vehicles, and medical devices; to manufacturing processes like petroleum refining; and to various defense systems like missiles, jet engines, and satellite components. REEs are even used in making the giant electromagnets in modern wind turbines. But rare earth mines are failing to keep up with demand. China produces 97 percent of the world’s REEs, and has issued a series of contradictory public statements about whether, and in what amounts, it intends to continue exporting these elements.

Indium is used in indium tin oxide, which is a thin-film conductor in flat-panel television screens. Armin Reller, a materials chemist, and his colleagues at the University of Augsburg in Germany have been investigating the problem of indium depletion. Reller estimates that the world has, at best, 10 years before production begins to decline; known deposits will be exhausted by 2028, so new deposits will have to be found and developed. Some analysts are now suggesting that shortages of energy minerals including indium, REEs, and lithium for electric car batteries could trigger trade wars.

Armin Reller and his colleagues have also looked into gallium supplies. Discovered in 1875, gallium is a blue-white metal with certain unusual properties, including a very low melting point and an unwillingness to oxidize. These make it useful as a coating for optical mirrors, a liquid seal in strongly heated apparatus, and a substitute for mercury in ultraviolet lamps. Gallium is also essential to making liquid-crystal displays in cell phones, flat-screen televisions, and computer monitors. With the explosive profusion of LCDs in the past decade, supplies of gallium have become critical; Reller projects that by about 2017 existing sources will be exhausted.

Palladium (along with platinum and rhodium) is a primary component in the autocatalysts used in automobiles to reduce exhaust emissions. Palladium is also employed in the production of multi-layer ceramic capacitors in cellular telephones, personal and notebook computers, fax machines, and auto and home electronics. Russian stockpiles have been a key component in world palladium supply for years, but those stockpiles are nearing exhaustion, and prices for the metal have soared as a result.

Uranium is the fuel for nuclear power plants and is also used in nuclear weapons manufacturing; small amounts are employed in the leather and wood industries for stains and dyes, and as mordants of silk or wool. Depleted uranium is used in kinetic energy penetrator weapons and armor plating. In 2006, the Energy Watch Group of Germany studied world uranium supplies and issued a report concluding that, in its most optimistic scenario, the peak of world uranium production will be achieved before 2040. If large numbers of new nuclear power plants are constructed to offset the use of coal as an electricity source, then supplies will peak much sooner. Tantalum for cell phones. Helium for blimps. The list could go on. Perhaps it is not too much of an exaggeration to say that humanity is in the process of achieving Peak Everything.

Accidents and natural disasters have long histories; therefore it may seem peculiar at first to think that these could now suddenly become significant factors in choking off economic growth. However, two things have changed. First, growth in human population and proliferation of urban infrastructure are leading to ever more serious impacts from natural and human-caused disasters.

There are also limits to the environment’s ability to absorb the insults and waste products of civilization, and we are broaching those limits in ways that can produce impacts of a scale far beyond our ability to contain or mitigate. The billions of tons of carbon dioxide that our species has released into the atmosphere through the combustion of fossil fuels are not only changing the global climate but also causing the oceans to acidify. Indeed, the scale of our collective impact on the planet has grown to such an extent that many scientists contend that Earth has entered a new geologic era—the Anthropocene. Humanly generated threats to the environment’s ability to support civilization are now capable of overwhelming civilization’s ability to adapt and regroup.

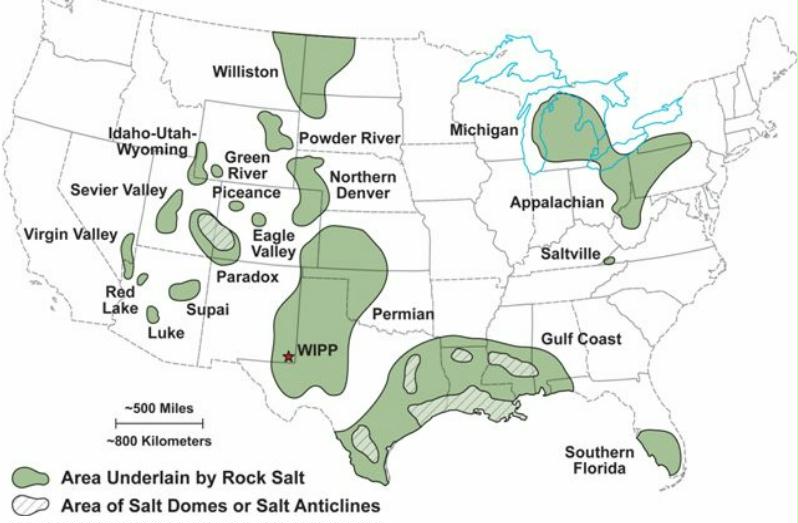

GDP impacts from the 2010 disasters were substantial. BP’s losses from the Deepwater Horizon gusher (which included cleanup costs and compensation to commercial fishers) have so far amounted to about $40 billion. The Pakistan floods caused damage estimated at $43 billion, while the financial toll of the Russian wildfires has been pegged at $15 billion.[4] Add in other events listed above, plus more not mentioned, and the total easily tops $150 billion for GDP losses in 2010 resulting from natural disasters and industrial accidents.[5] This does not include costs from ongoing environmental degradation (erosion of topsoil, loss of forests and fish species). How does this figure compare with annual GDP growth? Assuming world annual GDP of $58 trillion and an annual growth rate of three percent, annual GDP growth would amount to $1.74 trillion. Therefore natural disasters and industrial accidents, conservatively estimated, are already costing the equivalent of 8.6 percent of annual GDP growth. As resource extraction moves from higher-quality to lower-quality ores and deposits, we must expect worse environmental impacts and accidents along the way. There are several current or planned extraction projects in remote and/or environmentally sensitive regions that could each result in severe global impacts equaling or even surpassing the Deepwater Horizon blowout. These include oil drilling in the Beaufort and Chukchi Seas; oil drilling in the Arctic National Wildlife Refuge; coal mining in the Utukok River Upland, Arctic Alaska; tar sands production in Alberta; shale oil production in the Rocky Mountains; and mountaintop-removal coal mining in Appalachia.
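The comparison between disaster losses and annual GDP growth in this passage is simple arithmetic, and it can be checked with a short sketch. Every number below comes from the text; the function and variable names are illustrative choices, not anything from the book.

```python
# Figures quoted in the text, in US dollars.
NAMED_LOSSES = {
    "Deepwater Horizon (BP)": 40e9,
    "Pakistan floods": 43e9,
    "Russian wildfires": 15e9,
}
TOTAL_LOSSES = 150e9   # the text's overall estimate for 2010
WORLD_GDP = 58e12      # assumed world annual GDP, as stated in the text
GROWTH_RATE = 0.03     # assumed annual growth rate, as stated in the text


def growth_share(losses, gdp, growth_rate):
    """Return (annual GDP growth in dollars, losses as a fraction of that growth)."""
    annual_growth = gdp * growth_rate
    return annual_growth, losses / annual_growth


named_total = sum(NAMED_LOSSES.values())
annual_growth, share = growth_share(TOTAL_LOSSES, WORLD_GDP, GROWTH_RATE)

print(f"Three named events: ${named_total / 1e9:.0f} billion")
print(f"Annual GDP growth:  ${annual_growth / 1e12:.2f} trillion")
print(f"Losses as a share of growth: {share:.1%}")
```

The three events named individually add up to $98 billion, so the $150 billion total relies on the other events the text refers to. With the stated assumptions the share works out to about 8.6 percent, matching the figure in the passage.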

Since climate is changing mostly because of the burning of fossil fuels, averting climate change is largely a matter of reducing fossil fuel consumption.[9] But as we have seen (and will confirm in more ways in the next chapter), economic growth depends on increasing energy consumption. Due to the inherent characteristics of alternative energy sources, it is extremely unlikely that society can increase its energy production while dramatically curtailing fossil fuel use.

Another environmental impact, one that is relatively slow and ongoing and even more difficult to put a price tag on, is the decline in the number of other species inhabiting our planet. According to one recent study, one in five plant species faces extinction as a result of climate change, deforestation, and urban growth.

Non-human species perform ecosystem services that only indirectly benefit our kind, but in ways that turn out to be crucial. Phytoplankton, for example, are not a direct food source for people, but they form the base of oceanic food chains, in addition to supplying half of the oxygen produced each year by nature. The abundance of plankton in the world’s oceans has declined 40 percent since 1950, according to a recent study, for reasons not entirely clear. This is one of the main explanations for a gradual decline in atmospheric oxygen levels recorded worldwide.

A 2010 study by Pavan Sukhdev, a former banker, set out to determine a price for the world’s environmental assets. It concluded that the annual destruction of rainforests entails an ultimate cost to society of $4.5 trillion, or $650 for each person on the planet. But that cost is not paid all at once; in fact, over the short term, forest cutting looks like an economic benefit as a result of the freeing up of agricultural land and the production of timber. Like financial debt, environmental costs tend to accumulate until a crisis occurs and systems collapse.

Declining oxygen levels, acidifying oceans, disappearing species, threatened oceanic food chains, changing climate—when considering planetary changes of this magnitude, it may seem that the end of economic growth is hardly the worst of humanity’s current problems. However, it is important to remember that we are counting on growth to enable us to solve or respond to environmental crises. With economic growth, we have surplus money with which to protect rainforests, save endangered species, and clean up after industrial accidents. Without economic growth, we are increasingly defenseless against environmental disasters—many of which paradoxically result from growth itself.

Talk of limits typically elicits dismissive references to the failed warnings of Thomas Malthus, the 18th-century economist who reasoned that population growth would inevitably (and soon) outpace food production, leading to a general famine. Malthus was obviously wrong, at least in the short run: food production expanded throughout the 19th and 20th centuries to feed a fast-growing population. He failed to foresee the introduction of new hybrid crop varieties and chemical fertilizers and the development of industrial farm machinery. The implication, whenever Malthus’s ghost is summoned, is that all claims that environmental limits will overtake growth are likewise wrong, and for similar reasons: new inventions and greater efficiency will always trump looming limits.

The main advantages of electrics are that their energy is used more efficiently (electric motors translate nearly all their energy into motive force, while internal combustion engines are much less efficient), they need less drive-train maintenance, and they are more environmentally benign (even if they’re running on coal-derived electricity, they usually entail lower carbon emissions due to their much higher energy efficiency). The drawbacks of electric vehicles have to do with the limited ability of batteries to store energy, as compared to conventional liquid fuels. Gasoline carries 45 megajoules per kilogram, while lithium-ion batteries can store only 0.5 MJ/kg. Improvements are possible, but the theoretical limit of chemical energy storage is still only about 3 MJ/kg. This is why we’ll never see battery-powered airliners: the batteries would be way too heavy to allow planes to get off the ground.
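To get a feel for the weight penalty implied by these energy densities, here is a minimal sketch comparing the mass needed to store the same amount of energy. The three density figures come from the text. The 40 kg fuel load is a hypothetical value chosen only for illustration, and the comparison covers stored energy alone; it ignores the higher efficiency of electric motors that the passage also mentions.

```python
# Energy densities quoted in the text, in megajoules per kilogram.
GASOLINE_MJ_PER_KG = 45.0
LITHIUM_ION_MJ_PER_KG = 0.5
CHEMICAL_LIMIT_MJ_PER_KG = 3.0  # the text's stated theoretical limit


def storage_mass_kg(energy_mj, density_mj_per_kg):
    """Mass in kg needed to store energy_mj at the given energy density."""
    if density_mj_per_kg <= 0:
        raise ValueError("energy density must be positive")
    return energy_mj / density_mj_per_kg


# Hypothetical fuel load, assumed for illustration only (not from the text).
FUEL_KG = 40.0
energy_mj = FUEL_KG * GASOLINE_MJ_PER_KG

li_ion_kg = storage_mass_kg(energy_mj, LITHIUM_ION_MJ_PER_KG)
limit_kg = storage_mass_kg(energy_mj, CHEMICAL_LIMIT_MJ_PER_KG)

print(f"Energy in {FUEL_KG:.0f} kg of gasoline: {energy_mj:.0f} MJ")
print(f"Lithium-ion mass for the same energy: {li_ion_kg:.0f} kg "
      f"({li_ion_kg / FUEL_KG:.0f}x)")
print(f"Mass at the theoretical limit: {limit_kg:.0f} kg "
      f"({limit_kg / FUEL_KG:.0f}x)")
```

On these figures, lithium-ion storage weighs 90 times as much as gasoline for the same stored energy, and even the stated theoretical limit is 15 times heavier. Because electric drivetrains waste less of that energy, the real-world gap in usable energy is smaller than this raw ratio suggests.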

The low energy density (by weight) of batteries tends to limit the range of electric cars. This problem can be solved with hybrid power trains—using a gasoline engine to charge the batteries, as in the Chevy Volt, or to push the car directly part of the time, as with the Toyota Prius—but that adds complexity and expense.