Preface. Concentrated Solar Power (CSP) contributes only 0.06% of U.S. electricity, mainly in California (64%) and Arizona (24%), because it requires extremely dry areas with no humidity, haze, or pollutants. Of the 1,861 MW of CSP generating capacity, only about 25% can also store energy, as heat, using thermal energy storage. This is CSP’s only advantage over solar panels: the ability to keep generating electricity after the sun goes down, since CSP costs astronomically more than solar PV.

Energy is stored as heat, usually in molten salt, with total CSP storage rated at 510 MW.

CSP has higher capital costs than any other kind of power plant except nuclear. Eight plants (Solana, Genesis, Mojave, Ivanpah, Rice, Martin, Nevada Solar One, and Crescent Dunes) cost a total of $9 billion (NREL 2013).

Almost all CSP plants also have fossil-fuel backup to reduce night thermal losses, prevent the molten salt from freezing, supplement low solar irradiance in winter, and provide fast starts in the morning.

CSP electricity generation in winter is significantly lower than in other seasons, even in the best range of latitudes, between 15° and 35°.

To provide seasonal storage, CSP plants would need to use stone, which is much cheaper than molten salt. A 100 MW facility would need 5.1 million tons of rock taking up 2 million cubic meters (Welle 2010).

Stone is a poor heat conductor, which makes heat exchange harder, and the much thicker insulating walls required might make this unaffordable (IEA 2011b).

Nevada’s 110 MW Crescent Dunes plant opened in 2015 with 10 hours of storage and is expected to provide an average of 0.001329 TWh a day. Multiply that by about 8,367 Crescent Dunes-scale plants and presto, we’ll have one day of U.S. electrical storage (11.12 TWh / 0.001329 TWh ≈ 8,367).
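The arithmetic can be checked in a few lines of Python. This sketch uses only the two figures quoted above (11.12 TWh of U.S. electricity per day and 0.001329 TWh per day from one Crescent Dunes-sized plant); it also backs out the average power and capacity factor implied by the expected output, which is a derived number, not a reported one.

```python
# Figures quoted in the text above
US_DAILY_TWH = 11.12                 # U.S. electricity use per day, TWh
CRESCENT_DUNES_DAILY_TWH = 0.001329  # expected average output per day, TWh
CRESCENT_DUNES_MW = 110              # nameplate capacity, MW

# How many plants of this size would match one day of U.S. demand?
ratio = US_DAILY_TWH / CRESCENT_DUNES_DAILY_TWH

# Average power implied by the daily output: TWh/day -> MWh/day -> MW
avg_mw = CRESCENT_DUNES_DAILY_TWH * 1e6 / 24
capacity_factor = avg_mw / CRESCENT_DUNES_MW

print(round(ratio))               # 8367
print(round(avg_mw, 1))           # 55.4
print(round(capacity_factor, 2))  # 0.5
```

The implied capacity factor of roughly 50% is the plant’s expected annual average, not a measured result.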

Or maybe not: the $1 billion Crescent Dunes plant has gone out of business (Martin 2020).

CSP with thermal energy storage is seasonal, so it cannot balance variable power or contribute much power for half the year.

Without storage, solar CSP and solar PV do nothing to keep the grid stable or meet the peak morning and late afternoon demand.

And CSP appears to be dying out, with just one CSP developer left (Deign 2020).

Alice Friedemann, www.energyskeptic.com, author of “When Trucks Stop Running: Energy and the Future of Transportation” (2015, Springer), “Barriers to Making Algal Biofuels”, and “Crunch! Whole Grain Artisan Chips and Crackers”. Podcasts: Collapse Chronicles, Derrick Jensen, Practical Prepping, KunstlerCast 253, KunstlerCast 278, Peak Prosperity, XX2 report

***

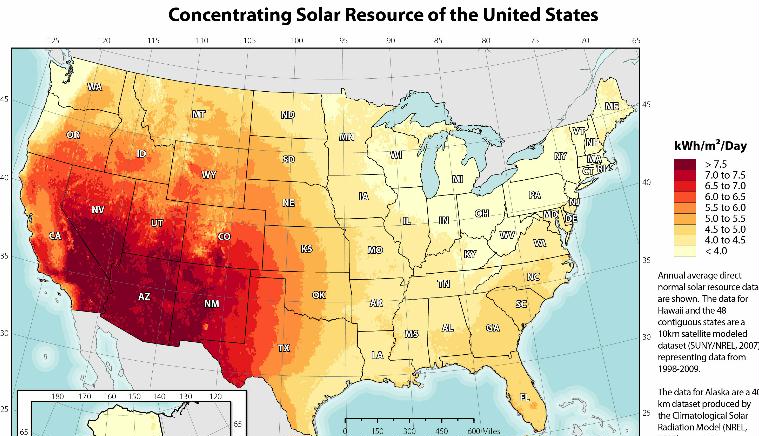

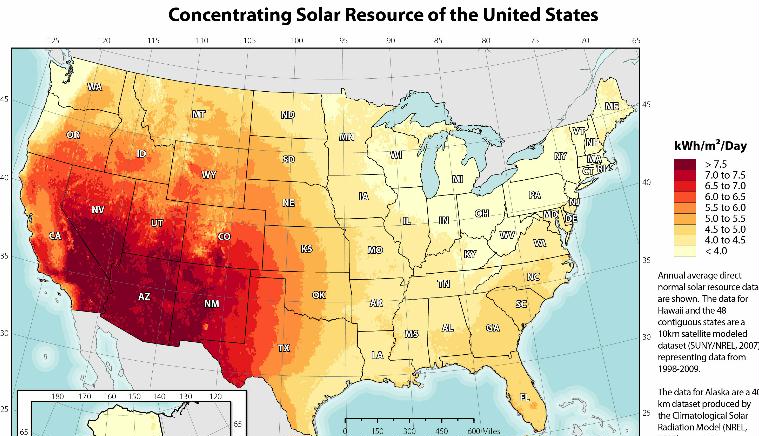

Concentrated Solar Power not only needs lots of sunshine, but also no humidity, clouds, dust, smog, or anything else that can scatter the sun’s rays. Above 35 degrees latitude north or south, the sun’s rays have to pass through too much atmosphere to produce high levels of power, and these regions tend to be too cloudy as well. The band within 15 degrees north and south of the equator is not ideal either: it’s too cloudy, rainy, and humid. That leaves very dry and hot regions between 15 and 35 degrees of latitude. Only deserts are suitable, such as America’s Southwest, southern Africa, the Middle East, north-western India, northern Mexico, Peru, Chile, the western parts of China and Australia, the extreme south of Europe and Turkey, some central Asian countries, and places in Brazil and Argentina.

The problem with arid regions is that CSP needs water for condenser cooling. Dry-cooling of steam turbines can be done, but it costs more and lowers efficiency.

CSP doesn’t wean us entirely off fossil fuels: nearly all plants use fossil fuel as backup, to remain dispatchable even when the solar resource is low and to provide an alternative heat source that can compensate for night thermal losses, prevent freezing, and ensure a faster start-up in the early morning.

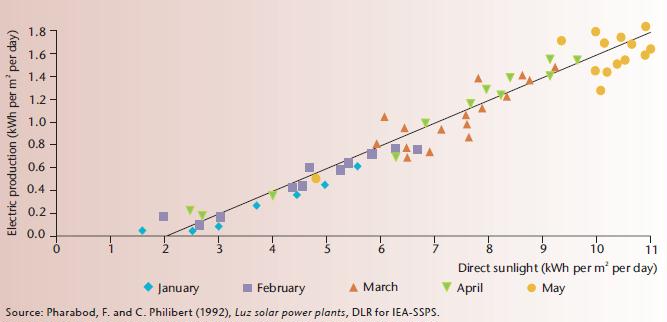

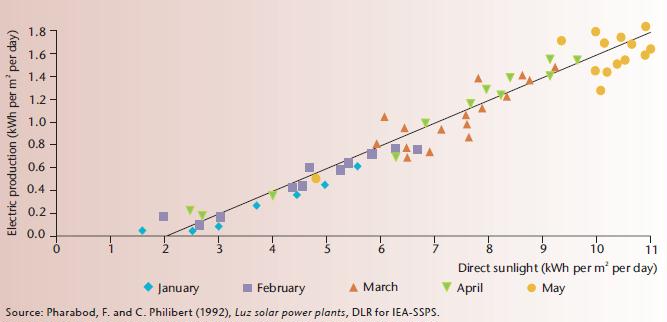

Even in ideal locations, CSP is highly seasonal:

The average CSP capacity factor in the United States in December 2014 was 5.5%, while in August it was 25% (EIA. 2015. Table 6.7.B. Capacity Factors for Utility Scale Generators Not Primarily Using Fossil Fuels, January 2008-November 2014. U.S. Energy Information Administration).
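To see what those two percentages mean in energy terms, here is a minimal Python sketch. It assumes, purely for illustration, that the national average capacity factors apply to the 1,861 MW of CSP capacity cited in the preface; the function name is hypothetical.

```python
FLEET_MW = 1861  # assumption: U.S. CSP capacity figure from the preface
HOURS = {"December": 31 * 24, "August": 31 * 24}        # both months have 31 days
CAPACITY_FACTOR = {"December": 0.055, "August": 0.25}   # EIA averages quoted above


def monthly_energy_gwh(capacity_mw: float, capacity_factor: float, hours: float) -> float:
    """Energy delivered in a month: capacity x capacity factor x hours, in GWh."""
    return capacity_mw * capacity_factor * hours / 1000


energy = {m: monthly_energy_gwh(FLEET_MW, CAPACITY_FACTOR[m], HOURS[m]) for m in HOURS}
for month, gwh in energy.items():
    print(f"{month}: {gwh:.0f} GWh")
print(f"August / December: {energy['August'] / energy['December']:.1f}x")
```

Because both months are the same length, the ratio is simply 0.25 / 0.055, about 4.5: the same plants deliver less than a quarter as much electricity in December as in August.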

This means that CSP requires seasonal storage, since it provides almost nothing in winter. Yet CSP with thermal energy storage (TES) IS one of the few ways even a few hours of energy storage can be accomplished, since pumped hydro storage, compressed air energy storage, and battery storage are all very limited.

“Averages” are irrelevant. The seasonal nature of CSP with thermal storage makes balancing variable renewables and year-round power on a national grid — or even within the Southwest some days, weeks, or seasons — impossible without months of energy storage.

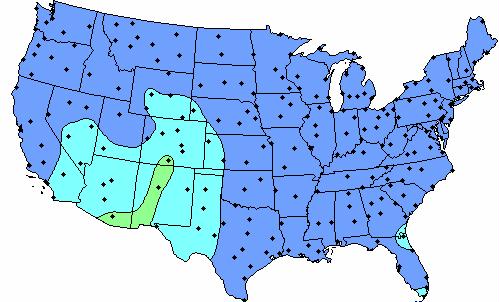

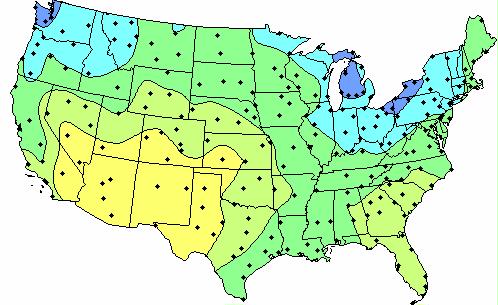

![Concentrating Solar Power Average Daily Solar Radiation Per Month, 1961-1990 (NREL 2011b)](https://energyskeptic.com/wp-content/uploads/2015/03/CSP-seasonal-6-USA-maps-jan-mar-may-jul-sep-nov.jpg)

Concentrating Solar Power Average Daily Solar Radiation Per Month, 1961-1990 (NREL 2011b)

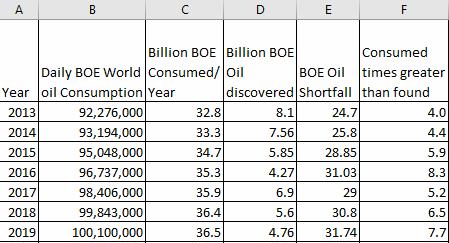

There will be days or weeks when solar radiation is very low. Below are some minimum and maximum values of daily solar radiation per month for an east-west axis tracking concentrator (NREL 2011b).

Maps: January minimum, January maximum, July minimum, July maximum.

This means, for example, that central Nevada may reach 10 kWh/m2/day or higher during July, but January average values may be as low as 3 kWh/m2/day, or even zero on individual days as a result of cloud cover (NREL 2011a).

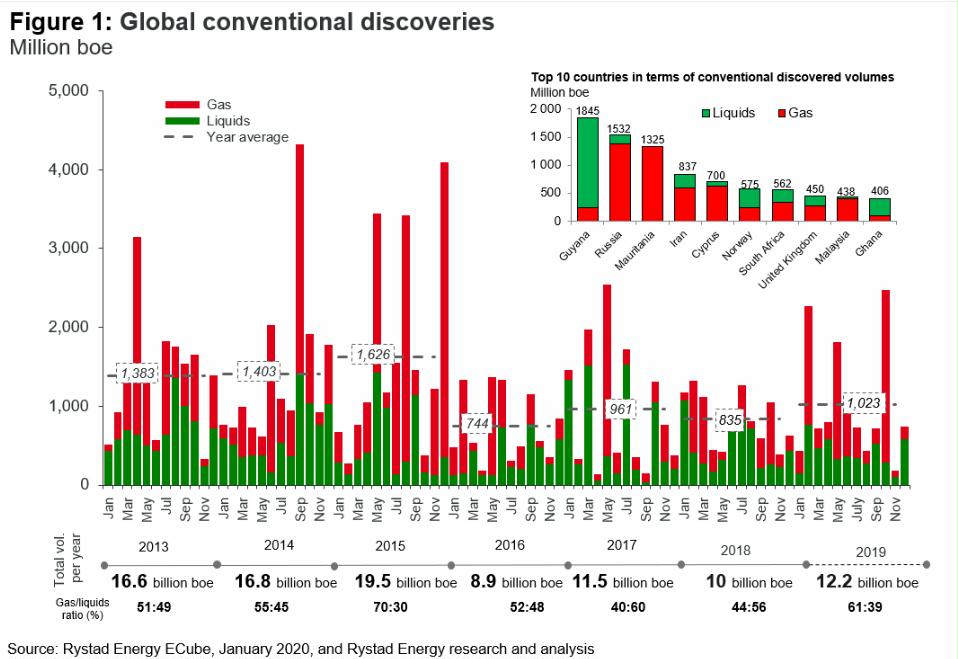

The best CSP resources are in just a few unpopulated, drought-stricken states (AZ, CA, NM, NV) (NREL 2012):

The Seasonal Nature of sunshine (International Energy Agency. 2011. Solar Energy Perspectives)

Seasonal storage for CSP plants would require stone storage. The volume of stone storage for a 100 MW system would be no less than 2 million m3, the size of a moderate gravel quarry, or of a silo 250 meters in diameter and 67 meters high. This may not be out of proportion in regions where space is abundant, as a comparison with the solar collector field required for a CSP plant producing 100 MW on annual average suggests.
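The silo dimensions can be checked with basic geometry. This Python sketch treats the silo as a plain cylinder and also divides Welle’s 5.1 million tons of rock (quoted in the preface) by the 2 million m3 figure to get the implied bulk density; nothing else is assumed.

```python
import math

DIAMETER_M = 250.0            # silo diameter quoted above
HEIGHT_M = 67.0               # silo height quoted above
QUOTED_MIN_VOLUME_M3 = 2e6    # "no less than 2 million m3"
ROCK_TONNES = 5.1e6           # Welle (2010) figure from the preface

footprint_m2 = math.pi * (DIAMETER_M / 2) ** 2   # circular base
volume_m3 = footprint_m2 * HEIGHT_M              # cylinder volume
bulk_density = ROCK_TONNES / QUOTED_MIN_VOLUME_M3  # tonnes per m3

print(f"footprint: {footprint_m2 / 1e4:.1f} ha")
print(f"volume: {volume_m3 / 1e6:.2f} million m3")
print(f"implied bulk density: {bulk_density:.2f} t/m3")
```

A cylinder of that size holds about 3.3 million m3, comfortably above the stated minimum of 2 million m3, on a footprint of roughly 5 hectares.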

Stones are poor heat conductors, so exchange surfaces should be maximized, for example, with packed beds loosely filled with small particles. One option is then to use gases as heat transfer fluids (HTFs) to and from the collector fields, and to and from the heat exchangers where steam would be generated. Another option would be to use gas for heat exchanges with the collectors, and have water circulating in pipes in the storage facility, where steam would be generated. This second option would simplify the general plan of the plant, but heat transfers between rocks and pressurized fluids in thick pipes may be problematic.
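Why small particles help can be shown with the standard geometric result for a packed bed of uniform spheres: the particle surface area per cubic meter of bed is 6(1 − ε)/d, where d is the particle diameter and ε the void fraction. The sketch below assumes spherical rocks and a void fraction of 0.4; both are illustrative assumptions, not figures from the IEA text.

```python
def specific_surface_m2_per_m3(particle_diameter_m: float, void_fraction: float = 0.4) -> float:
    """Particle surface area per m3 of bed, for uniform spheres: 6 * (1 - eps) / d."""
    if not 0 <= void_fraction < 1:
        raise ValueError("void fraction must be in [0, 1)")
    return 6 * (1 - void_fraction) / particle_diameter_m


for d_cm in (20, 5, 2):
    a = specific_surface_m2_per_m3(d_cm / 100)
    print(f"{d_cm:>2} cm rocks: {a:6.0f} m2 of surface per m3 of bed")
```

Surface area scales inversely with particle size, so going from 20 cm rocks to 2 cm gravel gives ten times more heat-exchange surface in the same volume, at the price of higher pressure drop for the gas flowing through the bed.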

Annual storage may emerge as a useful option, as CSP generation in winter is significantly lower than in other seasons across the range of latitudes (15° to 35°) where suitable areas for CSP are found. However, skeptics point out the need for much thicker insulation walls as a critical cost factor.

Square miles needed to produce 25,000 TWh/year with CSP

CSP is more efficient than PV per unit of collector area, but less efficient per unit of land area, so producing 25,000 TWh a year would require a mirror surface of 38,610 square miles (100,000 km2) and a land surface of about 115,831 square miles (300,000 km2).
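The unit conversions, and the annual output per square meter they imply, can be reproduced with a short Python sketch using only the IEA figures above and the exact definition of a square mile (2.589988 km2).

```python
KM2_PER_SQ_MILE = 2.589988  # 1 square mile = 2.589988 km2

ANNUAL_TWH = 25_000
MIRROR_KM2 = 100_000
LAND_KM2 = 300_000


def km2_to_sq_miles(km2: float) -> float:
    return km2 / KM2_PER_SQ_MILE


def yield_kwh_per_m2_year(twh: float, km2: float) -> float:
    """Annual electricity per unit area: TWh -> kWh (x 1e9), km2 -> m2 (x 1e6)."""
    return twh * 1e9 / (km2 * 1e6)


print(f"mirror area: {km2_to_sq_miles(MIRROR_KM2):,.0f} sq mi")
print(f"land area:   {km2_to_sq_miles(LAND_KM2):,.0f} sq mi")
print(f"per m2 of mirror: {yield_kwh_per_m2_year(ANNUAL_TWH, MIRROR_KM2):.0f} kWh/yr")
print(f"per m2 of land:   {yield_kwh_per_m2_year(ANNUAL_TWH, LAND_KM2):.0f} kWh/yr")
```

The 1:3 ratio of mirror to land area means mirrors cover only about a third of the ground; the rest is spacing needed to avoid shading and blocking.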

Best locations for CSP

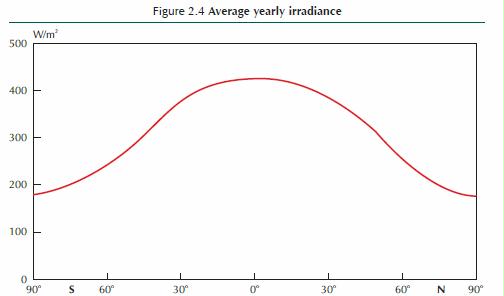

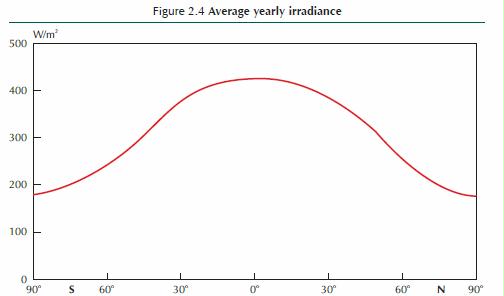

Tropical zones thus receive more radiation per surface area on yearly average than the places that are north of the Tropic of Cancer or south of the Tropic of Capricorn. Independent of atmospheric absorption, the amount of available irradiance thus declines, especially in winter, as latitudes increase. The average extraterrestrial irradiance on a horizontal plane depends on the latitude (Figure 2.4).

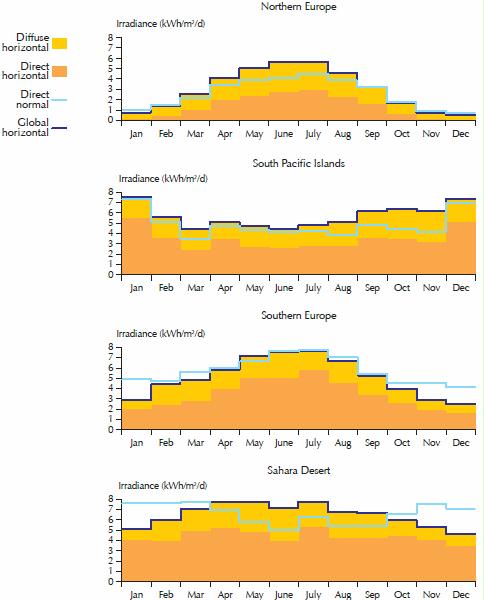

Over the year, irradiance varies greatly at high latitudes, especially beyond the polar circles, and very little in the tropics (Figure 2.5):
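The latitude effect can be reproduced from geometry alone. This Python sketch uses the standard textbook expression for daily extraterrestrial radiation on a horizontal surface (as given, for example, in Duffie and Beckman’s Solar Engineering of Thermal Processes) with Cooper’s approximation for solar declination. It ignores the atmosphere entirely, so it shows only the astronomical part of the story; the function names are illustrative.

```python
import math

SOLAR_CONSTANT = 1367.0  # W/m2; recent measurements put it nearer 1361 W/m2


def daily_extraterrestrial_kwh_m2(latitude_deg: float, day_of_year: int) -> float:
    """Daily extraterrestrial radiation on a horizontal plane, kWh/m2/day."""
    phi = math.radians(latitude_deg)
    # Cooper's approximation for the solar declination
    decl = math.radians(23.45 * math.sin(math.radians(360 * (284 + day_of_year) / 365)))
    # Sunset hour angle; the clamp handles polar day and polar night
    cos_ws = max(-1.0, min(1.0, -math.tan(phi) * math.tan(decl)))
    ws = math.acos(cos_ws)
    # Correction for the varying Earth-Sun distance
    eccentricity = 1 + 0.033 * math.cos(math.radians(360 * day_of_year / 365))
    h0_joules = (24 * 3600 * SOLAR_CONSTANT / math.pi) * eccentricity * (
        math.cos(phi) * math.cos(decl) * math.sin(ws) + ws * math.sin(phi) * math.sin(decl)
    )
    return h0_joules / 3.6e6  # J/m2 -> kWh/m2


def seasonal_range(latitude_deg: float) -> tuple[float, float]:
    values = [daily_extraterrestrial_kwh_m2(latitude_deg, n) for n in range(1, 366)]
    return min(values), max(values)


for lat in (0, 15, 35, 60):
    low, high = seasonal_range(lat)
    print(f"{lat:>2} deg N: {low:5.1f} to {high:5.1f} kWh/m2/day")
```

At the equator the daily total stays between roughly 9 and 10.5 kWh/m2 all year, while at 60° N it swings from well under 1 in December to more than 11 in June, before any clouds are taken into account.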

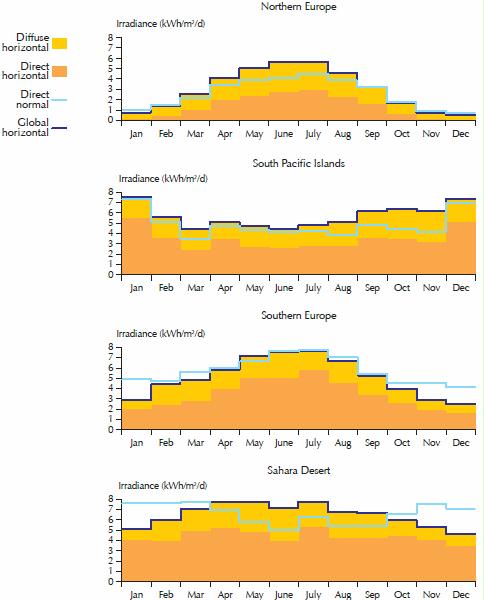

Figure 2.8 The yearly profile of mean daily solar radiation for different locations around the world. The dark area represents direct horizontal irradiance, the light area diffuse horizontal irradiance. Their sum, global horizontal irradiance (GHI), is the black line. The blue line represents direct normal irradiance (DNI). Key point: Temperate and humid equatorial regions have more diffuse than direct solar radiation.
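The relationship between the lines in the figure follows the standard decomposition GHI = DNI × cos(zenith angle) + DHI, where the first term is the direct horizontal component. This Python sketch compares two made-up skies with the same sun position; the irradiance values are illustrative, not measurements.

```python
import math


def global_horizontal(dni: float, dhi: float, zenith_deg: float) -> float:
    """GHI = DNI * cos(solar zenith angle) + DHI, all in W/m2.

    DNI is measured on a plane facing the sun; multiplying by cos(zenith)
    projects it onto a horizontal surface ("direct horizontal").
    """
    if not 0 <= zenith_deg <= 90:
        raise ValueError("sun must be above the horizon")
    return dni * math.cos(math.radians(zenith_deg)) + dhi


# Hypothetical clear desert sky vs. hazy humid sky, same sun position (zenith 30 deg)
clear = global_horizontal(dni=900, dhi=100, zenith_deg=30)
hazy = global_horizontal(dni=300, dhi=450, zenith_deg=30)
print(round(clear), round(hazy))  # 879 710
```

With these numbers, the hazy sky still delivers about 80% of the clear sky’s GHI, which a flat PV panel can use, but only a third of its DNI, which is all a concentrator can use.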

So for solar CSP, the blue line (DNI) is what matters, and it needs to be above 6 kWh/m2/day for a project to be commercially viable. The South Pacific islands have too much moisture (a low blue line), and northern Europe likewise, plus not enough irradiance. Concentrating technologies can be deployed only where DNI largely dominates the solar radiation mix, i.e. in sunny countries where the skies are clear most of the time, over hot and arid or semi-arid regions of the globe. These are the ideal places for concentrating solar power (CSP) and concentrating photovoltaics (CPV). PV can work fine in humid regions, but CSP and CPV cannot.

Formulations such as “a daily average of 5.5 hours of sunshine over the year” are casually used, however, to mean an average irradiance of 5.5 kWh/m2/d (2,000 kWh/m2/y), i.e. the energy that would have been received had the sun shone on average for 5.5 hours per day with an irradiance of 1,000 W/m2. In this case, one should preferably use “peak sunshine” or “peak sun hours” to avoid any confusion with the concept of sunshine duration.
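The “peak sun hours” convention is just a change of units, as this small Python sketch shows. It also converts the daily DNI threshold of 6 mentioned above into an annual figure, assuming that threshold is expressed in kWh/m2/day.

```python
REFERENCE_IRRADIANCE_KW_M2 = 1.0  # the 1,000 W/m2 reference behind "peak sun hours"


def peak_sun_hours(daily_kwh_m2: float) -> float:
    """Hours per day at 1,000 W/m2 that would deliver the same daily energy."""
    return daily_kwh_m2 / REFERENCE_IRRADIANCE_KW_M2


def annual_kwh_m2(daily_kwh_m2: float) -> float:
    """Yearly total from a daily average (365-day year)."""
    return daily_kwh_m2 * 365


print(peak_sun_hours(5.5), annual_kwh_m2(5.5))  # 5.5 2007.5
print(annual_kwh_m2(6.0))                        # 2190.0
```

So 5.5 kWh/m2/day is about 2,000 kWh/m2 a year, and a daily DNI of 6 kWh/m2 corresponds to roughly 2,200 kWh/m2 a year.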

Ground data measurements for 1-2 years before building a CSP plant

Ground measurements are critically necessary for a reliable assessment of the solar energy possibilities of sites, especially if the technology is CSP or CPV. Satellite data can be used to complement short ground measurement periods of one or two years with a longer-term perspective. Ten years is the minimum necessary to have a real perspective on annual variability, and to get a sense of the actual average potential and the possible natural deviations from year to year. Satellite data should be used only when they have been benchmarked by ground measurements.

All parabolic trough plants currently in commercial operation rely on a synthetic oil as heat transfer fluid (HTF) from collector pipes to heat exchangers, where water is preheated, evaporated and then superheated. The superheated steam runs a turbine, which drives a generator to produce electricity. After being cooled and condensed, the water returns to the heat exchangers. Parabolic troughs are the most mature of the CSP technologies and form the bulk of current commercial plants. Investments and operating costs have been dramatically reduced, and performance improved, since the first plants were built in the 1980s. For example, special trucks have been developed to facilitate the regular cleaning of the mirrors, which is necessary to keep performance high, using car-wash technology to save water.

Most first-generation plants have little or no thermal storage and rely on combustible fuel as firm-capacity backup. CSP plants in Spain derive 12% to 15% of their annual electricity generation from burning natural gas. More than 60% of the Spanish plants already built or under construction, however, have significant thermal storage capacities, based on two-tank molten-salt systems, with a temperature difference of about 100°C between the hot tank and the cold one.

Salt mixtures usually solidify below 238°C and are kept above 290°C for better viscosity, so work is needed to reduce the pumping and heating expenses required to protect the field against solidifying [my comment: so fossil energy to keep the salts hot subtracts from efficiency]

Energy storage

Worldwide energy storage: The volume of electricity storage necessary to make electricity available when needed would likely be somewhere between 25 TWh and 150 TWh, i.e. from 10 to 60 hours of storage. If 20 TWh are shifted from one hour to another every day, then the yearly amount of variable renewable electricity shifted would be roughly 7,300 TWh. Allowing for 20% losses, one may consider 9,125 TWh in and 7,300 TWh out per year.
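The figures in this paragraph hang together, as a few lines of Python show. The sketch uses only the numbers quoted; the hourly demand it prints is implied by the two ranges rather than stated in the source, and the 20% loss is read as a share of the energy put into storage, which is what makes 9,125 in and 7,300 out consistent.

```python
STORAGE_RANGE_TWH = (25, 150)
STORAGE_RANGE_HOURS = (10, 60)
DAILY_SHIFT_TWH = 20
ROUND_TRIP_LOSS = 0.20  # losses as a share of energy put into storage

# Average electricity demand per hour (TWh/h) implied by each end of the range
hourly_demand = [twh / h for twh, h in zip(STORAGE_RANGE_TWH, STORAGE_RANGE_HOURS)]

energy_out = DAILY_SHIFT_TWH * 365              # TWh delivered from storage per year
energy_in = energy_out / (1 - ROUND_TRIP_LOSS)  # TWh that must be stored per year

print(hourly_demand)            # [2.5, 2.5]
print(hourly_demand[0] * 8760)  # implied annual demand, TWh
print(energy_out, energy_in)
```

Both ends of the range imply the same 2.5 TWh of demand per hour, or about 21,900 TWh a year, and 7,300 / 0.8 gives the 9,125 TWh of input quoted.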

Studies examining storage requirements of full renewable electricity generation in the future have arrived at estimates of hundreds of GW for Europe (Heide, 2010), and more than 1,000 GW for the United States (Fthenakis et al., 2009). Scaling up such numbers to the world as a whole (except for the areas where STE/CSP suffices to provide dispatchable generation) would probably suggest the need for close to 5,000 GW to 6,000 GW of storage capacity. Allowing for 3,000 GW of gas plants with a small capacity factor (i.e. operating only 1,000 hours per year) explains the large difference from the 2,500 GW of storage capacity needs estimated above. However, one must consider the role that large-scale electric transportation could possibly play in dampening variability before considering options for large-scale electricity storage.
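The reconciliation in this paragraph is a subtraction, sketched below in Python with the quoted figures only. It also expresses 1,000 operating hours per year as a capacity factor.

```python
HOURS_PER_YEAR = 8760
scaled_storage_gw = (5000, 6000)  # world storage need scaled from Europe/US studies
peaking_gas_gw = 3000             # low-capacity-factor gas plants allowed for instead
gas_hours = 1000                  # full-load hours per year for those plants

remaining = [gw - peaking_gas_gw for gw in scaled_storage_gw]
print(remaining, sum(remaining) / 2)
print(f"gas capacity factor: {gas_hours / HOURS_PER_YEAR:.1%}")
print(f"gas output: {peaking_gas_gw * gas_hours / 1000:,.0f} TWh/yr")
```

Taking 3,000 GW of gas out of the 5,000 to 6,000 GW range leaves 2,000 to 3,000 GW, which brackets the 2,500 GW estimate; the gas plants would run at a capacity factor of about 11%, generating some 3,000 TWh a year.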

Vehicle-to-grid (V2G) possibilities certainly need to be explored further. They do entail costs, however, as battery lifetimes depend on the number, speeds and depths of charges and discharges, although to different extents with different battery technologies. Car owners or battery-leasing companies will not offer V2G free to grid operators, not least because it reduces the lifetime of batteries. Electric batteries are about one order of magnitude more expensive than other options available for large-scale storage, such as pumped-hydro power and compressed air electricity storage.

IEA 2014. Technology Roadmap. Solar Thermal Electricity. International Energy Agency

Global horizontal irradiance (GHI) is a measure of the density of the available solar resource per unit area on a plane horizontal to the earth’s surface. Global normal irradiance (GNI) and direct normal irradiance (DNI) are measured on surfaces “normal” (i.e., perpendicular) to the direct sunbeam. GNI is relevant for two-axis, sun-tracking, “1-sun” (i.e., non-concentrating) PV devices.

DNI is the only relevant metric for devices that use lenses or mirrors to concentrate the sun’s rays on smaller receiving surfaces, whether concentrating photovoltaics (CPV) or CSP generating STE. All places on Earth receive 4,380 daylight hours per year (i.e., half the total duration of a year), but different areas receive different yearly average amounts of energy from the sun.

When the sun is lower in the sky, its energy is spread over a larger area and energy is also lost when passing through the atmosphere, because of increased air mass; the solar energy received is therefore lower per unit horizontal surface area.
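Both effects can be quantified. This Python sketch computes the relative air mass with the Kasten and Young (1989) approximation, which is close to 1/cos(zenith) for a high sun but stays finite near the horizon, alongside the cos(zenith) factor by which a beam is spread over horizontal ground. The zenith angles chosen are illustrative.

```python
import math


def air_mass_kasten_young(zenith_deg: float) -> float:
    """Relative optical air mass, Kasten & Young (1989) approximation."""
    return 1.0 / (math.cos(math.radians(zenith_deg))
                  + 0.50572 * (96.07995 - zenith_deg) ** -1.6364)


for z in (0, 30, 60, 75):
    am = air_mass_kasten_young(z)
    spread = math.cos(math.radians(z))  # share of beam power per unit horizontal area
    print(f"zenith {z:>2} deg: air mass {am:4.2f}, horizontal share {spread:4.2f}")
```

At a zenith angle of 60° the beam crosses about twice as much air as with the sun overhead, and its power is spread over twice the horizontal area.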

Inter-tropical areas should thus receive more radiation per land area on a yearly average than places north of the Tropic of Cancer or south of the Tropic of Capricorn.

However, atmospheric absorption characteristics affect the amount of this surface radiation significantly. In humid equatorial places, the atmosphere scatters the sun’s rays. DNI is much more affected by clouds and aerosols than global irradiance. The quality of DNI is more important for CSP plants than for concentrated photovoltaics (CPV), because the thermal losses of a CSP plant’s receiver and the parasitic consumption of the electric auxiliaries are essentially constant, regardless of the incoming solar flux. Below a certain level of daily DNI, the net output is null (Figure 2 above).
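The effect of roughly constant losses can be shown with a toy model: gross output scales with DNI, while receiver thermal losses and parasitic consumption are subtracted as a fixed daily amount. All plant parameters in this Python sketch are hypothetical, chosen only to illustrate the threshold behavior described above.

```python
def net_daily_output_kwh(dni_kwh_m2: float, aperture_m2: float,
                         gross_efficiency: float, fixed_losses_kwh: float) -> float:
    """Net daily output when thermal losses and parasitic loads are roughly constant.

    Gross output scales with DNI; the fixed daily losses do not, so below
    a threshold DNI the plant delivers nothing (output is floored at zero).
    """
    gross = dni_kwh_m2 * aperture_m2 * gross_efficiency
    return max(0.0, gross - fixed_losses_kwh)


# Hypothetical plant parameters, for illustration only
APERTURE = 500_000      # m2 of mirrors
EFFICIENCY = 0.20       # solar-to-electric before fixed losses
FIXED_LOSSES = 200_000  # kWh/day of thermal losses + parasitic consumption

threshold = FIXED_LOSSES / (APERTURE * EFFICIENCY)
print(f"threshold DNI: {threshold:.1f} kWh/m2/day")
for dni in (1.5, 3.0, 6.0, 9.0):
    print(dni, net_daily_output_kwh(dni, APERTURE, EFFICIENCY, FIXED_LOSSES))
```

In this sketch, doubling DNI from 3 to 6 kWh/m2/day quadruples net output, which is why the quality of DNI matters so much more for CSP than for devices without large fixed losses.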

High DNI is found in hot and dry regions with reliably clear skies and low aerosol optical depths, which are typically in subtropical latitudes from 15° to 40° north or south. Closer to the equator, the atmosphere is usually too cloudy, especially during the rainy season. At higher latitudes, weather patterns also produce frequent cloudy conditions, and the sun’s rays must pass through more atmosphere mass to reach the power plant. DNI is also significantly higher at higher elevations, where absorption and scattering of sunlight due to aerosols can be much lower. Thus, the most favorable areas for CSP resource are in North Africa, southern Africa, the Middle East, north-western India, the south-western United States, northern Mexico, Peru, Chile, the western parts of China and Australia. Other areas that are suitable include the extreme south of Europe and Turkey, other southern US locations, central Asian countries, places in Brazil and Argentina, and some other parts of China.

Areas with sufficient direct irradiance for CSP development are usually arid and many lack water for condenser cooling (Box 1). Dry-cooling technologies for steam turbines are commercially available, so water scarcity is not an insurmountable barrier, but it leads to an efficiency penalty and an additional cost. Wet-dry hybrid cooling can significantly improve performance, with water consumption limited to heat waves.

Almost all existing CSP plants use some fossil fuel as back-up, to remain dispatchable even when the solar resource is low and to guarantee an alternative thermal source that can compensate night thermal losses, prevent freezing and assure a faster start-up in the early morning.

Investment costs for CSP plants have remained high, from USD 4,000/kW to 9,000/kW, depending on the solar resource and the capacity factor, which also depends on the size of the storage system and the size of the solar field, as reflected by the solar multiple.

Costs were expected to decrease as CSP deployment progressed, following a learning rate of 10% (i.e., a 10% cost reduction for each doubling of cumulative capacity). This decrease has taken a long time to materialize, however, because market opportunities for CSP plants have diminished and the cost of materials has increased, particularly in the most mature parts of the plants, the power block and balance of plant (BOP). Other causes are the dominance of a single technology (trough plants with oil as heat transfer fluid).
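A learning rate translates into costs through the usual experience-curve formula: cost falls by the learning rate for every doubling of cumulative capacity. This Python sketch applies the 10% rate to a hypothetical starting cost of USD 6,000/kW, picked from within the range quoted above.

```python
import math


def cost_after_growth(initial_cost: float, initial_capacity: float,
                      cumulative_capacity: float, learning_rate: float = 0.10) -> float:
    """Experience curve: cost falls by `learning_rate` per doubling of cumulative capacity."""
    doublings = math.log2(cumulative_capacity / initial_capacity)
    return initial_cost * (1 - learning_rate) ** doublings


# Hypothetical: USD 6,000/kW today; cumulative capacity grows 1x, 2x, 4x, 8x, 16x
for factor in (1, 2, 4, 8, 16):
    print(factor, round(cost_after_growth(6000, 1.0, factor)))
```

Even four doublings (sixteen times today’s cumulative capacity) cut costs by only about a third in this sketch, which is why slow deployment stalls cost reductions.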

The few larger plants that have been or are being built elsewhere are either the first of their kind in the world, with large development costs and technology risks (e.g., in the United States),

Levelized cost of electricity (LCOE) of STE varies widely with the location, technology, design and intended use of plants. The location determines the quantity and quality of the solar resource (Box 1), atmospheric attenuation at ground level, variations in temperature that affect efficiency (e.g., cold at night increases self-consumption, warmth during daylight reduces heat losses but also thermodynamic cycle efficiency) and the availability of cooling water. A plant designed for peak or mid-peak generation with a large turbine for a relatively small solar field will generate electricity at a higher cost than a plant designed for base load generation with a large solar field for a relatively small turbine. LCOE, while providing useful information, does not represent the entire economic balance of a CSP plant, which depends on the value of the generated STE.
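A simplified LCOE can be computed by annualizing the investment with a capital recovery factor, adding fixed operating costs, and dividing by annual output. The Python sketch below ignores fuel, taxes, degradation and construction time; the discount rate, lifetime, O&M cost and capacity factors are hypothetical, and only the capex range comes from the IEA figures quoted earlier.

```python
def capital_recovery_factor(rate: float, years: int) -> float:
    """Share of the initial investment to be repaid each year (annuity factor)."""
    if rate == 0:
        return 1 / years
    growth = (1 + rate) ** years
    return rate * growth / (growth - 1)


def simple_lcoe_usd_per_mwh(capex_usd_per_kw: float, capacity_factor: float,
                            fixed_om_usd_per_kw_yr: float, rate: float, years: int) -> float:
    """Levelized cost ignoring fuel, degradation, taxes and construction time."""
    annual_cost_per_kw = (capex_usd_per_kw * capital_recovery_factor(rate, years)
                          + fixed_om_usd_per_kw_yr)
    annual_mwh_per_kw = 8760 * capacity_factor / 1000
    return annual_cost_per_kw / annual_mwh_per_kw


# Hypothetical inputs: 7% discount rate, 30-year life, USD 70/kW-yr fixed O&M.
# Low capex with little storage (30% CF) vs. high capex with large storage (50% CF).
for capex, cf in ((4000, 0.30), (9000, 0.50)):
    print(capex, cf, round(simple_lcoe_usd_per_mwh(capex, cf, 70, 0.07, 30)))
```

Here the high-capex plant costs more than twice as much to build but only about 20% more per MWh, because storage and a bigger solar field spread the investment over more output.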

A recent CSP plant in the United States secured a power purchase agreement (PPA) at USD 135/MWh, but taking the investment tax credit into account, the actual remuneration is about USD 190/MWh. The US DoE’s SunShot program expects more rapid cost reductions based on current trends, and even aims for an LCOE of USD 60/MWh as soon as 2020 [dream on…]

Barriers encountered, overcome or outstanding

Developers have encountered several barriers to establishing CSP plants. These include insufficiently accurate DNI data; inaccurate environmental data; policy uncertainty; difficulties in securing land, water and connections; permitting issues; and expensive financing, leading to difficult financial closure. Inaccurate DNI data can lead to significant design errors. Ground-level atmospheric turbidity, dirt, sand storms and other weather characteristics or events may seriously interfere with CSP technologies. Permits for plants have been challenged in courts because of concerns about their effects on wildlife, biodiversity and water use. Some countries prohibit the large-scale use of synthetic oil, some molten salts, or both as HTF.

The most significant barrier is the large up-front investment required. The most mature technology, parabolic troughs (PT) with oil as HTF, with over 200 cumulative years of operation, may have limited room for further cost reductions, as the maximum temperature of the HTF limits the possible increase in efficiency and imposes high costs on thermal storage systems. Other technologies offer greater prospects for cost reductions but are less mature and therefore harder to finance. In countries with no or little experience of the technology, financing circles fear risks specific to each country.

In the United States, the loan guarantee program of the DoE has played a key role in overcoming financing difficulties and facilitating technology innovation.

Medium-term outlook

There are no new CSP projects in Spain, as incentives have been cut.

Plants in the approval process or ready to start construction represent 20 MW in France and 115 MW in Italy, while other projects are under development. The Italian environment legislation does not allow for extensive use of oil in trough plants, limiting the technology options to more innovative designs, such as direct steam generation (DSG) or molten salts as HTF. Projects that would produce several gigawatts are still under consideration or development in the United States, although not all will succeed in obtaining the required permits, PPAs, connections, and financing.

Current average LCOE is high because most existing plants have been built in Spain, which has relatively weak DNI. [my comment: if there is money for energy projects, it’s spent regardless of how expensive and foolish they are: look at all the fracked natural gas produced by companies deeply in debt, the massive building of solar PV and CSP in Spain, ethanol subsidies, and all kinds of wasteful projects (and research) across the board. I think this is why there’s no funding for EROI research: nobody wants to know! Plus foolish projects provide jobs, and for Democrats, providing “green” jobs matters more than whether or not a project is a good idea. And why not? As long as there is oil, we can build cities like Las Vegas in the desert that will be abandoned as soon as 2024 or whenever Lake Mead dries up, parking lots, cheap ugly housing projects, and so on]

As deployment intensifies in the southwestern United States and spreads to North Africa, South Africa, Chile, Australia and the Middle East, better resources will be used, improving performance.

Table 4: Projections of LCOE for new-built CSP plants with storage in the hi-Ren Scenario

The possible role of small-scale CSP devices (from 100 kW to a few MW), off-grid or serving in mini-grids, has not been included in the ETP model. There is too little industrial experience of such systems to make informed cost assumptions, whether the systems are based on parabolic troughs (PT), linear Fresnel reflectors (LFR), parabolic dishes, Scheffler dishes or small towers, using organic Rankine cycle turbines, micro gas-turbines or various reciprocating engines. If they allow thermal storage or fuel backup, small-scale CSP systems have to compete against PV with battery storage or fuel backup. They may find a role, although the fact that CSP technology seems to benefit more than PV from economies of scale suggests that small-scale CSP systems may face a greater competitive challenge than large-scale ones. Finding local skills for maintenance may also be challenging in remote, off-grid areas.

Storage is a particular challenge in CSP plants that use direct steam generation (DSG). Because water evaporation is isothermal, unlike sensible heat addition or removal in the salt, a round-trip storage cycle would result in severe steam temperature and pressure drops, thereby destroying the efficiency of the thermodynamic cycle in discharge mode. Storing latent heat of saturated steam in pressurised vessels is expensive and provides no scale effect on cost. One option would use three-stage storage devices that preheat the water, evaporate the water and superheat the steam. Stages 1 and 3 would be sensible heat storage, in which the temperature of the storage medium changes. Stage 2 would best be latent heat storage, in which the state of the storage medium changes, using some phase change material. Another option could be to use liquid phase-change materials. The growing relevance of thermal storage in the context of intense competition from cheap PV favors using molten salts as both the heat transfer fluid and the storage medium (termed “direct storage”). If DSG spares heat exchangers for steam generation, the use of molten salts as HTF spares heat exchangers for storage. Salts are less costly than oil. Using salts allows the temperature and pressure of the steam to be raised from 380°C to 530-550°C and from 10 to 12-15 megapascals (MPa) in comparison with oil as HTF, increasing the efficiency of the power block from 39% to 44-45% (Lenzen, 2014). Thanks to higher temperature differences between hot and cold salts (currently used salt mixtures usually solidify below 238°C), plants using molten salts as HTF need only about one-third as much salt as trough plants using oil as HTF, for the same storage capacity. This lowers the storage system costs, which represent about 12% of the overall plant cost for seven-hour storage of a trough plant.
Also, the “return efficiency” of thermal storage, at about 93% with indirect storage (in which heat exchangers reduce the working temperature), is increased to 98% with direct storage. Finally, another advantage of molten salts as HTF over steam is that heat transfer can be carried out at low pressure with thin-wall solar receivers, which are cheaper and more effective. Overall, the substitution of molten salts for oil in CSP would allow a 30% LCOE reduction, according to Schott, the leading manufacturer of solar receiver tubes (Lenzen, 2014). Several companies are developing the use of molten salts as HTF in linear systems, and have built or are building experimental or demonstration devices. One challenge is to reduce the expense required to keep the salts warm enough (usually above 290°C) at all times for good viscosity in long tubes, and to protect the field against freezing.
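The salt saving follows from the sensible-heat relation E = m × cp × ΔT: for the same stored energy, the salt mass is inversely proportional to the temperature swing between the tanks. This Python sketch assumes a specific heat of about 1.5 kJ/kg·K for nitrate “solar salt”, a 100 K swing for indirect storage (as quoted for the Spanish plants), and a 290°C cold tank with an assumed 565°C hot tank for salt-HTF plants; the 1,000 MWh storage size is hypothetical.

```python
SALT_CP_KJ_PER_KG_K = 1.5  # assumed specific heat of nitrate "solar salt", approximate


def salt_tonnes(thermal_mwh: float, delta_t_k: float,
                cp_kj_per_kg_k: float = SALT_CP_KJ_PER_KG_K) -> float:
    """Salt mass for sensible-heat storage: E = m * cp * dT  ->  m = E / (cp * dT)."""
    energy_kj = thermal_mwh * 3.6e6                         # 1 MWh = 3.6e6 kJ
    return energy_kj / (cp_kj_per_kg_k * delta_t_k) / 1000  # kg -> tonnes


STORED_MWH_THERMAL = 1000  # hypothetical storage size
oil_htf = salt_tonnes(STORED_MWH_THERMAL, delta_t_k=100)   # indirect storage, ~100 K swing
salt_htf = salt_tonnes(STORED_MWH_THERMAL, delta_t_k=275)  # assumed 290 C cold / 565 C hot
print(f"oil-HTF plant: {oil_htf:,.0f} t, salt-HTF plant: {salt_htf:,.0f} t, "
      f"ratio {oil_htf / salt_htf:.2f}")
```

The ratio of about 2.75 comes straight from the ratio of the temperature swings, consistent with the roughly threefold saving in salt described above.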

Apart from the fundamental choice between DSG and molten salts for HTF, towers currently also offer a great diversity of designs – and present various trade-offs. The first relates to the size (and number) of heliostats that reflect the sunlight onto the receivers atop the tower. Heliostats vary greatly in size, from about 1 m2 to 160 m2. The small ones can be flat and offer little surface to winds. The larger ones need several mirrors that are curved to send a focused image of the sun to the central receiver, and need strong support structures and motors to resist winds. For similar collected energy ranges, however, small heliostats need to be grouped by the thousand, multiplying the number of motors and connections. Manufacturers and experts still have divided views about the optimum size. Heliostats need to be distanced from one another to reduce losses arising when a heliostat intercepts part of the flux received (“shading”) or reflected (“blocking”) by another. While linear systems require flat land areas, central receiver systems may accommodate some slope, or even benefit from it as it could reduce blocking and shadowing, and allow increasing heliostat density. Algorithmic field optimization may help reduce environmental impacts and required ground leveling work while maximizing output (Gilon, 2014).

In low latitudes, heliostat fields tend to be circular and surround the central receiver, while in higher latitudes they tend to be concentrated on the polar side of the tower. Larger fields tend to be more circular to limit the maximum receiver-to-heliostat distance and minimise atmospheric attenuation.

Proper aiming strategy must be ensured by the heliostat field’s control system in order to optimise the solar flux map on the receiver, thereby allowing the highest solar input while avoiding any local overheating of the receiver tubes. This is more difficult with DSG receivers. The heat flux on the different types of solar panel of a DSG receiver differs significantly: superheater panels (poorly cooled by superheated steam) receive a much lower flux than evaporator and preheater panels. Another important design choice relates to the number of towers for one turbine. Heliostats that are in the last rows far from the tower need to be very precisely pointed towards it, and lose efficiency as the light must make a long trip near ground level. They also have greater geometrical (“cosine”) optical losses.
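The cosine loss, and the preference for polar-side fields at higher latitudes, can be illustrated with vector geometry: a flat heliostat’s normal must bisect the directions to the sun and to the receiver, so the cosine factor is cos(θ/2), where θ is the angle between those two directions. In this Python sketch the tower height, heliostat positions and sun position are hypothetical, and the function names are illustrative.

```python
import math


def unit(v):
    n = math.sqrt(sum(c * c for c in v))
    return tuple(c / n for c in v)


def cosine_efficiency(sun_elev_deg, sun_az_deg, heliostat_xy, receiver_height_m):
    """Cosine factor of a flat heliostat aiming at a tower receiver.

    Coordinates: x east, y north, z up; tower base at the origin.
    Azimuth is measured clockwise from north (180 deg = due south).
    """
    el, az = math.radians(sun_elev_deg), math.radians(sun_az_deg)
    to_sun = (math.cos(el) * math.sin(az), math.cos(el) * math.cos(az), math.sin(el))
    x, y = heliostat_xy
    to_receiver = unit((-x, -y, receiver_height_m))
    cos_theta = sum(a * b for a, b in zip(to_sun, to_receiver))
    # The mirror normal bisects the two directions: cos(theta / 2)
    return math.sqrt(max(0.0, (1 + cos_theta) / 2))


# Noon sun due south at 50 deg elevation; 150 m tower; heliostats 200 m north or south
north = cosine_efficiency(50, 180, (0, 200), 150)   # polar-side heliostat
south = cosine_efficiency(50, 180, (0, -200), 150)  # equator-side heliostat
print(f"north field: {north:.2f}, south field: {south:.2f}")
```

With these assumptions the polar-side heliostat keeps about 0.99 of its potential collection while the equator-side one keeps only about 0.69, which is why fields at higher latitudes are concentrated on the polar side of the tower.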

At over 1 million m2, the solar field associated with the 110 MW tower built by SolarReserve with 10-hour storage at Crescent Dunes (Nevada, United States) is perhaps close to the maximum efficient size.
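Combining this field size with the expected daily output quoted in the preface (0.001329 TWh, or 1.329 GWh, per day) gives a rough annual-average solar-to-electric efficiency. The annual-average DNI values in this Python sketch are hypothetical, and because the field is “over” 1 million m2, the true figure would be somewhat lower than computed.

```python
FIELD_M2 = 1.0e6            # "over 1 million m2" of heliostats, quoted above
DAILY_OUTPUT_KWH = 1.329e6  # 0.001329 TWh/day expected average, quoted in the preface


def implied_efficiency(avg_dni_kwh_m2_day: float) -> float:
    """Annual-average solar-to-electric efficiency implied by the expected output."""
    return DAILY_OUTPUT_KWH / (FIELD_M2 * avg_dni_kwh_m2_day)


for dni in (6.0, 7.0, 8.0):  # hypothetical annual-average DNI values, kWh/m2/day
    print(dni, f"{implied_efficiency(dni):.1%}")
```

An annual-average DNI of 7 kWh/m2/day, for instance, would imply that roughly 19% of the sunlight falling on the mirrors ends up as electricity.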

The additional costs of building several towers may be made up for by the greater optical and thermal efficiencies of multitower design (Wieghardt et al., 2014). However, the optimal field size and number of towers may depend on the atmospheric turbidity of the site considered, which varies greatly among areas suitable for CSP plants. The Californian company eSolar proposes 100 MW molten salt power plants based on 14 solar fields and 14 receivers on top of monopole towers (similar to current large wind turbine masts) for one central dry-cooled power block with 13-hour thermal storage and 75% capacity factor (Tyner, 2013).

As the share of variable energy increases, base load plants, even if technically flexible (which not all are), will become less economically efficient as their utilization rate diminishes. At the same time, more peaking and mid-merit plants become necessary. Below a certain load factor (about 2,000 full-load hours per year), open-cycle gas turbines become a better economic choice than combined-cycle plants, but they are less energy-efficient as they generate large amounts of waste heat.
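The crossover described here is what a simple screening-curve comparison produces: each technology’s yearly cost per kW is a fixed cost plus a running cost that grows with operating hours, and the cheaper option switches where the two lines cross. The cost figures in this Python sketch are hypothetical, picked so the crossover lands near the 2,000 hours quoted.

```python
def annual_cost_per_kw(fixed_usd_per_kw_yr: float, variable_usd_per_kwh: float,
                       hours: float) -> float:
    """Screening curve: yearly cost of 1 kW of capacity run for `hours` at full load."""
    return fixed_usd_per_kw_yr + variable_usd_per_kwh * hours


def crossover_hours(fixed_a: float, var_a: float, fixed_b: float, var_b: float) -> float:
    """Full-load hours at which two technologies cost the same per kW-year."""
    return (fixed_b - fixed_a) / (var_a - var_b)


# Hypothetical costs: open-cycle GT is cheap to build but burns more fuel per kWh
OCGT = (60.0, 0.10)   # USD/kW-yr, USD/kWh
CCGT = (120.0, 0.07)

h = crossover_hours(*OCGT, *CCGT)
print(f"crossover at {h:.0f} full-load hours")
for hours in (1000, h, 4000):
    print(f"{hours:5.0f} h: OCGT {annual_cost_per_kw(*OCGT, hours):.0f}, "
          f"CCGT {annual_cost_per_kw(*CCGT, hours):.0f} USD/kW-yr")
```

Below the crossover the plant that is cheaper to build wins; above it, the more efficient plant’s fuel savings pay back its higher capital cost.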

Open-cycle gas turbines could, however, be integrated with a CSP plant with storage whose steam turbine is not used at a very high capacity factor. When the sun does not shine, the otherwise wasted heat could be collected to a large extent in the hot tank of a two-tank molten-salt system. This energy could afterwards be directed to the steam turbine to deliver electricity whenever requested. If more power is needed when the sun shines sufficiently to run the steam turbine by itself, the heat from the gas turbine could be directed to the thermal storage. In both cases, a large part of the waste heat will be used. This concept differs from the existing integrated solar combined-cycle (ISCC) plants, in which solar only provides a complement, as the presence of thermal storage allows for a complete reversal of the proportion of solar and gas, which remains a backup, though a more efficient one (Crespo, 2014). The Hysol project, funded by the European Union’s Seventh Program for research, technological development and demonstration, aims to demonstrate the viability of the concept. Similarly, in areas with both high wind penetration and CSP plants, some thermal storage, which is equipped with electric heaters for security reasons, could be used in winter to reduce curtailment from excess wind power.

Molten salts decompose at higher temperatures, while corrosion limits the temperatures of steam turbines. Higher temperatures and efficiencies could rest on the use of fluoride liquid salts as HTFs at temperatures of 700°C to 850°C.
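Why higher temperatures matter can be seen from the Carnot limit, 1 − T_cold / T_hot (in kelvin), which bounds any heat engine. This Python sketch uses hot-side temperatures from this section and assumes a 40°C heat-rejection temperature, a plausible but hypothetical value for a hot, dry site.

```python
def carnot_limit(hot_c: float, cold_c: float = 40.0) -> float:
    """Upper bound on heat-engine efficiency: 1 - T_cold / T_hot, in kelvin."""
    return 1 - (cold_c + 273.15) / (hot_c + 273.15)


# Steam from oil-HTF plants (380 C) and salt-HTF plants (550 C), as quoted in this
# section, and the 700-850 C range suggested for fluoride salts
for hot in (380, 550, 700, 850):
    print(f"{hot} C: Carnot limit {carnot_limit(hot):.0%}")
```

Real power blocks fall well short of these bounds (the 39% and 44-45% quoted earlier), but the bound rises with temperature, which is the motivation for hotter heat transfer fluids.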

There are a number of potential pathways to solar fuels. The straightforward thermolysis of water is the most difficult, as it requires temperatures above 2,200°C and may produce an explosive mixture of hydrogen and oxygen. The division of the single-step water-splitting reaction into a number of sub-reactions opens up the field of so-called thermochemical cycles for H2 production. The necessary reaction temperature can be decreased even below 1,000°C, resulting in intermediate solid products like metals (e.g., aluminium, magnesium, or zinc), metal oxides, metal halides or sulphur oxides. The different reaction steps can be separated in time and place, offering possibilities for long-term storage of the solids and their use in transportation. These thermochemical cycles are also able to split CO2 into CO and oxygen. If mixtures of water and CO2 are used, even synthesis gas (mainly H2 and CO) can be produced, which can be further processed to synfuels, for example by the Fischer-Tropsch process.

Concentrated solar radiation can also be used to upgrade carbonaceous materials. The most developed process is the steam reforming of methane to produce synthesis gas; the methane can come from natural gas or biogas. Methane can also be cracked into hydrogen and carbon, producing a gaseous and a solid product. However, the required process temperature is extremely high, and a homogeneous carbon product is unlikely to be produced because of the intermittent solar radiation conditions. Additionally, there is a mismatch between the huge demand for hydrogen and the low demand for high-value carbon, such as carbon black or advanced carbon nanotubes.
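The two routes can be written as overall reactions (standard stoichiometry, intermediate steps omitted). The molar masses in the last line show why cracking ties hydrogen output to a large carbon output: roughly 3 kg of carbon for every kilogram of hydrogen.

$$
\begin{aligned}
\text{Steam reforming:}\quad & \mathrm{CH_4} + \mathrm{H_2O} \rightarrow \mathrm{CO} + 3\,\mathrm{H_2} \\
\text{Cracking:}\quad & \mathrm{CH_4} \rightarrow \mathrm{C} + 2\,\mathrm{H_2} \\
\text{Carbon per hydrogen (cracking):}\quad & \frac{m_\mathrm{C}}{m_\mathrm{H_2}} = \frac{12.01\ \mathrm{g}}{2 \times 2.016\ \mathrm{g}} \approx 2.98
\end{aligned}
$$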

Hydrogen produced in concentrating solar chemical plants could be blended with natural gas and thus used in today's energy system. Town gas, which prevailed before natural gas became widespread, contained up to 60% hydrogen by volume, or about 20% by energy content. Such a blend could be used for various purposes in industry, households and transportation, reducing emissions of CO2 and nitrous oxides. Gas turbines in integrated gasification combined cycle (IGCC) power plants can burn a gas mix containing 90% hydrogen by volume. Many existing pipelines could, with some adaptation, transport such a blend from sunny places to large consumption centres (e.g. from North Africa to Europe).

Solar-produced hydrogen could also find niche markets today by replacing hydrogen produced by steam reforming of natural gas in its current uses, such as manufacturing fertilisers and removing sulphur from petroleum products. Using heat from concentrated sunlight to decompose hydrogen sulphide into hydrogen and sulphur would regenerate hydrogen and could save significant amounts of still gas in refineries for other purposes. Coal could be used together with methane as feedstock to deliver dimethyl ether (DME), through solar-assisted steam reforming of natural gas, coal gasification under oxygen, and two-step water splitting. DME could be used as a liquid fuel; its combustion would entail CO2 emissions similar to those from burning conventional petroleum products, but its life-cycle emissions would be significantly lower than those of other coal-to-liquid fuels.

Besides solar fuels, CSP technology could find a great variety of uses in providing high-temperature process heat or steam, such as for enhanced oil recovery and mining applications (where CSP is already in use), smelting of aluminium and other metals, and industries such as food and beverages, textiles and pharmaceuticals. Various forms of cogeneration with STE can also be considered. For example, sugar plants require high-temperature steam in spring, when the solar resource is at its maximum but electricity demand is at its minimum. Solar fields providing steam for sugar plants could run a turbine and generate STE for the rest of the year.

STE is not broadly competitive today, and will not become so until it benefits from strong and stable policy frameworks and appropriate support to minimise investors' risks and reduce capital costs.

As with any large industrial project, STE projects require several permits, often issued by many different government jurisdictions at various geographical levels (local, regional, state, federal or national) and by many branches or agencies of each. Each may protect different interests, all of them legitimate.

Future values of PV and STE in California. Researchers at the National Renewable Energy Laboratory (NREL) in the United States have studied the future total values (operational value plus capacity value) of STE with storage and of PV plants in California in two scenarios: one with 33% renewables in the mix (the renewable portfolio standard for the end of 2020), including about 11% PV, and another with 40% renewables (under consideration by California's governor), including about 14% PV. In both cases, over 1 GW of electricity storage is available on the grid. The main results indicate that at 33% renewable penetration, most of the gap in favour of STE comes from its greater capacity value, which avoids the cost of building additional thermal generators to meet demand (Table 5). At 40% renewable penetration, the value of STE increases slightly, but the value of PV drops significantly, mostly reflecting the drop in its own capacity value (Jorgenson et al., 2014). For investment decisions and planning, system values are as important as LCOE.

Table 6: Total value in two scenarios of renewables penetration in California (value components for STE with storage and PV, USD/MWh)

The built-in storage capability of CSP is cheaper and more effective than battery storage and pumped-hydropower storage, with a round-trip efficiency above 95%, versus about 80% for most competing technologies. Thermal storage separates the collection of heat (during the day) from the generation of electricity (at will). This capability has immediate value in countries where power demand increases significantly when the sun sets, driven in part by lighting requirements. In many such countries, the electricity mix, often dominated by coal during the day, becomes dominated after sunset by peaking technologies, often based on natural gas or oil products.
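Taking the quoted round-trip efficiencies at face value (and setting aside that a thermal store returns heat while a battery returns electricity), a few lines of arithmetic show what the gap means per cycle: the thermal store loses about 5% of what goes in, the alternatives about 20%, so roughly four times as much. The 1,000 MWh input and the helper names are hypothetical.

```python
def recovered(energy_in_mwh: float, round_trip_efficiency: float) -> float:
    """Energy returned after one charge/discharge cycle."""
    if not 0.0 < round_trip_efficiency <= 1.0:
        raise ValueError("round-trip efficiency must be in (0, 1]")
    return energy_in_mwh * round_trip_efficiency


def lost(energy_in_mwh: float, round_trip_efficiency: float) -> float:
    """Energy lost in one charge/discharge cycle."""
    return energy_in_mwh - recovered(energy_in_mwh, round_trip_efficiency)


stored = 1000.0  # hypothetical MWh put into storage during the day
for label, eff in (("CSP thermal storage", 0.95), ("typical competing storage", 0.80)):
    print(f"{label}: {recovered(stored, eff):.0f} MWh returned, {lost(stored, eff):.0f} MWh lost")
```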

The greatest possible expansion of PV, which implies its dominance over all other sources during a significant part of the day, creates difficult technical and economic challenges for low-carbon base-load technologies such as nuclear power and fossil fuels with CCS. Natural gas is better suited to daily "stop-and-go" operation with rapid ramps up and down, and is more economical for mid-merit operation (between about 2,000 and 4,000 full-load hours a year).
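Full-load hours convert directly into a capacity factor (annual output divided by what the plant would produce at rated power all year), which makes the mid-merit band easier to compare with other figures in this post. The sketch assumes a 365-day year; the 2,000 to 4,000 hour band works out to roughly 23% to 46%.

```python
HOURS_PER_YEAR = 8760  # 365 days x 24 h (non-leap year)


def capacity_factor(full_load_hours: float, hours_in_year: float = HOURS_PER_YEAR) -> float:
    """Share of the year a plant would need to run at rated output to produce its annual energy."""
    return full_load_hours / hours_in_year


for hours in (2000, 4000):
    print(f"{hours} full-load hours ~ {capacity_factor(hours):.1%} capacity factor")
```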

Changes in the rules applicable to investments already made or under way can have long-lasting deterrent effects on investment if they significantly modify the prospects for economic returns. This is precisely what has happened over the last few years in Spain, where a series of measures was adopted to reduce the return on investment of existing CSP plants. The high risk of losing investors' confidence may have been deemed acceptable, as these measures followed the decision to stop CSP deployment. However, it may have detrimental effects on future investments in CSP plants, on other investments in the energy sector, on investments in any other sector that requires government involvement, and on investments in other countries.

Financing. CSP plants, like most renewable energy plants, are very capital-intensive, requiring large upfront expenditures. Financing is therefore difficult, especially in new, immature markets and for new, emerging sub-technologies. In the United States, some private investors have large amounts of money available and might be willing to invest in clean energy for a variety of reasons; but even in this context, the risks may have appeared too high for large, innovative CSP projects, costing around USD 1 billion, to materialize without the loan guarantee program of the US DOE. This program has been essential to the renaissance of CSP in the United States, allowing projects to access debt at very low cost from a US government bank and facilitating financial closure of large projects at an acceptable weighted average cost of capital (WACC).
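To see why access to low-cost debt matters so much for a plant costing around USD 1 billion, the standard capital recovery factor converts an upfront cost into an equal annual payment at a given WACC. The 25-year life and the 4% and 8% WACC values below are illustrative assumptions, not figures from the source; with them, the annual capital charge is roughly USD 64 million versus USD 94 million, about 46% more at the higher rate.

```python
def capital_recovery_factor(rate: float, years: int) -> float:
    """Fraction of the upfront cost paid each year to repay it, with interest, over `years`."""
    if years <= 0:
        raise ValueError("years must be positive")
    if rate == 0:
        return 1.0 / years  # no interest: straight-line repayment
    growth = (1.0 + rate) ** years
    return rate * growth / (growth - 1.0)


capex_usd = 1e9   # "around USD 1 billion", from the text
life_years = 25   # assumed economic life (illustrative)
for wacc in (0.04, 0.08):  # assumed low-cost vs commercial WACC (illustrative)
    annual = capex_usd * capital_recovery_factor(wacc, life_years)
    print(f"WACC {wacc:.0%}: about USD {annual / 1e6:.0f} million per year")
```

Because almost all of a CSP plant's lifetime cost is this upfront capital, the financing rate moves the cost of each MWh nearly in proportion to the annual charge.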

In other countries, such as India, Morocco and South Africa, public low-cost lending has been essential for jump-starting the deployment of CSP. In India and South Africa, private banks would not have provided capital for the very long maturities involved. In Morocco, the presence of a government agency as equity partner significantly reduced the perception of policy risks among other partners. In Morocco and South Africa, international finance institutions provided concessional grants that reduced the overall costs of large CSP projects.

Subsidizing renewable energy projects through long-term and/or low-cost debt-related policies could reduce total subsidies compared with per-kWh support. However, this transfers the burden of high capital intensity to governments, which may not have enough money at hand, and so risks slowing deployment. Interest subsidies and/or accelerated depreciation have much higher one-year budget efficiency.

Research is under way to test and evaluate methods of measuring DNI accurately using lower-cost instrumentation, and to produce long-term, high-quality DNI data sets by merging long-term satellite-derived data of moderate accuracy with highly accurate ground-based measurements that may cover only a year or less. This research also includes important studies on sunshape and circumsolar radiation, and how these factor into both DNI measurements and STE system performance. In addition, satellite-based methods for estimating DNI are constantly improving and represent a reliable and viable way of choosing the best sites for STE plants. Furthermore, the ability to accurately forecast DNI levels, from a few hours to a few days ahead, is constantly improving and will be an important tool for utilities operating STE systems.
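One common, simple way to merge the two data sources (often called site adaptation, or measure-correlate-predict) is to regress the short ground record on the concurrent satellite estimates and apply the fitted correction to the long satellite record. The sketch below assumes a plain linear correction; the function names and the daily DNI values are hypothetical, and operational methods are usually more elaborate (for example, correcting the full distribution rather than only the mean relationship).

```python
from statistics import fmean


def fit_linear_correction(satellite, ground):
    """Least-squares fit of ground ~ slope * satellite + intercept over the overlap period."""
    if len(satellite) != len(ground) or len(satellite) < 2:
        raise ValueError("need at least two paired observations")
    mean_x, mean_y = fmean(satellite), fmean(ground)
    sxx = sum((x - mean_x) ** 2 for x in satellite)
    if sxx == 0:
        raise ValueError("satellite values must not all be equal")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(satellite, ground))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def correct_series(satellite_long_term, slope, intercept):
    """Apply the correction to the long satellite record; DNI cannot be negative."""
    return [max(0.0, slope * x + intercept) for x in satellite_long_term]


# Hypothetical daily DNI (kWh/m2/day) for the short period covered by both sources.
sat_overlap = [5.0, 6.0, 7.0, 8.0]
ground_overlap = [5.3, 6.4, 7.5, 8.6]
a, b = fit_linear_correction(sat_overlap, ground_overlap)
# Hypothetical values from the multi-year satellite record, corrected toward the ground data.
print(correct_series([4.5, 6.5, 7.2], a, b))
```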

Abbreviations. ARRA: American Recovery and Reinvestment Act; CCS: carbon capture and storage; CO2: carbon dioxide; CPI: Climate Policy Initiative; CSF: concentrated solar fuels; CSP: concentrating solar power; CPV: concentrating photovoltaic; CRS: central receiver system; CTF: Clean Technology Fund; DC: direct current; DII: Desertec Industry Initiative; DLR: Forschungszentrum der Bundesrepublik Deutschland für Luft- und Raumfahrt (German Aerospace Centre); DME: dimethyl ether; DNI: direct normal irradiance; DSG: direct steam generation; EDF: Électricité de France; EIB: European Investment Bank; EPC: engineering, procurement and construction; ETP: Energy Technology Perspectives; EU: European Union; EUR: euro; FiT: feed-in tariff; FiP: feed-in premium; G8: Group of Eight; GHG: greenhouse gas(es); GHI: global horizontal irradiance; GNI: global normal irradiance; Gt: gigatonnes; GW: gigawatt (1 million kW); GWh: gigawatt hour (1 million kWh); Hi-Ren: high renewables (Scenario); HTF: heat transfer fluid; HVDC: high-voltage direct current; IA: implementing agreement; IEA: International Energy Agency; IFI: international financial institution; IGCC: integrated gasification combined cycle; IRENA: International Renewable Energy Agency; ISCC: Integrated Solar Combined-Cycle (plant); JRC: Joint Research Centre; kW: kilowatt; kWh: kilowatt hour; LCOE: levelized cost of electricity; LFR: linear Fresnel reflectors; MW: megawatt (1 thousand kW); MWe: megawatt electrical; MWh: megawatt hour (1 thousand kWh); MWth: megawatt thermal; NGO: non-governmental organisation; NREAP: national renewable energy action plan; NREL: National Renewable Energy Laboratory (United States); OECD: Organisation for Economic Co-operation and Development; O&M: operation and maintenance; PPA: power purchase agreement; PT: parabolic trough; TWh: terawatt hour (1 billion kWh).

IEA (2014a), Technology Roadmap: Solar Photovoltaic Energy, 2014 Edition, OECD/IEA, Paris. IEA (2014b), Energy Technology Perspectives 2014, OECD/IEA, Paris. IEA (2014c), Technology Roadmap: Energy Storage, OECD/IEA, Paris. IEA (2014d), Medium-Term Renewable Energy Market Report, OECD/IEA, Paris. IEA (2014e), The Power of Transformation: Wind, Sun and the Economics of Flexible Power Systems, OECD/IEA, Paris. IEA (2011), Solar Energy Perspectives, Renewable Energy Technologies, OECD/IEA, Paris. IEA (2010), Technology Roadmap: Concentrating Solar Power, OECD/IEA, Paris.

Jorgenson, J., P. Denholm and M. Mehos (2014), Estimating the Value of Utility-Scale Solar Technologies in California under a 40% Renewable Portfolio Standard, NREL/TP-6A20-61695, May.

Red Eléctrica de España (REE) (2014), The Spanish Electricity System – Preliminary Report 2013, REE, Madrid, Spain, http://www.ree.es/sites/default/files/downloadable/preliminary_report_2013.pdf.

REFERENCES

Deign, J. 2020. America’s Concentrated Solar Power Companies Have All but Disappeared. greentechmedia.com

DOE/NETL. August 28, 2012. Role of Alternative Energy Sources: Solar Thermal Technology Assessment. Department of Energy, National Energy Technology Laboratory.

Martin, C., et al. 2020. A $1 Billion Solar Plant Was Obsolete Before It Ever Went Online. SolarReserve’s Crescent Dunes received backing from Citigroup and the Obama Energy Department but couldn’t keep pace with technological advances. Bloomberg.

NREL. 2011a. Solar Radiation Data Manual for Flat Plate and Concentrating Collectors. National Renewable Energy Laboratory.

NREL. 2011b. U.S. Solar Radiation Resource Maps: Atlas for the Solar Radiation Data Manual for Flat Plate and Concentrating Collectors. National Renewable Energy Laboratory.

Maps: http://www.nrel.gov/gis/solar.html

NREL. 2012. Concentrating Solar Resource of the United States. National Renewable Energy Laboratory.

![Concentrating Solar Power Average Daily Solar Radiation Per Month, 1961-1990 (NREL 2011b)](https://energyskeptic.com/wp-content/uploads/2015/03/CSP-seasonal-6-USA-maps-jan-mar-may-jul-sep-nov.jpg)

Preface. Since 90% of international goods move by ship, I was curious about how much fuel ships burn. It's a lot: the very large container ship CMA CGM Benjamin Franklin above, which can carry 18,000 20-foot containers, carries approximately 4.5 million gallons of fuel oil, which takes up 16,000 cubic meters (FW 2020). That is as much fuel as 300,000 cars with 15-gallon tanks.
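A quick check of the arithmetic in the preface, assuming US gallons: 4.5 million gallons fills 300,000 tanks of 15 gallons and corresponds to roughly 17,000 cubic meters, in the same range as the 16,000 cubic meters cited. The variable names are illustrative.

```python
US_GALLON_M3 = 0.003785411784  # one US gallon in cubic meters (exact by definition)

fuel_gallons = 4.5e6     # fuel carried by the ship, from the text
car_tank_gallons = 15    # fuel tank size used in the comparison, from the text

car_tanks = fuel_gallons / car_tank_gallons
fuel_m3 = fuel_gallons * US_GALLON_M3
print(f"{car_tanks:,.0f} car tanks, {fuel_m3:,.0f} cubic meters")
```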
