Preface. One of the biggest hurdles to shifting from oil to “something else” is a chicken-or-egg problem: few people buy a new-fuel vehicle when there are few places to refuel it, so few such vehicles are made, and service stations don’t add the new fuel because there are few customers.

Fueling stations are just one piece of the distribution system. Ethanol also can’t flow in oil or gas pipelines because it corrodes them, so it has to be transported by truck or rail using diesel fuel (since trucks can’t burn ethanol or diesohol).

This is why it is hard for service stations to add E15, E85, hydrogen, or any new fuel for that matter, though of course each has its own unique costs and difficulties.

And heaven forbid you put in the wrong fuel. Gasoline cars cannot burn diesel fuel; doing so could require an engine rebuild. At best, the car chugs and lurches and has to be towed, and the owner is billed up to $1,500 to flush the tank, fuel lines, injectors, and fuel pump.

Alice Friedemann, www.energyskeptic.com, author of “When Trucks Stop Running: Energy and the Future of Transportation”, 2015, Springer, “Barriers to Making Algal Biofuels”, and “Crunch! Whole Grain Artisan Chips and Crackers”. Podcasts: Collapse Chronicles, Derrick Jensen, Practical Prepping, KunstlerCast 253, KunstlerCast 278, Peak Prosperity, XX2 report

Mr. Shane Karr, Vice President of Federal Government Affairs, the Alliance of Automobile Manufacturers

Only about 2% of gas stations have an E85 pump, and most are concentrated in the Midwest, where most corn ethanol is produced. This makes sense, because keeping production close to the point of sale is the most affordable approach. But even in states where E85 pumps are concentrated, actual sales of E85 have been low and stagnant. For example, in 2009 Minnesota had 351 stations with an E85 pump (the most of any state), but the average flexible fuel vehicle (FFV) in the state used just 10.3 gallons of E85 for the whole year.

Achieving the vehicle production mandates in H.R. 1687 by producing E85 FFVs would cost consumers well over $1 billion per year by the most conservative estimates. And these conservative estimates severely understate the cost of the bill’s vehicle mandates for two reasons: (1) H.R. 1687 requires a new kind of tri-fuel FFV that can run on gasoline, ethanol, methanol, or any combination of the three, and which does not exist today; and (2) it will be more expensive to produce tri-fuel FFVs that comply with H.R. 1687, especially given the forthcoming California Low Emission Vehicle (LEV III) and federal Tier 3 emissions standards, along with very aggressive fuel economy/GHG emission requirements through 2025.

Serial No. 112–159. July 10, 2012. The American energy initiative part 23: A focus on Alternative Fuels and vehicles. House of Representatives. 210 pages.

Jeffrey Miller, President of Miller Oil Company, Norfolk, VA.

On behalf of the National Association of Convenience Stores (NACS), before the House Energy and Commerce Committee, Subcommittee on Energy and Power, May 5, 2011, hearing on “The American Energy Initiative”

My name is Jeff Miller, President of Miller Oil Company headquartered in Norfolk, VA. As of December 31, 2010, the U.S. convenience and fuel retailing industry operated 146,341 stores of which 117,297 (80.2%) sold motor fuels. In 2009, our industry generated $511 billion in sales (one of every 28 dollars spent in the United States), employed more than 1.5 million workers and sold approximately 80% of the nation’s motor fuel.

To fully understand how fuels enter the market and are sold to consumers, it is important to know who is making the decision at the retail level of trade. Our industry is dominated by small businesses. In fact, of the 117,297 convenience stores that sell fuel, 57.5% of them are single-store companies – true mom and pop operations. Overall, nearly 75% of all stores are owned and operated by companies my size or smaller – and we all started with just a couple of stores.

Many of these companies – mine included – sell fuel under the brand name of their fuel supplier. This has created a common misperception in the minds of many policymakers and consumers that the large integrated oil companies own these stations. The reality is that the majors are leaving the retail marketplace and today own and operate fewer than 2% of the retail locations.

Taking a chance by offering a new candy bar is very different from switching my fueling infrastructure to accommodate a new fuel. So when a new fuel product becomes available, our decision to offer it to our customers takes more time. We need to know that our customers want to buy it, that we can generate enough return to justify the investment, and that we can sell the fuel legally. These are the fundamental issues that face the introduction of new renewable and alternative fuels.

Today, most of the fuel sold in the United States is blended with 10% ethanol. The transition to this fuel mix was not complicated, but it was not without challenges. When ethanol became more prevalent in my market, we realized what a powerful solvent it is. Ethanol forced us to clean our storage tanks and change our filters frequently to avoid introducing contaminants into the fuel tanks of our customers’ vehicles. Despite our best efforts, however, there were times when the fuel a customer purchased caused problems with their vehicles. In those situations, it was our responsibility to correct the damage. And while the transition to E10 required no significant changes to equipment or systems, it taught us some lessons that influence our decisions concerning new fuels.

Retailers are now hearing reports from Washington that the use of fuel containing 15% ethanol is authorized.

Currently, there is essentially only one organization that certifies our equipment – Underwriters Laboratories (UL). UL establishes specifications for safety and compatibility and runs tests on equipment submitted by manufacturers for UL listing. Once satisfied, UL lists the equipment as meeting a certain standard for a certain fuel.

Prior to last spring, however, UL had not listed a single motor fuel dispenser (a.k.a. pump) as compatible with any fuel containing more than 10% ethanol. This means that any dispenser in the market prior to last spring – which would represent the vast majority of my dispensers – is not legally permitted to sell E15, E85 or anything above 10% ethanol – even if it is technically able to do so safely.

If I use non-listed equipment, I am in violation of OSHA regulations and may be violating my tank insurance policies, state tank fund program requirements, bank loan covenants, and potentially other local regulations. Furthermore, if my store has a petroleum release from that equipment, I could be sued on the grounds of negligence for using non-listed equipment, which would cost me significantly more than the expense of cleaning up the spill.

So, if none of my dispensers are UL-listed for E15, what are my options?

Unfortunately, UL will not re-certify any equipment. Only those units manufactured after UL certification is issued are so certified – all previously manufactured devices, even if they are the same model, are subject only to the UL listing available at the time of manufacture. This means that no retail dispensers, except those produced after UL issued a listing last spring, are legally approved for E10+ fuels.

In other words, the only legal option for me to sell E15 is to replace my dispensers with the specific models listed by UL. On average, a retail motor fuel dispenser costs approximately $20,000.

It is less clear how many of my underground storage tanks and associated pipes and lines would require replacement. Many of these units are manufactured to be compatible with high concentrations of ethanol, but they may not be listed as such. In addition, the gaskets and seals may need to be replaced to ensure the system does not pose a threat to the environment. If I have to crack open concrete to replace seals, gaskets or tanks, my costs can escalate rapidly and can easily exceed $100,000 per location.

MISFUELING

The second major issue I must consider is the effect of the fuel on customer engines and vehicles. Having dealt with engine problems associated with fuel contamination following the introduction of E10, I am very concerned about the potential effect a fuel like E15 would have on vehicles. The EPA decision concerning E15 is very challenging. Under EPA’s partial waiver, only vehicles manufactured in model year 2001 or more recently are authorized to fuel with E15. Older vehicles, motorcycles, boats, and small engines are not authorized to use E15.

How am I supposed to prevent the consumer from buying the wrong fuel? I can deal with the responsibility for fuel quality and contamination control, but self-service customer misfueling is a much more difficult challenge to control.

In the past, when we have introduced new fuels – like unleaded gasoline or ultra-low sulfur diesel – they were backwards compatible; i.e. older vehicles could use the new fuel. In addition, newer vehicles were required to use the new fuel, creating a guaranteed market demand.

Such is not the case with E15 – legacy vehicles are not permitted to use the new fuel. Doing so will violate Clean Air Act standards and could cause engine performance or safety issues. Yet, there are no viable options to retroactively install physical countermeasures to prevent misfueling. Consequently, my risk of liability if a customer uses E15 in the wrong engine – whether accidentally or intentionally – is significant.

First of all, I could be fined under the Clean Air Act for misuse of the fuel – this has happened before. When lead was phased out of gasoline, unleaded fuel was more expensive than leaded fuel. To save a few cents per gallon, some consumers physically altered their vehicle fill pipes to accommodate the larger leaded nozzles either by using can openers or by using a funnel while fueling. Retailers had no ability to prevent such behavior, but the EPA often levied fines against retailers for not physically preventing the consumer from bypassing the misfueling countermeasures.

My understanding is EPA has told NACS that the agency would not be targeting retailers for consumer misfueling. But that provides me with little comfort – EPA policy can change in the absence of specific legal safeguards. Further, the Clean Air Act includes a private right of action and any citizen can file a lawsuit against a retailer who does not prevent misfueling. Whether the retailer is found guilty does not change the fact that defending against such claims can be very expensive.

Finally, I am very concerned about the effect of E15 in the wrong engine. Using the wrong fuel could void an engine’s warranty, cause engine performance problems or even compromise the safety of some equipment. A consumer may seek to hold me liable for these situations even if my company was not responsible for the misfueling. Defending my company against such claims is expensive not only financially but also from a customer-relations perspective.

GENERAL LIABILITY EXPOSURE

Retailers are also concerned about long-term liability exposure. Our industry has experience with being sued for selling fuels that were approved at the time but later ruled defective. What assurances are there that such a situation will not repeat itself with new fuels being approved for commerce?

For example, E15 is approved only for certain engines and its use in other engines is prohibited by the EPA due to associated emissions and performance issues. What if E15 does indeed cause problems in non-approved engines or even in approved engines? What if in the future the product is determined defective, the rules are changed and E15 is no longer approved for use in commerce? There is significant concern that such a change in the law would be retroactively applied to any who manufactured, distributed, blended or sold the product in question.

Retailers are hesitant to enter new fuel markets without some assurance that our compliance with the law today will protect us from retroactive liability should the law change in the future. It seems reasonable that law abiding citizens should not be held accountable if the law changes in the future. Congress could help overcome significant resistance to new fuels by providing assurances that market participants will only be held to account for the laws as they exist at the time and not subject to liability for violating a future law or regulation.

MARKET ACCEPTANCE

The final challenge we face is the rate at which consumers will adopt the new fuels. Assuming all the other issues are resolved, I have to ask myself: Will my customers purchase the fuel? It is important to note that this is the first fuel transition in which no person is required to purchase the fuel, unlike prior transitions to unleaded gasoline and ultra-low sulfur diesel fuel.

In the situation facing E15, only a subset of the population (about 65% of vehicles) is authorized to buy it. Yet the auto industry is not fully supportive of its use in anything except flexible fuel vehicles (about 3% of vehicles). This situation could dramatically reduce consumer acceptance. The risk of misfueling and potentially alienating customers if E15 causes performance issues also is a serious concern.

With these unknowns, how can I calculate an accurate return on my investment to install E15 compatible equipment? Again, this is not like offering a new candy bar – to sell E15 I will likely have to spend significant resources.

As new fuels enter the market, their compatibility with vehicles and their performance characteristics compared to traditional gasoline will be critically important to determining consumer acceptance. In addition, the cost of entry for retailers will influence the return on investment calculations required to determine whether to invest in the new fuel.

OPTIONS

NACS believes there are options available to Congress to help the market overcome these challenges. I have referenced E15 in this testimony because it is a fuel with which we are all familiar due to its current consideration at EPA. However, E15 alone will not satisfy the renewable fuel objectives of the country. Other products must be brought to market, and how they interact with the refueling infrastructure and the consumer’s vehicles should be critical considerations for Congress when deciding whether to support their development and introduction.

Regardless of which fuels are introduced in the future, the following recommendations can help lower the cost of entry and give retailers the regulatory and legal certainty they need to offer these new fuels to consumers:

First, because UL will not retroactively certify any equipment, Congress should authorize an alternative method for certifying legacy equipment. Such a method would preserve the protections for environmental health and safety, but eliminate the need to replace all equipment simply because the primary testing laboratory’s certification policy does not allow it to re-evaluate legacy equipment. NACS supported legislation introduced in the House last Congress by Reps. Mike Ross (D-AR) and John Shimkus (R-IL) as H.R. 5778. This bill directed the EPA to develop guidelines for determining the compatibility of equipment with new fuels and stipulated that equipment satisfying those guidelines would thereby satisfy all laws and regulations concerning compatibility.

Second, Congress can require EPA to issue labeling regulations for fuels that are authorized for only a subset of vehicles, and ensure that retailers who comply with those regulations have met their obligations under the Clean Air Act and are protected from violations or engine warranty claims if a self-service customer ignores the notifications and misfuels a non-authorized engine. H.R. 5778 also included provisions to achieve these objectives.

Third, Congress can provide market participants with regulatory and legal certainty that compliance with current applicable laws and regulations concerning the manufacture, distribution, storage and sale of new fuels will protect them from retroactive liability should the laws and regulations change at some time in the future.

Finally, Congress should evaluate the prospects for the marketing of infrastructure-compatible fuels and support the development of such fuels. These could aid compliance with the renewable fuels standard and save retailers, engine makers and consumers billions of dollars. Policymakers might consider establishing characteristics that new fuels must possess so that equipment and engines can be manufactured or retrofitted to accommodate whichever new fuel provides the greatest benefit to consumers and the economy.

If Congress takes action to lower the cost of entry and to remove the threat of unreasonable liability, more retailers may be willing to take a chance and offer a new renewable fuel. By lowering the barriers to entry, Congress will give the market an opportunity to express its will and allow retailers to offer consumers more choice. If consumers reject the new fuel, the retailer can reverse the decision without sacrificing a significant investment, but new fuels will be given a better opportunity to successfully penetrate the market.

Serial No. 112–159. July 10, 2012. The American energy initiative part 23: A focus on Alternative Fuels and vehicles. House of Representatives. 210 pages.

Jack Gerard, President and CEO of the American Petroleum Institute.

Over the past 7 years, the two RFS laws passed in 2005 and 2007 have substantially expanded the role of renewables in America. Biofuels are now in almost all gasoline. While API supports the continued appropriate use of ethanol and other renewable fuels, the RFS law has become increasingly unrealistic, unworkable, and a threat to consumers. It needs an overhaul. Most of the problems relate to the law’s volume requirements. These mandates call for blending increasing amounts of renewable fuels into gasoline and diesel. Although we are already close to blending enough ethanol to reach a 10 percent concentration in every gallon of gasoline sold in America, which is the maximum known safe level, the required volumes will more than double over the next 10 years. The E10, or 10 percent ethanol blend, that we consume today could, by virtue of RFS volume requirements, become at least an E20 blend in the future. This would present an unacceptable risk to billions of dollars in consumer investment in vehicles, the vast majority of which were designed, built, and warranted to operate on a maximum blend of E10.

It also would put at risk billions of dollars of gasoline station equipment in thousands of retail outlets across America, most owned by small independent businesses. I believe well over 60 percent of retail establishments in this area are Ma and Pa operations.

Vehicle research conducted by the auto-oil Coordinating Research Council shows that E15 could also damage the engines of millions of cars and light trucks, with estimates exceeding five million vehicles on the road today. E20 blends may have similar, if not worse, compatibility issues with engines and service station equipment.

The RFS law also requires increasing use of cellulosic ethanol, an advanced form of ethanol that can be made from a broader range of feedstocks. The problem is, you can’t buy the fuel yet because no one is making it commercially. While EPA could waive that provision, it has decided to require refiners to purchase credits for this nonexistent fuel, which will drive up costs and potentially hurt consumers. Mandating the use of fuels that do not exist is absurd on its face and is inexcusably bad public policy.

To date, E85 has faced low consumer acceptance: FFV owners use E85 less than 1% of the time. The fuel economy of an FFV operated on E85 is approximately 25-30% lower than when it is fueled with gasoline, because of ethanol’s lower energy content. Also, fewer than 2% of retail gasoline stations offer E85, which has high installation costs.

In 2010 and 2011, EPA approved the use of E15 for a portion of the motor vehicle fleet in order to accommodate the RFS law’s volume increases. We believe these actions were premature and unlawful, and present an unacceptable risk to billions of dollars in consumer investments in vehicles. They also put at risk billions of dollars of gasoline station pump equipment in scores of thousands of retail outlets across America, most owned by small independent businesses. E15 is a different transportation fuel, well outside the range for which the vast majority of U.S. vehicles and engines have been designed and warranted. E15 is also outside the range for which service station pumping equipment has been listed and proven to be safe and compatible, and it conflicts with existing worker and public safety laws outlined in OSHA regulations and fire codes. EPA should not have proceeded with E15, especially before a thorough evaluation was conducted to assess the full range of short- and long-term impacts of increasing the amount of ethanol in gasoline on the environment, on engine and vehicle performance, and on consumer safety.

Research on higher blends was already underway when EPA approved E15 in 2010 and 2011. In response to the passage of EISA in 2007, the oil and natural gas industry, the auto industry, and other stakeholders, including EPA and DOE, recognized in early 2008 that substantial research was needed to assess the impact of higher ethanol blends, including the compatibility of ethanol blends above 10% (E10+) with the existing fleet of vehicles and small engines.
Through the Coordinating Research Council (CRC), the oil and auto industries developed and funded a comprehensive multi-year testing program prior to the biofuels industry’s E15 waiver application. API worked closely with the auto and off-road engine industries and with EPA and DOE to share and coordinate research plans. Yet EPA approved the E15 waiver request before this research effort was finished and the results thoroughly evaluated. The potential for harm from that decision is substantial, as suggested by the results of the research studies completed to date, including testing performed by DOE’s National Renewable Energy Laboratory and by the CRC. The DOE research shows that an estimated half of existing service station pumping equipment may not be compatible with a 15% ethanol blend. The CRC research shows that E15 could also damage the engines of millions of cars and light trucks.

E20 may have similar, if not worse, compatibility issues with engines and service station equipment.

JOSEPH H. PETROWSKI. Gulf Oil Group.

We are the Nation’s eighth largest convenience retailer of petroleum products and convenience items, operating in over 13 States. Our wholesale oil division, Gulf Oil, carries and merchandises over 350,000 barrels of petroleum products and biofuels across 29 States, and our $13 billion in revenue places us among the top 50 private companies in the country. We employ 8,000 people.

We do not drill, we do not refine petroleum products. What we care to sell are products that our customers want to buy that are most economic for them to achieve their desired transport, heating, and other energy uses in a lawful manner.

In addition to selling petroleum products, which are our primary products, we blend over 1 million gallons a day of biofuels across our system, and we have just purchased 24 Class A trucks that run on natural gas to deliver our fuel products to our stations and stores.

We believe that a sound energy policy rests on four bedrocks. The first is diverse fuel sources, and there are two reasons for that. First, the future is unknowable. The new shale technology that has taken over the natural gas industry was unheard of more than 2 decades ago. Technology and events make it impossible for us to know where we are going, so betting any of our future on a single source of fuel would be a mistake. Second, we believe diversity in all systems ensures health and stability. And so we look for diversity in fuel, not only by fuel type, but also by making sure that we are not concentrated in taking it from one region, particularly the Middle East and other unstable regions.

I do want to point out to all the members that we have billions, hundreds of billions of dollars invested in terminals, gas stations, barges, transportation, and we have to live with the realities of the marketplace and the particulars.

America’s love affair with the automobile is not going away. Neither is the need for transportation fuels that underpin the economy and create jobs. In a country as vast as ours with a density of 79 people per square mile (as opposed to the Netherlands with 1300 people per square mile), the cost of transport is central to economic health.

When total national energy costs exceed 16% of GDP, a recession or worse is almost always the result. The United States’ current account trade deficit for all energy products recently exceeded $1 trillion, and while it has currently been reduced to one half that amount on an annualized basis, we look forward to the day when the United States is a net energy exporter. Not only will that be positive for GDP and job growth, but it will position us to revitalize our industrial production, especially in energy-intensive industries, with an eye toward value-added product exports. And no policy would be more beneficial for the spread of world democracy.

Our industry is dominated by small businesses. In fact, of the 120,950 convenience stores that sell fuel, almost sixty percent of them are single-store companies – true mom and pop operations. Many of these companies sell fuel under the brand name of their fuel supplier. This has created a common misperception in the minds of many policymakers and consumers that the large integrated oil companies own these stations. The reality is that the majors are leaving the retail marketplace and today own and operate fewer than 2% of the retail locations. Although a store may sell a particular brand of fuel associated with a refiner, the vast majority are independently owned and operated like mine. When people pull into an Exxon or a BP station, the odds are good that they are in fact refueling at a small mom-and-pop operation.

THE BLEND WALL AND THE NEED FOR A CONGRESSIONAL FIX. Since the enactment of the Energy Independence and Security Act (EISA) of 2007, we have heard much about the impending arrival of the so-called “blend wall” – the point at which the market cannot absorb any additional renewable fuels. Most of the fuel sold in the United States today is blended with 10% ethanol. If 10% ethanol were blended into every gallon of gasoline sold in the nation in 2011 (133.9 billion gallons), the market would reach a maximum of 13.39 billion gallons of ethanol. However, the 2012 statutory mandate for the RFS is 15.2 billion gallons. Meanwhile, the market for higher blends of ethanol (E85) for flexible fuel vehicles (FFVs) has not developed as rapidly as some had hoped. Clearly, we have reached the blend wall.
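The blend-wall arithmetic can be checked with a few lines of Python. This is a minimal sketch, assuming (as the testimony does) that every gallon of gasoline is E10, that 2011 gasoline sales were 133.9 billion gallons (the figure implied by the 13.39 billion gallon result), and that the 2012 RFS volume can be compared directly with ethanol blended into gasoline; it ignores E85, E15, and any part of the mandate met by biodiesel or other renewable fuels. The constant and function names are illustrative, not taken from any official model.

```python
# Blend-wall arithmetic using the figures quoted in the testimony.
# Assumptions (illustrative only): every gallon of gasoline is E10, and the
# 2012 RFS volume is compared directly with ethanol blended into gasoline
# (E85, E15 and biodiesel are ignored).

GASOLINE_2011_BGAL = 133.9      # U.S. gasoline sold in 2011, billion gallons
E10_ETHANOL_SHARE = 0.10        # ethanol fraction of E10
RFS_2012_MANDATE_BGAL = 15.2    # 2012 statutory RFS volume, billion gallons


def blend_wall(gasoline_bgal: float, ethanol_share: float) -> float:
    """Most ethanol (billion gallons) the gasoline pool can absorb at a given blend level."""
    return gasoline_bgal * ethanol_share


wall = blend_wall(GASOLINE_2011_BGAL, E10_ETHANOL_SHARE)
shortfall = RFS_2012_MANDATE_BGAL - wall
# Average ethanol share every gallon would need to carry to absorb the full mandate.
needed_share = RFS_2012_MANDATE_BGAL / GASOLINE_2011_BGAL

print(f"E10 blend wall:         {wall:.2f} billion gallons")
print(f"Mandate above the wall: {shortfall:.2f} billion gallons")
print(f"Blend level needed:     {needed_share:.1%} ethanol")
```

With these inputs the script reports a wall of 13.39 billion gallons, about 1.81 billion gallons below the 2012 mandate; absorbing the full mandate in gasoline alone would take roughly 11.4% ethanol in every gallon, above the E10 level that most existing vehicles and listed dispensers were built for.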

EPA recently authorized the use of E15 in certain vehicles. However, this has so far done very little to expand the use of renewable fuels, due largely to retailers’ liability and compatibility concerns, as well as state and local restrictions on selling E15. Congress can do something immediately to mitigate other obstacles preventing new fuels from entering the market. H.R. 4345, the Domestic Fuels Protection Act of 2012, currently before the Subcommittee on Environment and the Economy, addresses three of these obstacles: infrastructure compatibility, liability for consumer misuse of fuels, and retroactive liability should the rules governing a fuel change in the future.

The reason the retail market is unable to easily accommodate additional volumes of renewable fuels begins with the equipment found at retail stations. By law, all equipment used to store and dispense flammable and combustible liquids must be certified by a nationally recognized testing laboratory. These requirements are found in regulations of the Occupational Safety and Health Administration. Currently, there is essentially only one organization that certifies such equipment, Underwriters Laboratories (UL). UL establishes specifications for safety and compatibility and runs tests on equipment submitted by manufacturers for UL listing. Once satisfied, UL lists the equipment as meeting a certain standard for a certain fuel. Prior to 2010, UL had not listed a single motor fuel dispenser (aka a gas pump) as compatible with any fuel containing more than 10% ethanol. This means that any dispenser in the market prior to early 2010 is not legally permitted to sell E15, E85 or anything above 10% ethanol – even if it is able to do so safely.

If a retailer fails to use listed equipment, that retailer is violating OSHA regulations and may be violating tank insurance policies, state tank fund program requirements, bank loan covenants, and potentially other local regulations. In addition, the retailer could be found negligent per se based solely on the fact that his fuel dispensing system is not listed by UL. This brings us to the primary challenge: if no dispenser prior to early 2010 was listed as compatible with fuels containing more than ten percent ethanol, what options are available to retailers that want to sell these fuels? To comply with the law, retailers wishing to sell E10+ fuels can only use equipment specifically listed by UL as compatible with such fuels. Because UL did not list any equipment as compatible with E10+ fuels until 2010, only those units produced after that date can legally sell E10+ fuels. All previously manufactured devices, even if they are the exact same model using the exact same materials, are subject only to the UL listing available at the time of manufacture. (UL policy prevents retroactive certification of equipment.)

Practically speaking, this means that a vast majority of retailers wishing to sell E10+ fuels must replace their dispensers. This costs an average of $20,000 per dispenser. It is less clear how many underground storage tanks and associated pipes and lines would require replacement. Many of these units are manufactured to be compatible with high concentrations of ethanol, but they may not be listed as such. Further, if there are concerns with gaskets and seals in dispensers, care must be given to ensure the underground gaskets and seals do not pose a threat to the environment. Once a retailer begins to replace underground equipment, the cost can escalate rapidly and can easily exceed $100,000 per location.

The second major issue facing retailers is the potential liability associated with improperly fueling an engine with a non-approved fuel. The EPA decision concerning E15 puts this issue into sharp focus for retailers. Under EPA’s partial waiver, only vehicles manufactured in model year 2001 or more recently are authorized to fuel with E15. Older vehicles, motorcycles, boats, and small engines are not authorized to use E15. For the retailer, bifurcating the market in this way presents serious challenges. For instance, how does the retailer prevent the consumer from buying the wrong fuel? Typically, when new fuels are authorized they are backwards compatible, so this is not a problem. In other words, older vehicles can use the new fuel. When EPA phased lead out of gasoline in the late 1970s and early 1980s, for example, older vehicles were capable of running on unleaded fuel; newer vehicles, however, were required to run only on unleaded. These newer vehicles’ gasoline tanks were equipped with smaller fill pipes into which a leaded nozzle could not fit – likewise, unleaded dispensers were equipped with smaller nozzles. E15 is very different: legacy engines are not permitted to use the new fuel. Doing so will violate Clean Air Act standards and could cause engine performance or safety issues. Yet there are no viable options to retroactively install physical countermeasures to prevent misfueling.

Retailers could be subject to penalties under the Clean Air Act for not preventing a customer from misfueling with E15. This concern is not without justification. In the past, retailers have been held accountable for the actions of their customers. For example, because unleaded fuel was more expensive than leaded fuel, some consumers physically altered their vehicle fill pipes to accommodate the larger leaded nozzles, either by using can openers or by using a funnel while fueling. We may see similar behavior in the future given the high price of gasoline relative to ethanol. As in the past, the retailer will not be able to prevent such practices; yet in the case of leaded gasoline, the EPA levied fines against retailers for not physically preventing consumers from bypassing the misfueling countermeasures. To EPA’s credit, it has asserted in meetings with NACS and SIGMA that it would not target retailers for consumer misfueling. But that provides little comfort to retailers, because EPA policy can change in the absence of specific legal safeguards. Additionally, the Clean Air Act includes a private right of action, so any citizen can file a lawsuit against a retailer that does not prevent misfueling. Whether or not the retailer is found liable, defending against such claims is very expensive. Further, consumers may seek to hold the retailer liable for their own actions. Using the wrong fuel could void an engine’s warranty, cause engine performance problems, or even compromise the safety of some equipment. In any of these situations, some consumers may seek to hold the retailer accountable even when the retailer was not responsible for the improper use of the fuel. Once again, defending such claims is expensive.

An EPA decision to approve E15 for 2001 and newer vehicles is not consistent with the terms of most warranty policies issued with these affected vehicles. Consequently, while using E15 in a 2009 vehicle might be lawful under the Clean Air Act, it may in fact void the warranty of the consumer’s vehicle. Retailers have no mechanism for ensuring that consumers abide by their vehicle warranties – it is the consumer’s responsibility to comply with the terms of their contract with their vehicle manufacturer. Therefore, H.R. 4345 stipulates that no person shall be held liable in the event a self-service customer introduces a fuel into their vehicle that is not covered by their vehicle warranty.

General Liability Exposure

Finally, there are widespread concerns throughout the retail community and among our product suppliers that the rules of the game may change and leave us exposed to significant liability. For example, E15 is approved only for certain engines, and the EPA prohibits its use in other engines because of associated emissions and performance issues. What if E15 does indeed cause problems in non-approved engines, or even in approved engines? What if the product is later determined to be defective, the rules are changed, and E15 is no longer approved for use in commerce? There is significant concern that such a change in the law would be applied retroactively to anyone who manufactured, distributed, blended, or sold the product in question.

Contrary to popular misconception, fuel marketers prefer cheap gasoline. The less the consumer pays at the pump, the more money the consumer has to spend in our stores, where our profit margins are significantly greater.

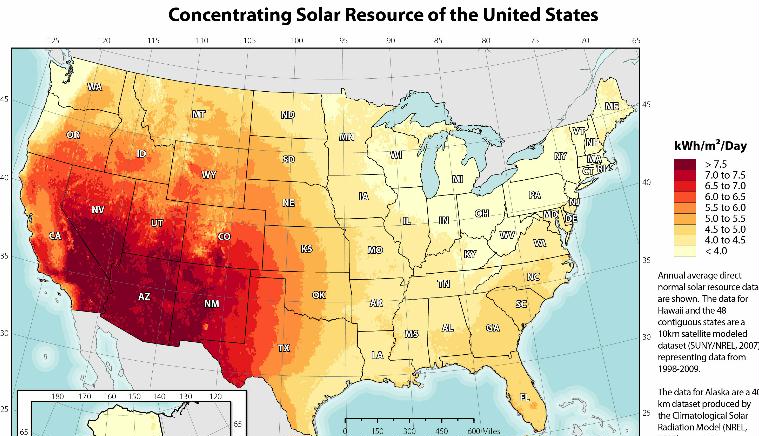

![Concentrating Solar Power Average Daily Solar Radiation Per Month, 1961-1990 (NREL 2011b)](https://energyskeptic.com/wp-content/uploads/2015/03/CSP-seasonal-6-USA-maps-jan-mar-may-jul-sep-nov.jpg)

Preface. Since 90% of international goods move by ship, I was curious about how much fuel ships burn. It’s a lot: the very large container ship CMA CGM Benjamin Franklin above, which can carry 18,000 20-foot containers, carries approximately 4.5 million gallons of fuel oil, which takes up 16,000 cubic meters (FW 2020). That is as much fuel as 300,000 cars with 15-gallon tanks.
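The figures above can be checked with a little unit conversion. This is a rough sketch, assuming US liquid gallons (1 gallon = 3.785411784 liters exactly); the helper names are made up for illustration. By this arithmetic, 4.5 million gallons works out to roughly 17,000 cubic meters, a bit more than the 16,000 cubic meters quoted, so both numbers are best read as approximations.

```python
# Rough check of the ship-fuel figures quoted above.
# Assumption: US liquid gallons (1 gal = 3.785411784 L exactly).
LITERS_PER_US_GALLON = 3.785411784


def gallons_to_cubic_meters(gallons: float) -> float:
    """Convert US gallons to cubic meters (1 m^3 = 1000 L)."""
    return gallons * LITERS_PER_US_GALLON / 1000.0


def car_tank_equivalents(gallons: float, tank_size_gal: float = 15.0) -> float:
    """Number of car fuel tanks of the given size that the volume would fill."""
    return gallons / tank_size_gal


# Approximate fuel capacity quoted for the CMA CGM Benjamin Franklin (FW 2020).
ship_fuel_gal = 4.5e6

print(f"{gallons_to_cubic_meters(ship_fuel_gal):,.0f} m^3")     # prints 17,034 m^3
print(f"{car_tank_equivalents(ship_fuel_gal):,.0f} car tanks")  # prints 300,000 car tanks
```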
