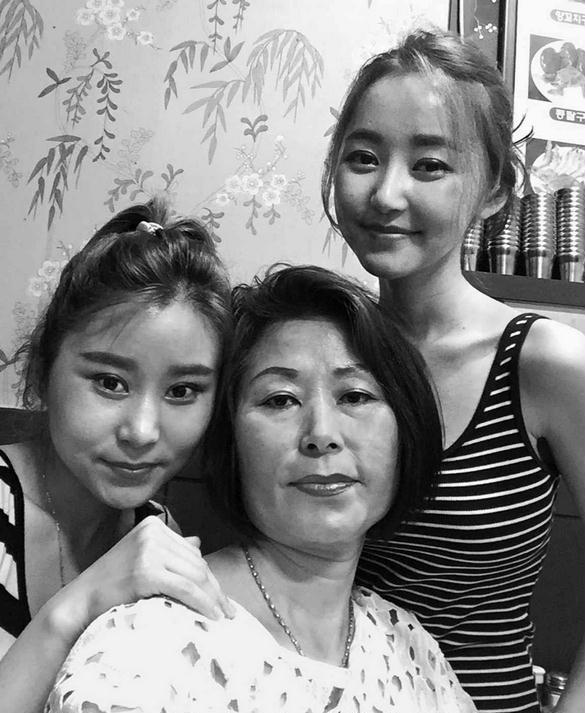

From left to right: Eunmi (sister), mother, and author Yeonmi Park. Seoul, 2015.

Alice Friedemann www.energyskeptic.com Author of “Life After Fossil Fuels: A Reality Check on Alternative Energy”, “When Trucks Stop Running: Energy and the Future of Transportation”, “Barriers to Making Algal Biofuels”, and “Crunch! Whole Grain Artisan Chips and Crackers”. Women in ecology. Podcasts: WGBH, Jore, Planet: Critical, Crazy Town, Collapse Chronicles, Derrick Jensen, Practical Prepping, Kunstler 253 & 278, Peak Prosperity. Index of best energyskeptic posts

***

Park, Yeonmi. 2015. In Order to Live: A North Korean Girl’s Journey to Freedom. With Maryanne Vollers, Penguin Press.

Excerpts from this 290-page book (some of it reworded/shortened):

INTRODUCTION TO LIFE IN NORTH KOREA

I wasn’t dreaming of freedom when I escaped from North Korea. I didn’t even know what it meant to be free. All I knew was that if my family stayed behind, we would probably die—from starvation, from disease, from the inhuman conditions of a prison labor camp. The hunger had become unbearable; I was willing to risk my life for the promise of a bowl of rice.

In the North there are no words for things like “shopping malls,” “liberty,” or even “love,” at least as the rest of the world knows it. The only true “love” we can express is worship for the Kims, a dynasty of dictators who have ruled North Korea for three generations. The regime blocks all outside information, all videos and movies, and jams radio signals. There is no World Wide Web and no Wikipedia. The only books are filled with propaganda telling us that we live in the greatest country in the world, even though at least half of North Koreans live in extreme poverty and many are chronically malnourished.

My former country doesn’t even call itself North Korea—it claims to be Chosun, the true Korea, a perfect socialist paradise where 25 million people live only to serve the Supreme Leader, Kim Jong Un.

Once the sun went down, you couldn’t see anything at all. In our part of North Korea, it was normal to go for weeks and even months without any electricity, and candles were very expensive.

The unpaved lanes between houses were too narrow for cars, although this wasn’t much of a problem because there were so few cars. People in our neighborhood got around on foot, or for the few who could afford one, on bicycle or motorbike.

You could find a few manufactured dolls and other toys in the market, but they were usually too expensive. Instead we made little bowls and animals out of mud, and sometimes even miniature tanks; homemade military toys were very big in North Korea. But we girls were obsessed with paper dolls and spent hours cutting them out of thick paper, making dresses and scarves for them out of scraps.

There weren’t garbage trucks churning, horns honking, or phones ringing everywhere. All I could hear were the sounds people were making: women washing dishes, mothers calling their children, the clink of spoons and chopsticks on rice bowls.

There was no music blaring in the background, no eyes glued to smartphones back then. But there was human intimacy and connection, something that is hard to find in the modern world I inhabit today.

At our house in Hyesan, our water pipes were almost always dry, so my mother usually carried our clothes down to the river and washed them there. When she brought them back, she put them on the warm floor to dry.

Because electricity was so rare in our neighborhood, whenever the lights came on people were so happy they would sing and clap and shout. Even in the middle of the night, we would wake up to celebrate. When you have so little, just the smallest thing can make you happy—and that is one of the very few features of life in North Korea that I actually miss. Of course, the lights would never stay on for long. When they flickered off, we just said, “Oh, well,” and went back to sleep.

Even when the electricity came on the power was very low, so many families had a voltage booster to help run the appliances. These machines were always catching on fire, and one March night it happened at our house while my parents were out. I was just a baby, and all I remember is waking up and crying while someone carried me through the smoke and flames.

Our home was destroyed by the fire, but right away my father rebuilt it with his own hands. After that, we planted a garden in our small fenced yard. My mother and sister weren’t interested in gardening, but my father and I loved it. We put in squash and cabbage and cucumbers and sunflowers. My father also planted beautiful fuchsia flowers we called “ear drops” along the fence. I adored draping the long delicate blossoms from my ears and pretending they were earrings.

The best day of every month was Noodle Day, when my mother bought fresh, moist noodles that were made in a machine in town. We wanted them to last a long time, so we spread them out on the warm kitchen floor to dry. It was like a holiday for my sister and me because we would get to sneak a few noodles and eat them while they were still soft and sweet. In the earliest years of my life, before the worst of the famine that struck North Korea in the mid-1990s had gripped our city, our friends would come around and we would share the noodles with them. In North Korea, you are supposed to share everything. But later, when times were much harder for our family and for the country, my mother told us to chase the children away. We couldn’t afford to share anything. During the good times, a family meal would consist of rice, kimchi, some kind of beans, and seaweed soup. But those things were too expensive to eat during the lean times. Sometimes we would skip meals, and often all we had to eat was a thin porridge of wheat or barley, beans, or black frozen potatoes ground and made into cakes filled with cabbage.

I was too young to realize how desperate things were becoming in the grown-up world, as my family tried to adapt to the massive changes in North Korea during the 1990s. After my sister and I were asleep, my parents would sometimes lie awake, sick with worry, wondering what they could do to keep us all from starving to death.

Anything I did overhear, I learned quickly not to repeat. I was taught never to express my opinion, never to question anything. I was taught to simply follow what the government told me to do or say or think. I actually believed that our Dear Leader, Kim Jong Il, could read my mind, and I would be punished for my bad thoughts. And if he didn’t hear me, spies were everywhere, listening at the windows and watching in the school yard. We all belonged to inminban, or neighborhood “people’s units,” and we were ordered to inform on anyone who said the wrong thing. We lived in fear, and almost everyone—my mother included—had a personal experience that demonstrated the dangers of talking.

I was only nine months old when Kim Il Sung died on July 8, 1994. North Koreans worshipped the 82-year-old “Great Leader.” At the time of his death, Kim Il Sung had ruled North Korea with an iron grip for almost five decades, and true believers—my mother included—thought that Kim Il Sung was actually immortal. His passing was a time of passionate mourning, and also uncertainty in the country.

During that time, one of my father’s relatives was visiting from northeast China. The real cause of death, he said, was hwa-byung, a common diagnosis in both North and South Korea that roughly translates into “disease caused by mental or emotional stress.”

When I was growing up, we didn’t talk about what our families did during those times. In North Korea, any history can be dangerous. What I know about my father’s side of the family comes from the few stories my father told my mother.

After the Japanese surrendered on August 15, 1945, the Soviet army swept into the northern part of Korea, while the American military took charge of the South—and this set the stage for the agony my country has endured for more than seventy years. An arbitrary line was drawn along the 38th parallel, dividing the peninsula into two administrative zones: North and South Korea. The United States flew an anti-Communist exile named Syngman Rhee into Seoul and ushered him into power as the first president of the Republic of Korea. In the North, Kim Il Sung, who had by then become a Soviet major, was installed as leader of the Democratic People’s Republic of Korea, or DPRK. The Soviets quickly rounded up all eligible men to establish a North Korean military force. My grandfather was taken from his job at city hall and turned into an officer in the People’s Army.

By 1949, both the United States and the Soviet Union had withdrawn their troops and turned the peninsula over to the new puppet leaders. It did not go well. Kim Il Sung was a Stalinist and an ultranationalist dictator who decided to reunify the country in the summer of 1950 by invading the South with Russian tanks and thousands of troops. In North Korea, we were taught that the Yankee imperialists started the war, and our soldiers gallantly fought off their evil invasion. In fact, the United States military returned to Korea for the express purpose of defending the South—bolstered by an official United Nations force—and quickly drove Kim Il Sung’s army all the way to the Yalu River, nearly taking over the country. They were stopped only when Chinese soldiers surged across the border and fought the Americans back to the 38th parallel. By the end of this senseless war, at least three million Koreans had been killed or wounded, millions were refugees, and most of the country was in ruins.

In 1953, both sides agreed to end the fighting, but they never signed a peace treaty. To this day we are still officially at war, and both the governments of the North and South believe that they are the legitimate representatives of all Koreans.

During the 1950s and 1960s, China and the Soviet Union poured money into North Korea to help it rebuild. The North has coal and minerals in its mountains, and it was always the richer, more industrialized part of the country. It bounced back more quickly than the South, which was still mostly agricultural and slow to recover from the war. But that started to change in the 1970s and 1980s, as South Korea became a manufacturing center and North Korea’s Soviet-style system began to collapse under its own weight. The economy was centrally planned and completely controlled by the state. There was no private property—at least officially—and all the farms were collectivized, although people could grow some vegetables to sell in small, highly controlled markets. The government provided all jobs, paid everyone’s salary, and distributed rations for most food and consumer goods.

While my parents were growing up, the distribution system was still subsidized by the Soviet Union and China, so few people were starving, but nobody outside the elite really prospered. At the same time, supply wasn’t meeting demand for the kinds of items people wanted, like imported clothing, electronics, and special foods. While the favored classes had access to many of these goods through government-run department stores, the prices were usually too high for most people to afford. Any ordinary citizen who fancied foreign cigarettes or alcohol or Japanese-made handbags would have to buy them on the black market. The usual route for those goods was from the north, through China.

My father’s older brother Park Jin was attending medical school in Hyesan, and his eldest brother, Park Dong Il, was a middle school teacher in Hamhung. Disaster struck in 1980 when Dong Il was accused of raping one of his students and attempting to kill his wife. I never learned all the details of what happened, or even if the charges were true, but he ended up being sentenced to twenty years of hard labor. In North Korea, if one member of the family commits a serious crime, everybody is considered a criminal. Suddenly my father’s family lost its favorable social and political status.

There are more than 50 subgroups within the main songbun castes, and once you become an adult, your status is constantly being monitored and adjusted by the authorities. A network of casual neighborhood informants and official police surveillance ensures that nothing you do or your family does goes unnoticed. Everything about you is recorded and stored in local administrative offices and in big national organizations, and the information is used to determine where you can live, where you can go to school, and where you can work. With a superior songbun, you can join the Workers’ Party, which gives you access to political power. You can go to a good university and get a good job. With a poor one, you can end up on a collective farm chopping rice paddies for the rest of your life. And, in times of famine, starving to death.

All of Grandfather Park’s connections could not save his career after his eldest son was convicted of attempted murder. He was fired from his job at the commissary shortly after Dong Il was sent to prison.

My father realized he would have no future unless he found a way to join the Workers’ Party. He decided to become a laborer at a local metal foundry where he could work hard and prove his loyalty to the regime. He was able to build good relationships with the people who had power at his workplace, including the party representative there. Before long, he had his membership. By that time, my father had also started a side business to make some extra money. This was a bold move, because any business venture outside of state control was illegal. But my father was unusual in that he had a natural entrepreneurial spirit and what some might call a healthy contempt for rules.

My father joined a small but growing class of black market operators who found ways to exploit cracks in the state-controlled economy. He started small. My father discovered that he could buy a carton of top-quality cigarettes for 70 to 100 won on the black market in Hyesan, then sell each cigarette for 7 to 10 won in the North Korean interior. At that time, a kilogram—2.2 pounds—of rice cost around 25 won, so cigarettes were obviously very valuable.

My father would go to the police and bribe them for a travel permit. My father traveled by train to small cities where there weren’t big black markets. He hid the cigarettes in his bags, all over his body, and in every pocket. He had to keep moving to avoid being searched by the police, who were always looking for contraband. Sometimes the police discovered him and confiscated the cigarettes or threatened to hit him with a metal stick if he didn’t turn over his money. My father had to convince the police that it was in everybody’s interest to let him make a profit so that he could keep coming back and giving them cigarettes as bribes. Often they agreed. He was a born salesman.

Father left the metal foundry to find other jobs that gave him more freedom to be away from the office for days at a time to run his businesses. In addition to the cigarettes, he bought sugar, rice, and other goods in the informal markets in Hyesan, then traveled around the country selling them for a profit. When he did business in Wonsan, a port on the East Sea, he brought back dried sand eels—tiny, skinny fish that Koreans love to eat as a side dish. He could sell them for a good profit in our landlocked province, and they became his best-selling product.

While it’s true that my grandfather and my parents stole from the government, the government stole everything from its people, including their freedom. As it turns out, my family’s business was simply ahead of its time. By the time I was born, in 1993, corruption, bribery, theft, and even market capitalism were becoming a way of life in North Korea as the centralized economy fell apart. The only thing left unchanged when the crisis was over was the regime’s brutal, totalitarian grip on political power.

We think I was even more premature because, in her seventh month, my mother was hauling coal across a railroad bridge in Hyesan. The coal transport was part of a backdoor enterprise run by my grandfather Park. After he lost his job at the commissary, my grandfather found work as a security guard at a military facility in Hyesan. The building had a stockpile of coal in one storage area, and he would let my parents in to steal it. They had to sneak in at night and carry the coal on their backs through the darkened city. It was hard work and they had to move fast, because if they were caught by the wrong policeman—meaning one they couldn’t bribe—they might end up getting arrested.

My father met his friend’s younger sister, Keum Sook. My mother. She was four years younger than my father, and her songbun status was just as poor as his, also through no fault of her own. While my father had to struggle because his brother was in prison, she was considered untrustworthy because her paternal grandfather had owned land when Korea was a Japanese colony. The stigma passed down through three generations, and when my mother was born in 1966, she was already considered a member of the “hostile” class and barred from the privileges of the elite.

The United States dropped more bombs on North Korea than it had during the entire Pacific campaign in World War II. The Americans bombed every city and village, and they kept bombing until there were no major buildings left to destroy. Then they bombed the dams to flood the crops. The damage was unimaginable, and nobody knows how many civilians were killed and maimed.

My parents knew that with each passing month it was getting harder and harder to survive in North Korea, but they didn’t know why. Foreign media were completely banned in the country, and the newspapers reported only good news about the regime—or blamed all of our hardships on evil plots by our enemies. The truth was that outside our sealed borders, the Communist superpowers that created North Korea were cutting off its lifeline. The big decline started in 1990 when the Soviet Union was breaking apart and Moscow dropped its “friendly rates” for exports to North Korea. Without subsidized fuel and other commodities, the economy creaked to a halt.

There was no way for the government to keep the domestic fertilizer factories running, and no fuel for trucks to deliver imported fertilizer to farms. Crop yields dropped sharply. At the same time, Russia almost completely cut off food aid. China helped out for a few years, but it was also going through big changes and increasing its economic ties with capitalist countries—like South Korea and the United States—so it, too, cut off some of its subsidies and started demanding hard currency for exports. North Korea had already defaulted on its bank loans, so it couldn’t borrow a penny.

Instead of changing its policies and reforming its programs, North Korea responded by ignoring the crisis. Instead of opening the country to full international assistance and investment, the regime told the people to eat only two meals a day to preserve our food resources. In his New Year’s message of 1995, the new Dear Leader, Kim Jong Il, called on the Korean people to work harder. Although 1994 had brought us “tears of blood,” he wrote, we should greet 1995 “energetically, single-mindedly, and with one purpose”—to make the motherland more prosperous.

Our problems could not be fixed with tears and sweat, and the economy went into total collapse after torrential rains caused terrible flooding that wiped out most of the rice harvest. Kim Jong Il described our national struggle against famine as “The Arduous March,” resurrecting the phrase used to describe the hardships his father’s generation had faced fighting against the Japanese imperialists. Meanwhile as many as a million North Koreans died from starvation or disease during the worst years of the famine.

HOW TO SURVIVE

When foreign food aid finally started pouring into the country to help famine victims, the government diverted most of it to the military, whose needs always came first. What food did get through to local authorities for distribution quickly ended up being sold on the black market. Suddenly almost everybody in North Korea had to learn to trade or risk starving to death.

The regime realized it had no choice but to tolerate these unofficial markets.

The new reality spelled disaster for my father. Now that everyone was buying and selling in the markets, called jangmadangs, there was too much competition for him to make a living. Meanwhile, penalties for black market activities grew harsher. As hard as my parents tried to adapt, they were having trouble selling their goods and were falling deeper into debt. My father tried different kinds of businesses. My mother and her friends had an ancient pedal sewing machine they used to patch together pieces of old clothes to make children’s clothing. My mother dressed my sister and me in these outfits; her friends sold the rest in the market.

Some people had relatives in China, and they could apply for permits to visit them. My uncle Park Jin did this at least once, but my father didn’t because the authorities frowned on it and would have paid closer attention to his business. Those who went almost always came back across the border with things to sell in makeshift stalls on the edges of the jangmadang.

Despite North Korea’s anti-capitalist ideals, there were lots of private lenders who got rich by loaning money for monthly interest. My parents borrowed from some of them to keep their business going, but after black market prices collapsed and a lot of their merchandise was confiscated or stolen, they couldn’t pay it back. Every night, the people who wanted to collect their debts came to the house while we were eating our meal. They yelled and made threats.

Finally, my father decided he couldn’t take it anymore. He knew of another way to make money, but it was very dangerous. He had a connection in Pyongyang who could get him some valuable metals—like gold, silver, copper, nickel, and cobalt—that he could sell to the Chinese for a profit.

My mother was against it. When he was selling sand eels and cigarettes, the worst that could happen was that he might have to spend all his profits on bribes, or do a short time in a reeducation camp.

“But smuggling stolen metals could get you killed.” She was even more frightened when she learned how he intended to bring the contraband to Hyesan. Every passenger train in North Korea had a special cargo car attached at the end of it called Freight Train #9. These #9 trains were exclusively for the use of Kim Jong Il to bring him specialty foods, fruits, and precious materials from different parts of North Korea, and to distribute gifts and necessity items to cadres and party officials around the country. Everything shipped in the special car was sealed in wooden crates that even the police couldn’t open to inspect. Nobody could even enter the car without being searched. My father knew somebody who worked on the train, and that man agreed to help smuggle the metals from Pyongyang to Hyesan in one of these safe compartments.

Between 1998 and 2002, my father spent most of his time in Pyongyang running the smuggling business. Usually he would be gone for nine months of the year.

When he was in town, my father entertained at our house to keep the local officials happy, including the party bosses he paid to ignore his absences from his “official” workplace.

My mother cooked big meals of rice and kimchi, grilled meat called bulgogi, and other special dishes, while my father filled everyone’s glasses to the brim with rice vodka and imported liquor. My father was a captivating storyteller with a great sense of humor.

The smugglers who brought the black market goods back and forth to China lived in low houses behind the market, along the river’s edge. I got to know this neighborhood well. When my father was in town with a shipment from Pyongyang, he would sometimes hide the metal in my little book bag, and then carry me piggyback from our house to one of the smugglers’ shacks. From there some men took the package to Chinese buyers on the other side of the river. Sometimes the smugglers would wade or walk across the Yalu River, sometimes they met their Chinese counterparts halfway. They did it at night, signaling one another with flashlights. There were so many of them doing business that each needed a special code—one, two, three flashes—so they didn’t get one another mixed up.

The soldiers who guarded the border were part of the operation by now, and they were always there to take their cut. Of course, even with the authorities looking the other way, there still were many things that you were forbidden to buy or sell. And breaking the rules could be fatal.

My own family suffered, too, as our fortune rose and fell like a cork in the ocean. In 1999, my father tried to use trucks instead of trains to smuggle metals out of Pyongyang, but there were too many expenses to pay drivers and buy gasoline, too many checkpoints and too many bribes to pay, so he ended up losing all of his money.

By the year 2000, when I turned seven years old, my father’s business was thriving. We had returned to Hyesan after my grandmother’s funeral and, before long, my family was rich—at least by our standards. We ate rice three times a day and meat two or three times a month. We had money for medical emergencies, new shoes, and things like shampoo and toothpaste that were beyond the means of ordinary North Koreans. We still didn’t have a telephone, car, or motorbike, but our lives seemed very luxurious to our friends and neighbors.

While my father was in prison, my mother came and went frequently over the next seven months, often for several weeks at a time, buying and selling watches, clothes, and used TVs, things the government didn’t care much about if they caught you.

Nobody could afford to live on wages alone. Mother bought a stall in the market, which the government was now regulating and charging fees for, taking bribes for the best spots. My uncle’s wife started a business selling fish and rice cakes, but it wasn’t very profitable.

When my mother’s big sister saw how poorly we were doing, she took me to her village deep in the countryside. There was rarely electricity, and everyone lived as if it didn’t exist. The fanciest transportation was an oxcart. My aunt had lots of chickens, and it was my job to watch the hens lay their eggs and make sure other chickens didn’t eat them and no one stole them. I also hauled wood from the forest. My aunt also grew grapes, corn, potatoes, peppers, and sweet potatoes. Pigs ate what we didn’t finish.

In 2004 my father was convicted in a secret trial and sentenced to ten years of hard labor at a felony-level prison camp. No one lived long in these places, and everyone knew it, because the regime wanted us to fear these camps, where you were no longer considered a human being. Prisoners can’t look at guards because an animal can’t look a human in the face. No visits or letters are allowed. Days are spent in hard labor with just porridge to eat, and at night prisoners are crammed into small cells where they sleep like packed fish, head to toe. Only the strongest survive their sentences.

In North Korea schoolchildren are part of the unpaid labor force that keeps the nation from total collapse. In the afternoons we were marched off for manual labor. In spring we helped the collective farms with planting, carrying stones to clear the fields, putting in the corn, and hauling water. In June and July we weeded, and in fall we picked up rice, corn, or beans missed by harvesters. We were expected to turn in everything we picked, but we had ways of hiding some anyway. We were also expected to collect rabbit pelts for the soldiers’ winter uniforms, five pelts per semester.

In Kowon my mother gave facial massages and eyebrow tattoos to women. She bought and sold video cassettes and TVs on the black market, and also traded in rabbit furs.

When I was 11 I started my own business. I bought rice vodka to bribe the guard at a state-owned orchard, who let my sister and me sneak in and pick persimmons. But we were wearing out our shoes too quickly walking to the orchard and couldn’t afford new ones.

HARD TIMES

While they worked to keep us from catastrophe, my parents often had to leave my sister and me alone. If she couldn’t find someone to look after us, my mother would have to bolt a metal bar across the door to keep us safe in the house. Sometimes she was away for so long that the sun went down and the house would get dark. After a while, I would lose my nerve and we’d cry together.

When you are always hungry, all you think about is food. We would eat only a little bit of porridge or potatoes.

The worst times were the winters. There was no running water and the river was frozen. There was one pump in town where you could collect fresh water, but you had to line up for hours to fill your bucket. One day when I was about five years old, my mother had to go off to do some business, so she took me there at six in the morning, when it was still dark, to wait in line for her. I stood outside all day in the freezing cold, and by the time she came back for me, it was dark again. I can remember how cold my hands were, and I can still see the bucket and the long line of people in front of me. She has apologized to me for doing that, but I don’t blame her for anything; it was what she had to do.

We arrived in Kowon to find that my mother’s family was also struggling to survive. Her youngest son, Jong Sik, who had been imprisoned years earlier for stealing from the state, was visiting them as well. In the labor camp he had caught tuberculosis, which was very common in North Korea. Now that there was so little food to go around, he was sick all the time and wasting away.

When my father was sober, he treated my mother like gold. But when he was drinking, it was a different story. North Korean society is by its nature tough and violent, and so are relations between men and women. The woman is expected to obey her father and her husband; males always come first in everything. When I was growing up, women could not sit at the same table with men. Many of my neighbors’ and classmates’ houses had special bowls and spoons for their fathers. It was commonplace for a husband to beat his wife. We had one neighbor whose husband was so brutal that she couldn’t click her chopsticks while she ate for fear he would hit her for making noise.

You hardly saw anyone begging in Pyongyang, just the street children we called kotjebi, who haunted the markets and train stations in every part of North Korea. The difference in Pyongyang was that whenever the kotjebi asked for food or money, the police officers came and drove them off. Whenever the train stopped, the kotjebi street children would climb up and knock on my window to beg. I could see them scrambling to pick up any spoiled food that people threw away, even moldy grains of rice. My father was worried that they would get sick eating bad food and told me we shouldn’t give them our garbage. I saw that some of those children were about my age, and many even younger. But I can’t say I felt compassion or even pity, just simple curiosity about how they managed to survive eating all that rotten food. As we pulled away from the station, some of them were still hanging on, holding tight to the undercarriage, using all their energy not to fall off the running train and looking up at me with eyes that had no curiosity or even anger. What I saw in them was a pure determination to live, an animal instinct for survival even when there seemed to be no hope.

My mother told her she had tried to call my father in Pyongyang but couldn’t reach him. That’s when she found out he had been arrested for smuggling. We walked her to the station, and as she was about to board the train, she gave us about 200 won, enough for a bit of dried beans or corn if we ran out of rice. “I’ll be back as soon as I can, and I’ll bring more food,” she said. Then she hugged us good-bye. We watched for a long time as the train pulled away. I was only eight years old, but I felt like my childhood was departing with her. She needed to travel to the capital to find out where my father was being held, and to see if she could pay enough money to get him out of jail.

Winter had arrived and the days got dark too quickly. The air was so cold that the door to our house kept freezing shut. And it was very difficult for us to figure out how to make a fire to heat the house and cook food. My mother had left us some firewood, but we weren’t very good at chopping it into small pieces. The ax was too heavy for me and I had no gloves. For a long time I picked splinters out of my hands. Early one evening, I was in charge of making the fire in the kitchen, but I used wet wood and it started to smoke too much. My sister and I were struggling to breathe, but we couldn’t open the doors or windows because they were frozen solid. We screamed and banged on the wall to our neighbor’s house, but nobody could hear us. I finally picked up the ax and broke the ice to open the door. It was a miracle we survived that terrible month. The food my mother left for us ran out quickly, and by the end of December we were nearly starving. Sometimes our friends’ mothers would feed us, but they were struggling, too. The famine was supposed to have ended in North Korea in the late 1990s, but life was still very hard, even years later.

While father was in prison, my sister and I had to drop out of school. Education is free, but students have to pay for their own supplies and uniforms, plus bring gifts of food and other items to the teachers. Besides, we had to spend all of our time just staying alive.

To wash our clothes and dishes we had to walk down to the river and break the ice. Most days we had to stand in line for tap water for cooking and drinking. The food mother left us never lasted long so we were very hungry and skinny.

In 2002-3 I had a painful rash, was dizzy, and had a bad stomach like many other children, caused by pellagra from a lack of niacin and other nutrients. A starvation diet of corn and no meat will bring on the disease, which can kill you in a few years.

Spring is the season of death when most people die of starvation, because stores of food are gone but farms produce nothing since new crops are just being planted. At least we didn’t need as much wood to burn and we could walk to the small mountains out of town and fill ourselves with bugs and wild plants. Some even tasted good, like wild clover flowers. We chewed on certain roots but didn’t swallow them, just to feel like we were putting something in our mouths. Once we chewed on a root that made our tongues swell up – we were more careful after that.

We ate dragonflies using a plastic cigarette lighter to cook their heads. Later in summer we ate roasted cicadas, a gourmet treat. Grasshoppers were the best of all which we ate fried. I also picked some leaves for rabbits we could eat. We also ate wild clover flowers which were quite good, and the white flowers of the false acacia tree that grew wild in the mountains.

The hospital had no medicines; people had to buy them on the black market themselves. In rural areas, though, there was no black market for drugs, and many people had to walk over mountains and distances an oxcart couldn’t journey, leaving most helpless in an emergency. In the country, doctors grew medicinal plants and cotton for bandages. Even in the city, bandages were washed and reused, and the same syringes were used over and over.

We moved to Kowon, where people were friendlier and there were fewer thieves. In the larger city of Hyesan there was a lot of crime when the economy collapsed, and we had to hide our property behind locked doors and dry our clothes indoors because anything left outside would be stolen, especially dogs, which are a food item in North Korea.

In Hyesan we would not share our food with neighbors, but in Kowon everyone shared with each other. You had no choice.

The power grid in the north had become so weak the train from Pyongyang had to stop before it got to Hyesan and turn around. After a while it stopped coming at all. So father could no longer bring metal from Pyongyang. My parents had nothing to sell and no one would loan them money.

In the winter our apartment was cold, so father walked to the mountains every day to look for wood to keep us warm, eating snow to fill himself up. We were hungry all the time. Skipping a meal could literally mean death, so that became my biggest fear and obsession. My parents couldn’t sleep. They were afraid they might not wake up and their children would starve to death.

THE STATE

The only books available in North Korea were published by the government and had political themes. Instead of scary fairy tales, we had stories set in a filthy and disgusting place called South Korea, where homeless children went barefoot and begged in the streets. It never occurred to me until after I arrived in Seoul that those books were really describing life in North Korea. Most of them were about our Leaders and how they worked so hard and sacrificed so much for the people. One of my favorites was a biography of Kim Il Sung. It described how he suffered as a young man while fighting the Japanese imperialists, surviving by eating frogs and sleeping in the snow.

Our Dear Leader had mystical powers. His biography said he could control the weather with his thoughts, and that he wrote fifteen hundred books during his three years at Kim Il Sung University. Even when he was a child he was an amazing tactician, and when he played military games, his team always won because he came up with brilliant new strategies every time.

In school, we sang a song about Kim Jong Il and how he worked so hard to give our laborers on-the-spot instruction as he traveled around the country, sleeping in his car and eating only small meals of rice balls. “Please, please, Dear Leader, take a good rest for us!” we sang through our tears. “We are all crying for you.” This worship of the Kims was reinforced in documentaries, movies, and shows broadcast by the single, state-run television station. Whenever the Leaders’ smiling pictures appeared on the screen, stirring sentimental music would build in the background. It made me so emotional every time. North Koreans are raised to venerate our fathers and our elders; it’s part of the culture we inherited from Confucianism. And so in our collective minds, Kim Il Sung was our beloved grandfather and Kim Jong Il was our father.

Our classrooms and schoolbooks were plastered with images of grotesque American GIs with blue eyes and huge noses executing civilians or being vanquished with spears and bayonets by brave young Korean children. Sometimes during recess from school we lined up to take turns beating or stabbing dummies dressed up like American soldiers.

In North Korea, even arithmetic is a propaganda tool. A typical problem would go like this: “If you kill one American bastard and your comrade kills two, how many dead American bastards do you have?”

We could never just say “American”—that would be too respectful. It had to be “American bastard,” “Yankee devil,” or “big-nosed Yankee.” If you didn’t say it, you would be criticized for being too soft on our enemies.

The newsreaders were going on and on about how much the Dear Leader was suffering in the cold to give his benevolent guidance to the loyal soldiers when my father snapped, “That son of a bitch! Turn off the TV.” My mother whispered furiously, “Be careful what you say around the children! This isn’t just about what you think. You’re putting all of us in danger.”

The next day mother and her best friend were visiting the monument to place more flowers when they noticed someone had vandalized the offerings. “Oh, there are such bad people in this world!” her friend said. “You are so right!” my mother said. “You wouldn’t believe the evil rumor that our enemies have been spreading.” And then she told her friend about the lies she had heard. The following day she was walking across the Cloud Bridge when she noticed an official-looking car parked in the lane below our house, and a large group of men gathered around it. She immediately knew something awful was about to happen.

The visitors were plainclothes agents of the dreaded bo-wi-bu, or National Security Agency, that ran the political prison camps and investigated threats to the regime. Everybody knew these men could take you away and you would never be heard from again.

The senior agent met my mother at our door and led her to our neighbor’s house, which he had borrowed for the afternoon. They both sat, and he looked at her with eyes like black glass. “Do you know why I’m here?” he asked. “Yes, I do,” she said. “So where did you hear that?” he said. She told him she’d heard the rumor from her husband’s Chinese uncle, who had heard it from a friend. “What do you think of it?” he said. “It’s a terrible, evil rumor!” she said, most sincerely. “It’s a lie told by our enemies who are trying to destroy the greatest nation in the world!” “What do you think you have done wrong?” he said, flatly. “Sir, I should have gone to the party organization to report it. I was wrong to just tell it to an individual.” “No, you are wrong,” he said. “You should never have let those words out of your mouth.” Now she was sure she was going to die.

When my mother sent me off to school she never said, “Have a good day,” or even, “Watch out for strangers.” What she always said was, “Take care of your mouth.” In most countries, a mother encourages her children to ask about everything, but not in North Korea. As soon as I was old enough to understand, my mother warned me that I should be careful about what I was saying. “Remember, Yeonmi-ya,” she said gently, “even when you think you’re alone, the birds and mice can hear you whisper.” She didn’t mean to scare me, but I felt a deep darkness and horror inside me.

There are more than fifty subgroups within the main songbun castes, and once you become an adult, your status is constantly being monitored and adjusted by the authorities. A network of casual neighborhood informants and official police surveillance ensures that nothing you do or your family does goes unnoticed. Everything about you is recorded and stored in local administrative offices and in big national organizations, and the information is used to determine where you can live, where you can go to school, and where you can work. With a superior songbun, you can join the Workers’ Party, which gives you access to political power. You can go to a good university and get a good job. With a poor one, you can end up on a collective farm chopping rice paddies for the rest of your life. And, in times of famine, starving to death.

In North Korea, public executions were used to teach us lessons in loyalty to the regime and the consequences of disobedience. In Hyesan when I was little, a young man was executed right behind the market for killing and eating a cow. It was a crime to eat beef without special permission. Cows were the property of the state, and were too valuable to eat because they were used for plowing fields and dragging carts, so anybody who butchered one would be stealing government property.

Although many families owned televisions, radios, and VCR players, they were allowed to listen to or watch only state-generated news programs and propaganda films, which were incredibly boring. There was a huge demand for foreign movies and South Korean television shows, even though you never knew when the police might raid your house searching for smuggled media. First they would shut off the electricity (if the power was on in the first place) so that the videocassette or DVD would be trapped in the machine when they came through the door. But people learned to get around this by owning two video players and quickly switching them out if they heard a police team coming. If you were caught smuggling or distributing illegal videos, the punishment could be severe.

Radios and televisions came sealed and permanently tuned to state-approved channels. If you tampered with them, you could be arrested and sent to a labor camp for reeducation, but a lot of people did it anyway.

I’m often asked why people would risk going to prison to watch Chinese commercials or South Korean soap operas or year-old wrestling matches. I think it’s because people are so oppressed in North Korea, and daily life is so grim and colorless, that people are desperate for any kind of escape. When you watch a movie, your imagination can carry you away for two whole hours. You come back refreshed, your struggles temporarily forgotten.

North Koreans have two stories running in their heads at all times, like trains on parallel tracks. One is what you are taught to believe; the other is what you see with your own eyes. It wasn’t until I escaped to South Korea and read a translation of George Orwell’s Nineteen Eighty-Four that I found a word for this peculiar condition: doublethink. This is the ability to hold two contradictory ideas in your mind at the same time—and somehow not go crazy. This “doublethink” is how you can shout slogans denouncing capitalism in the morning, then browse through the market in the afternoon to buy smuggled South Korean cosmetics. It is how you can believe that North Korea is a socialist paradise, the best country in the world with the happiest people who have nothing to envy, while devouring movies and TV programs that show ordinary people in enemy nations enjoying a level of prosperity that you couldn’t imagine in your dreams.

It is how you can recite the motto “Children Are King” in school, then walk home past the orphanage where children with bloated bellies stare at you with hungry eyes. Maybe deep, deep inside me I knew something was wrong. But we North Koreans can be experts at lying, even to ourselves. The frozen babies that starving mothers abandoned in the alleys did not fit into my worldview, so I couldn’t process what I saw. It was normal to see bodies in the trash heaps, bodies floating in the river, normal to just walk by and do nothing when a stranger cried for help. There are images I can never forget. Late one afternoon, my sister and I found the body of a young man lying beside a pond. It was a place where people went to fetch water, and he must have dragged himself there to drink. He was naked and his eyes were staring and his mouth wide open in an expression of terrible suffering. I had seen many dead bodies before, but this was the most horrible and frightening of all, because his insides were coming out where something—maybe dogs—had ripped him open.

There were so many desperate people on the streets crying for help that you had to shut off your heart or the pain would be too much. After a while you can’t care anymore. And that is what hell is like. Almost everybody I knew lost family in the famine. The youngest and oldest died first. Then the men, who had fewer reserves than women. Starving people wither away until they can no longer fight off diseases, or the chemicals in their blood become so unbalanced that their hearts forget to beat.

My father and I left on the morning train for Pyongyang. Even though the distance was only about 225 miles, the ride took days, because electricity shortages slowed down the train.

Just about every morning we woke up to the sound of the national anthem blaring on the government-supplied radio. Every household in North Korea had to have one, and you could never turn it off. It was tuned to only one station, and that’s how the government could control you even when you were in your own home. In the morning it played lots of enthusiastic songs with titles like “Strong and Prosperous Nation,” reminding us how lucky we were to celebrate our proud socialist life. I was surprised that the radio was on so much in Pyongyang. Back home, the electricity was usually off, so we had to wake ourselves up.

At seven in the morning, there was always a lady knocking on the door of the apartment in Pyongyang, yelling, “Get up! Time to clean!” She was head of the inminban, or “people’s unit,” that included every apartment in our part of the building. In North Korea, everybody is required to wake up early and spend an hour sweeping and scrubbing the hallways, or tending the area outside their houses. Communal labor is how we keep up our revolutionary spirit and work together as one people.

After the people of Pyongyang finish cleaning in the morning, they line up for the buses and go off to work. In the Northern provinces, not many people were going to work anymore because there was nothing left to do. The factories and mines had stopped operating and there was nothing to manufacture.

My father’s younger sister who lived in Hyesan had nothing to spare, and my uncle Park Jin was furious that my father had brought more trouble and disgrace to the family by getting himself arrested. It was so hurtful because my parents had always been generous with him and his family. Now we didn’t feel we could ask him for help.

Mother had to get back to Pyongyang to earn some money, and to try to help our father. The story she told us was terrifying: Soon after she arrived, she found he was being held at a detention and interrogation center called a ku ryujang. At first they wouldn’t let her see my father, but finally she was able to bribe one of the guards to get in. My father was in a shocking condition. He told her the police had tortured him by beating one place on his leg until it swelled up so badly that he could barely move. He couldn’t even get to the toilet. Then the guards tied him in a kneeling position with a wooden stick behind his knees, causing even more excruciating pain. They wanted to know how much he had sold to smugglers and who else was involved in the operation. But he told them very little. Later he was moved to Camp 11, the Chungsan “reeducation” labor camp northwest of Pyongyang. This type of facility is mostly for petty criminals or women who have been captured escaping North Korea. But these kinds of prisons can be as brutal as the felony-level and even the political prison camps in the North Korean gulag. In “reeducation” camps, the inmates are forced to work at hard labor all day, in the fields or in manufacturing jobs, on so little food that they have to fight over scraps and sometimes eat rats to survive.

Then they have to spend the evenings memorizing the Leaders’ speeches or engaging in endless self-criticism sessions. Although they have committed “crimes against the people,” these prisoners are thought to be redeemable, so they can be sent back to society once they have repented and finished an intensive refresher course in Kim Il Sung’s teachings. Sometimes prisoners are given a trial, sometimes not. But my mother thought it was a good sign that my father had been sent to one of these so-called lighter facilities. It gave her hope that we could all be together again soon.

The next day, though, we woke up to detectives pounding on our door. They had come to arrest my mother for questioning about my father’s crimes. But when the police officers saw that she had young children in the house, they took pity on us. They asked if she had any relatives who could take us while she was being interrogated, and she told them about my father’s brother, Uncle Jin. So the police asked the head of our inminban to find him and bring him to our house. When he arrived, they ordered him to care for us while our mother was being questioned, and then they led her away. For the next few days, she had to sit in a room at the prosecutors’ office in Hyesan day and night, writing statements about herself and my father and everything they had done wrong. Then a detective would read the pages and ask her more questions. At night they would simply lock the office door and go away. In the morning they came back to start the interrogation again. Finally, she was released. Eunmi and I were so grateful she wasn’t sent away to prison.

In 2005 my mother had to go into hiding. The police in Kowon were looking for her because she wasn’t paying them enough bribes. You can’t go where you want: the government has to give you permission to move outside your assigned district, and that requires a reason like a job transfer, marriage, or divorce. So she turned herself in to the police and was sentenced to a month of reeducation at a mobile slave labor camp building bridges and other heavy construction projects. There were only a few women but they had to work as hard as the men, and if anyone was too slow, the whole group had to run around the camp all night without any sleep as a punishment. To prevent that, the prisoners would beat one another if someone wasn’t working fast enough. The guards didn’t have to do a thing. Many were near death after a few weeks. It was the end of fall when mother was there, suffering from cold with just a thin jacket and no gloves.

My father got out of prison because he was so ill and yet had to live 8 flights up – the less money you have, the higher up you live. Since his ID card was destroyed when he went to prison he couldn’t go anywhere, so he couldn’t earn money buying metal to sell to smugglers, and had to constantly check in with police, who were keeping a close eye on him.

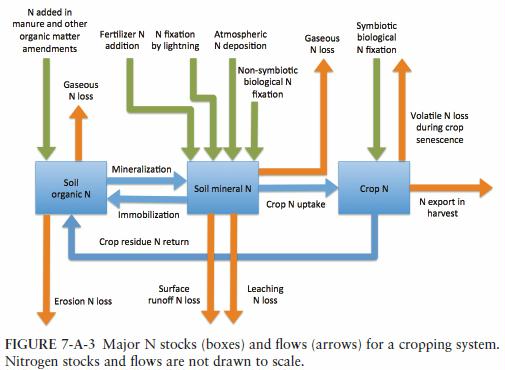

One of the big problems in North Korea was a fertilizer shortage. When the economy collapsed in the 1990s, the Soviet Union stopped sending fertilizer to us and our own factories stopped producing it. Whatever was donated from other countries couldn’t get to the farms because the transportation system had also broken down. This led to crop failures that made the famine even worse. So the government came up with a campaign to fill the fertilizer gap with a local and renewable source: human and animal waste. Every worker and schoolchild had a quota to fill. Every member of the household had a daily assignment, so when we got up in the morning, it was like a war. My aunts were the most competitive.

“Remember not to poop in school! Wait to do it here!” my aunt in Kowon told me every day.

Whenever my aunt in Songnam-ri traveled away from home and had to poop somewhere else, she loudly complained that she didn’t have a plastic bag with her to save it.

The big effort to collect waste peaked in January so it could be ready for growing season. Our bathrooms were usually far from the house, so you had to be careful neighbors didn’t steal from you at night. Some people would lock up their outhouses to keep the poop thieves away. At school the teachers would send us out into the streets to find poop and carry it back to class. If we saw a dog pooping in the street, it was like gold. My uncle in Kowon had a big dog who made a big poop—and everyone in the family would fight over it.

ESCAPE TO CHINA

Park was reluctant to write this book or talk about this part of her life even when she reached South Korea. The fate of most women who escape is to become a bride since there are so many bachelors, especially men with physical or mental problems who are the least likely to find wives. Children are not considered Chinese citizens and can’t go to school or find work when they get older.

Along the way she and her mother are raped, and Park is forced to have sex with one of the North Korean traffickers selling women. Many women are sold into prostitution as well.

Men who cross work on farms for slave wages. They don’t complain because if the farmer notifies police they’ll be arrested and sent back to North Korea.

The Chinese government doesn’t want a flood of immigrants or to upset the leadership in Pyongyang, especially since North Korea is a nuclear power on their border and a buffer between China and the American presence in the South. Refugee status is not granted; all illegal immigrants are labeled “economic migrants” and shipped home.

Park had to learn a lot: how to use a toilet and a toothbrush, wash her hands in a sink, take her first warm shower. For the first time ever the lice in her hair were gone.

Eventually she too helped sell escaped North Korean women.

She eventually finds Christian missionaries who will help her get to Mongolia and onwards to South Korea, though that barely works out.